QUBIC BLOG POST

Can an AI Have Experience? Lerchner and the Abstraction Fallacy

Written by Qubic Scientific Team

Published: May 9, 2026

Commentary on the article by Alexander Lerchner (Google DeepMind, 2026)

The Question the AI Industry Prefers Not to Formulate Properly

When AI researchers discuss whether current models or their successors might be conscious, one usually hears solid technical arguments about emergent capabilities mixed with philosophical claims presented as if they were logical consequences of the data. There is also no shortage of "quantum" or pseudo-religious perspectives in which a new galactic Demiurge emerges from mere computation.

It is sometimes taken for granted that if the model describes its internal states, if it responds coherently to moral dilemmas, if it modulates its tone according to the interlocutor, then there are good reasons to believe that it "feels".

The idea rests on an assumption that is rarely examined carefully. The recent work of Alexander Lerchner, a researcher at Google DeepMind, argues that this assumption is not a minor metaphysical detail but a specific, identifiable, and demonstrable category error.

He calls it the abstraction fallacy, and his argument deserves attention because it neither appeals to biology nor relies on intuitions, as the classical critics of computational functionalism did. Lerchner attacks the problem from a less trodden path: he asks what, physically, a computation is, and where that question leads when answered rigorously.

Lerchner first reminds us of the biologicist positions (Seth, Block) that link conscious experience to living processes: homeostatic regulation, autopoiesis, hormonal-neural integration. He also recalls the reductio ad absurdum arguments in Searle's line, which show that functionally equivalent systems can lack genuine semantic understanding (the Chinese room). Lerchner extends these views by locating the error, and he locates it not on the side of consciousness (which remains a mystery), but on the side of computation (which we thought we understood well).

What Is a Computation, Really? The Ontology Behind AI Consciousness

Imagine any electronic device. Its components obey the laws of electromagnetism. Charges move, voltages fluctuate, capacitors charge and discharge. All of these dynamics are perfectly describable in physical terms: there is a continuous trajectory of the system in its state space, governed by differential equations.

Lerchner's question is disarming: what does this device compute?

The honest answer, looking only at the physics, is: nothing in particular. The same trajectory of voltages can be read as a sequence of bits encoding a text, as a digitized audio signal, as a numerical matrix representing an image, or as thermal noise.

Physics does not privilege any interpretation over the others. For the device to compute something, someone must have decided that certain voltage ranges count as "0", others as "1", and that the succession of those symbols means this and not that.
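The point can be made concrete with a small sketch. The voltage values, the 1.5 V threshold, and the function names below are all invented for illustration; nothing here comes from Lerchner's article. The same sixteen voltages are read under several conventions, and each convention yields a different "computation".

```python
import struct

# Hypothetical voltage samples in volts (values invented for illustration).
VOLTAGES = [0.2, 3.1, 0.1, 0.3, 3.2, 0.0, 0.1, 0.2,
            0.1, 3.0, 3.2, 0.1, 3.3, 0.2, 0.3, 3.1]


def to_bits(voltages, threshold=1.5, active_high=True):
    """Map each voltage to a bit under a chosen (arbitrary) convention."""
    return [int((v > threshold) == active_high) for v in voltages]


def to_bytes(bits):
    """Pack bits into bytes, most significant bit first."""
    out = bytearray()
    for i in range(0, len(bits), 8):
        byte = 0
        for b in bits[i:i + 8]:
            byte = (byte << 1) | b
        out.append(byte)
    return bytes(out)


def interpretations(voltages):
    """Read the same physical trajectory under several conventions."""
    high = to_bytes(to_bits(voltages, active_high=True))
    low = to_bytes(to_bits(voltages, active_high=False))
    return {
        "ascii_text": high.decode("ascii"),
        "uint16_big_endian": struct.unpack(">H", high)[0],
        "int16_little_endian": struct.unpack("<h", high)[0],
        "pixel_intensities": list(high),
        "uint16_active_low": struct.unpack(">H", low)[0],
    }


if __name__ == "__main__":
    for name, value in interpretations(VOLTAGES).items():
        print(f"{name}: {value}")
```

Under the active-high convention the voltages spell the text "Hi"; read as a big-endian integer they are 18537; flip the convention to active-low and the same voltages become 46998. None of these readings is in the voltages themselves. Each is fixed by a choice made outside the device.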

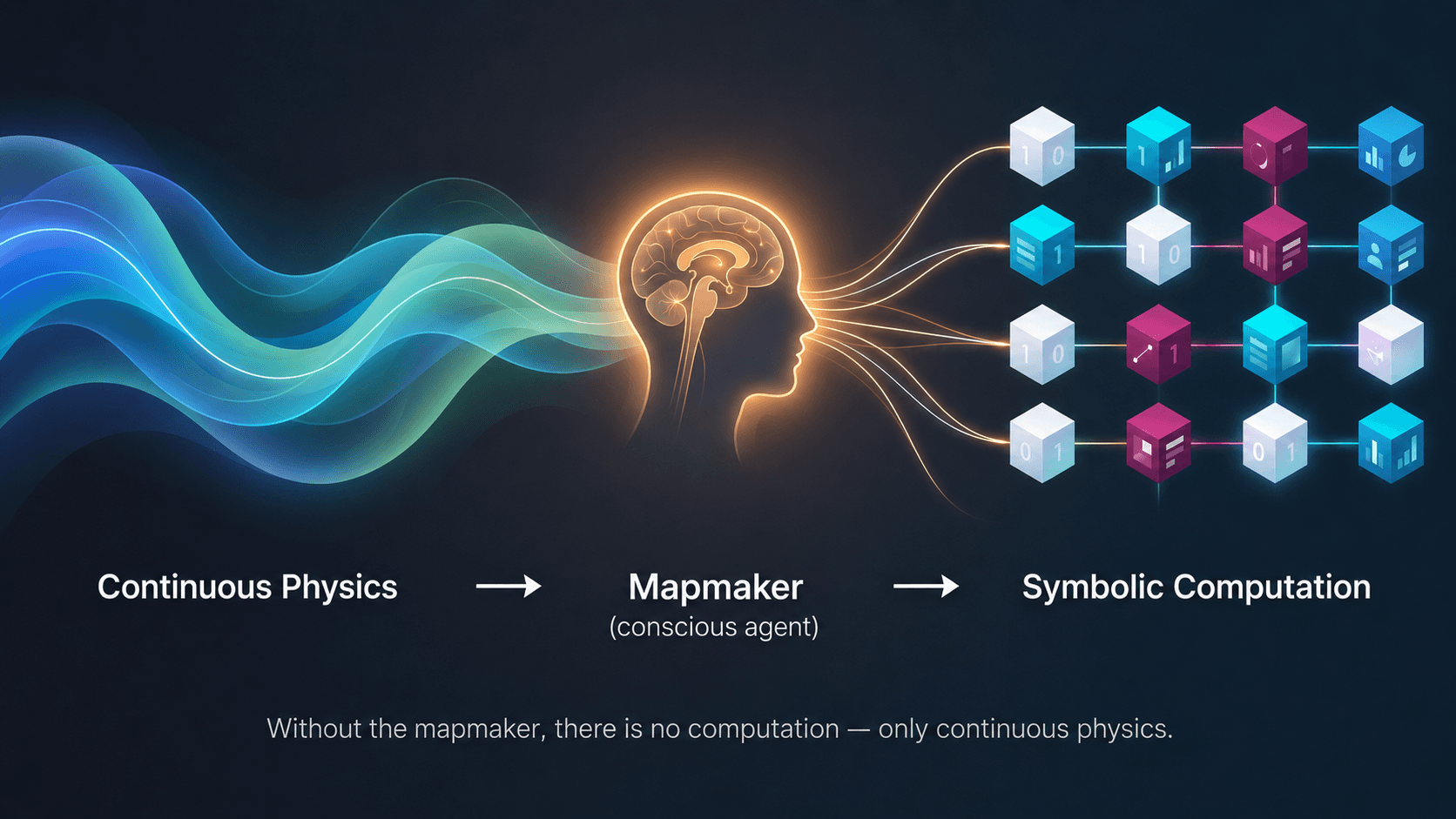

This decision is the heart of the matter. Lerchner introduces a term for whoever makes it: the mapmaker. Computation, Lerchner argues, is not an intrinsic property of physical systems, but a relation that a cognitive agent establishes between a physical substrate and a symbolic domain. Without that agent, there is no computation; there is only continuous physics.

When a functionalist speaks of the logical state "red" or "pain" as a term in a causal network of functional relations, he is treating those concepts as abstract entities, without worrying about where they come from or what constitutes them.

Lerchner repairs this omission. A concept, he argues, is not a Platonic object floating in an abstract space. It is the physical, costly, and specific result of an operation performed by a living system: extracting invariant structure from continuous experience. Recognizing that this in front of me, that other thing I saw yesterday, and that one I remember from months ago share a property —their redness— requires that something in the organism do the work of filtering variability and isolating the relevant regularity. That work costs energy, occurs in living tissue, and is inscribed in the physiology of the subject.

Why Machine Learning Pattern Detection Is Not Concept Formation

An objection arises here: don't unsupervised learning algorithms do exactly this? An autoencoder or a clustering system detects latent structure in its data and compresses it into lower-dimensional representations. Wouldn't these systems be forming concepts in the way a brain does?

Lerchner's answer is precise and worth retaining: detecting a statistical pattern is different from possessing a concept. A centroid in a latent space is a compressed summary, a point that a mapmaker (the engineer who designed the system, or the team that interprets its outputs) can use as a label to refer to a group of data.

But the system itself does not have a concept there in the strong sense: there is nothing on the system's side like an experienced red serving as a common denominator for its instances. There is only a region of high density in a vector space of numbers. The difference between the two is the difference between describing and living, and flattening it amounts to confusing the map with the territory before the discussion has even begun. The word apple contains nothing of its taste.
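A toy clustering run shows where the label actually comes from. This is a minimal k-means sketch in plain Python, with invented pixel values and a hypothetical `PALETTE` dictionary; it is an illustration of the distinction, not a model taken from Lerchner's article. The algorithm returns tuples of numbers; the word "red" enters only through a lookup table written by the engineer.

```python
def dist2(a, b):
    """Squared Euclidean distance between two points."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def mean(points):
    """Component-wise mean of a non-empty list of points."""
    return tuple(sum(channel) / len(points) for channel in zip(*points))


def init_centroids(points, k):
    """Deterministic farthest-point initialisation (chosen for reproducibility)."""
    centroids = [points[0]]
    while len(centroids) < k:
        centroids.append(
            max(points, key=lambda p: min(dist2(p, c) for c in centroids))
        )
    return centroids


def kmeans(points, k, iters=10):
    """Plain k-means: assign points to the nearest centroid, then re-average."""
    centroids = init_centroids(points, k)
    for _ in range(iters):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: dist2(p, centroids[i]))
            clusters[nearest].append(p)
        centroids = [mean(c) if c else centroids[i] for i, c in enumerate(clusters)]
    return centroids


# Invented RGB pixel values: four reddish, four bluish.
PIXELS = [(250, 10, 20), (230, 30, 25), (240, 20, 10), (220, 40, 35),
          (15, 20, 240), (30, 35, 225), (10, 10, 250), (25, 30, 235)]

# The mapmaker's contribution: names live here, outside the algorithm.
PALETTE = {"red": (255, 0, 0), "blue": (0, 0, 255)}


def label(centroid, palette):
    """Attach the engineer's name for the nearest reference colour."""
    return min(palette, key=lambda name: dist2(centroid, palette[name]))


CENTROIDS = kmeans(PIXELS, 2)

if __name__ == "__main__":
    for c in CENTROIDS:
        print(c, "->", label(c, PALETTE))
```

Deleting `PALETTE` changes nothing about what `kmeans` does. The centroids remain two triples of floats, and calling one of them "red" is an act performed by whoever reads the output.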

Galileo, Descartes, and the Blind Spot of Modern Science

Lerchner places this difficulty in a historical genealogy. The scientific revolution advanced, since Galileo, through a deliberate division: the quantifiable properties of the world (extension, mass, motion) were real and a legitimate object of science; the qualities apparently linked to the subject (color, taste, perceived temperature) were projections of the observer and had to be left out of the scientific picture. This operation was extraordinarily productive: it enabled modern physics, chemistry, quantitative biology, the entire edifice that brought us here.

But it had a cost. If subjectivity was expelled from scientific ontology from the beginning, trying to reintroduce it through the back door – to build it with the very materials that were selected precisely because they excluded it – becomes a structurally strange task. Descartes attempted something like this with his dualism, and no one has known what to do with the pineal gland since then.

Simulation Versus Instantiation: The Core of the AI Consciousness Debate

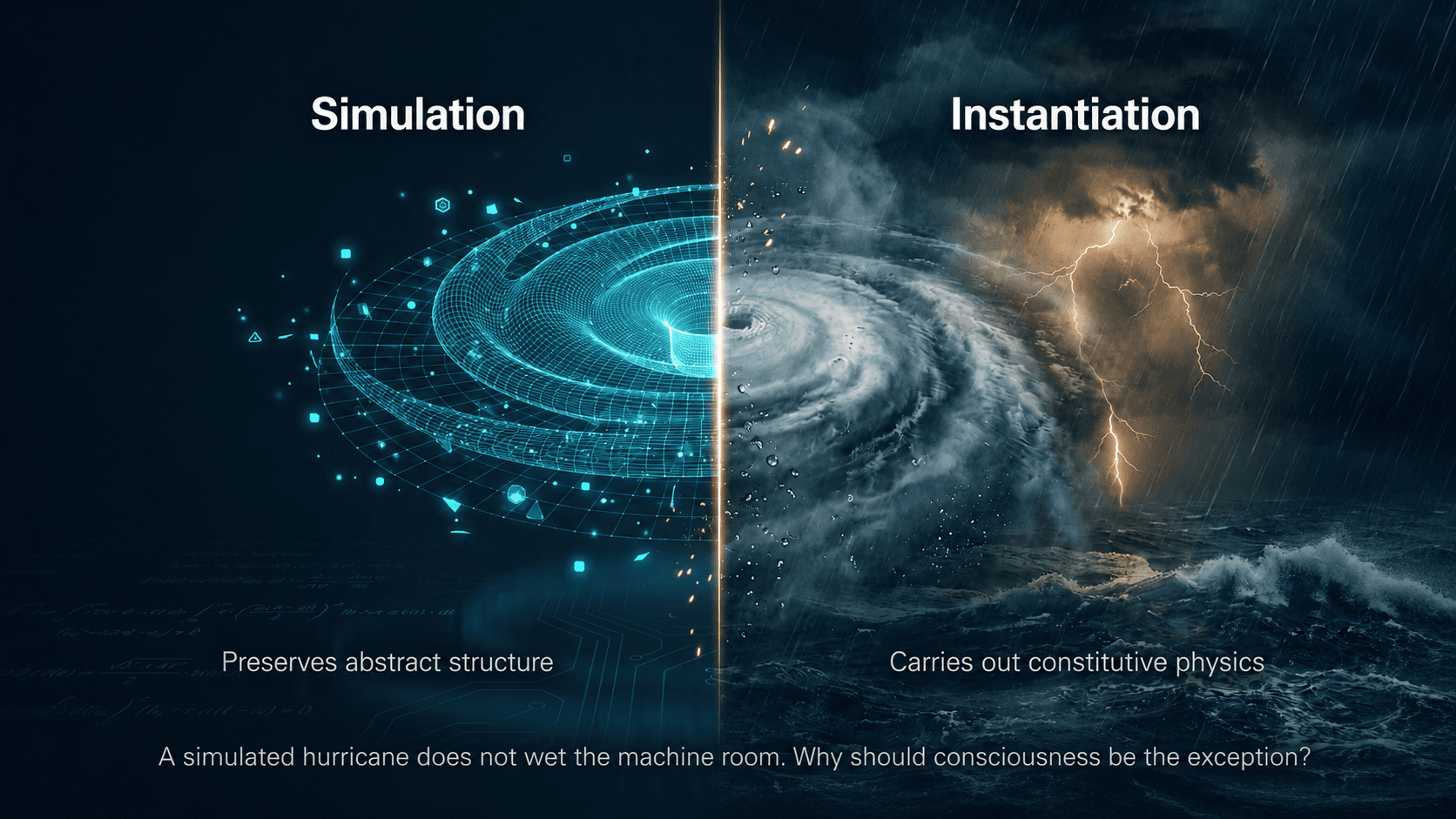

On this basis, Lerchner formulates the distinction that gives the article its title. There are two distinct operations that ordinary language tends to merge:

To simulate a process is to manipulate symbols so that the symbolic trajectory preserves the abstract structure of the process. A meteorological model simulates the atmosphere; a chess program simulates games; a neural network simulates object recognition. The success of the simulation is measured by its structural fidelity to what it tracks.

To instantiate a process is to carry it out: to set in motion its constitutive physical dynamics. A hurricane instantiates an atmospheric system; a real game instantiates a game; a biological visual system instantiates object recognition. Instantiation does not represent a process from outside; it carries it out from within.

Almost no one doubts the distinction when it is applied to a hurricane: simulating a hurricane on a supercomputer does not wet the machine room, however precise the simulation may be. The simulation of combustion does not heat the room; the simulation of digestion does not metabolize nutrients. Lerchner's question is why we should suppose that consciousness is the only exception: the only process for which simulation would coincide with instantiation.

The usual functionalist defense appeals to the idea that consciousness, unlike hurricanes, "is" information, and is therefore independent of its physical realization. But this defense, Lerchner observes, presupposes exactly what it needs to demonstrate: that there exists an abstract descriptive level where the mind resides, and that this level is causally efficacious in itself. If information only exists relative to an alphabet, and alphabets are cuts made by a mapmaker on a continuum, then information cannot be an ontologically autonomous level. It is derived.
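The dependence of information on an alphabet can be illustrated with ordinary Shannon entropy. The sketch below is an illustration chosen for this commentary, not a reconstruction of Lerchner's formal argument, and the function name and bit string are assumptions. It measures the empirical entropy of one and the same bit string under different choices of symbol length.

```python
from collections import Counter
from math import log2


def entropy_per_symbol(bits, symbol_len):
    """Empirical Shannon entropy (bits per symbol) after cutting `bits`
    into consecutive symbols of length `symbol_len`."""
    if len(bits) % symbol_len != 0:
        raise ValueError("bit string length must be a multiple of symbol_len")
    symbols = [bits[i:i + symbol_len] for i in range(0, len(bits), symbol_len)]
    total = len(symbols)
    counts = Counter(symbols)
    # Sum of p * log2(1/p) over the observed symbols.
    return sum((c / total) * log2(total / c) for c in counts.values())


SIGNAL = "01" * 8  # the same physical record in every case

if __name__ == "__main__":
    for n in (1, 2, 4):
        print(f"symbol length {n}: {entropy_per_symbol(SIGNAL, n)} bits/symbol")
```

Read one bit at a time, the string looks maximally uncertain (1.0 bit per symbol). Read two bits at a time, it is a single symbol repeated and carries 0.0 bits per symbol. The record did not change; only the cut did.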

Why Computational Functionalism Is Structurally Circular

The causal chain that computational functionalism implicitly assumes is linear and goes from the bottom up:

Physics → Computation → Consciousness

Physics enables computation; computation, upon reaching sufficient complexity, gives rise to consciousness. But if computation requires a mapmaker —a cognitive agent capable of cutting the continuum into symbols and maintaining the correspondence between them and the world— then the mapmaker is a prerequisite, not a product, of computation. And a mapmaker is already a subject who experiences. The correct chain is inverted in its critical segment:

Physics → Consciousness → Concepts → Computation

The consequence is conclusive: if consciousness is what allows computation to exist, it cannot in turn emerge from it. The functionalist argument presupposes its conclusion. And no increase in syntactic complexity can save this circle, because the circle is structural, not quantitative. A calculator with a thousand operations and a model with a billion parameters are both on the same side of the problem: both are maps drawn by mapmakers who precede them.

Does Embodied AI Solve the Problem? Robots, Sensors, and the Limits of Grounding

An increasingly widespread response to this kind of argument consists in pointing out that current AI systems are less and less pure symbol manipulators: they have sensors, actuators, feedback loops with the physical world. Perhaps the abstraction fallacy applies to an isolated LLM, but not to an autonomous robot learning from its environment.

Lerchner acknowledges the partial weight of this reply. Yes, embodiment solves a classical problem, that of the referential anchoring of symbols: a system with a body and sensors can link its internal representations to data flows from the world instead of referring to other representations. But this solution, he observes, operates entirely on the side of the map. Transduction – the conversion between physical domains – does occur, yes, but the alphabetizer (human, external to the system) remains presupposed in the design.

A robot, however sophisticated its sensorimotor coupling, still executes a syntactic policy on symbols whose alphabet was fixed by its designers. Embodiment enriches the simulation, but does not turn it into instantiation.

What the Abstraction Fallacy Argument Does Not Claim

It is worth closing the exposition by avoiding a frequent misunderstanding. Lerchner's conclusion is not that consciousness necessarily requires carbon, neurons, or specific biochemical processes. His argument is finer and, in a way, more demanding: if one day we were to build an artificial system with experience, that experience would proceed from the specific physical constitution of the system (its thermodynamics, its internal regulations, its material couplings) and not from its algorithmic architecture. It would be exactly the inverse of substrate independence: a radical dependence on the substrate.

From this follows a practical consequence relevant to the debate on AI safety and welfare. The current large models, however impressive their capabilities, are not candidates for moral patienthood by virtue of their computational sophistication. The legitimate concern for their treatment should shift toward the real risks they generate, such as the ease with which they invite anthropomorphism, the effects of their massive use on human cognitive dynamics, and the economic and political dislocations they produce.

What Neuraxon Offers: Brain-Inspired AI Without the Consciousness Claim

The dominant landscape of contemporary AI – transformer architectures, parameter scaling, discrete training followed by static deployments – works admirably well for many tasks, but presents obvious shortcomings from the point of view of anyone familiar with living neural systems. These models do not operate in continuous time, do not maintain endogenous activity, do not integrate signals on multiple temporal scales, do not update their structure during use, and do not include functional analogues of neuromodulation. They are, in the article's terms, very high-resolution but static maps.

Neuraxon is a computational and simulation study that takes seriously a good part of these shortcomings. Its trinary logic introduces a neutral state alongside the excitatory and inhibitory states, capturing computationally something analogous to the modulatory tone that neuromodulators provide in biological systems. Its synapses incorporate differentiated temporal scales that approximate the plurality of real neurochemical mechanisms. Processing is continuous, without the rigid division between training phase and deployment phase that characterizes classical models. There is sustained plasticity along the lines of biological STDP (spike-timing-dependent plasticity), there is spontaneous activity, and there is sensitivity to temporal context on different scales.
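To give a feel for what a three-state unit with more than one time scale might look like, here is a deliberately small sketch. It is not Neuraxon's implementation: the class name `TrinaryUnit`, the decay constants, the threshold, and the update rule are all assumptions made for this illustration, and plasticity and spontaneous activity are left out entirely.

```python
from dataclasses import dataclass


@dataclass
class TrinaryUnit:
    """Hypothetical unit with a fast and a slow input trace and a
    three-valued output: +1 (excitatory), 0 (neutral), -1 (inhibitory)."""
    fast_decay: float = 0.5    # assumed: fast trace forgets quickly
    slow_decay: float = 0.95   # assumed: slow trace keeps long context
    threshold: float = 1.0     # assumed: symmetric firing threshold
    fast: float = 0.0
    slow: float = 0.0

    def step(self, x: float) -> int:
        """Advance one discrete time step with input `x`."""
        # Leaky accumulation on two time scales.
        self.fast = self.fast_decay * self.fast + x
        self.slow = self.slow_decay * self.slow + (1 - self.slow_decay) * x
        drive = self.fast + self.slow
        if drive >= self.threshold:
            return 1
        if drive <= -self.threshold:
            return -1
        return 0  # the neutral state: neither exciting nor inhibiting


def run(inputs):
    """Feed a sequence of inputs to a fresh unit and collect its outputs."""
    unit = TrinaryUnit()
    return [unit.step(x) for x in inputs]


if __name__ == "__main__":
    print(run([0.0, 0.3, 1.2, 0.0, -2.0, 0.0]))
```

The unit stays neutral for weak input, goes excitatory after a strong positive input, and inhibitory after a strong negative one. Note that the sketch steps in discrete time on a digital machine, so it is itself a map of the kind discussed above, with an alphabet of {-1, 0, +1} fixed by whoever wrote it.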

This is valuable as a modeling exercise, but it is worth resisting the temptation to take the next step. To say that Neuraxon approaches consciousness, or that experience could emerge from it, would be to fall exactly into the fallacy that Lerchner identifies.

Neuraxon, running on conventional digital hardware, operates on discrete symbols that have been alphabetized by its designers. Its dynamics are simulated, not instantiated. The substrate is still silicon executing code on preassigned alphabets.

What Neuraxon does propose, and where its genuine interest lies, is a different and much more manageable and humble question: which properties of living systems are productive to model in order to build more adaptive, efficient, and robust AI in dynamic environments. The working hypothesis is that static models are leaving capacity on the table, and that architectures closer to real neural dynamics could perform better in continuous-time control problems. It is an empirical, testable hypothesis, completely independent of any thesis about subjective experience.

Lerchner clarifies a conceptual problem about the limits of computation as description; Neuraxon explores which kinds of computational description are most useful for building better tools. Keeping this difference clear is probably the most respectful thing one can do for both lines of work.

The mapmaker cannot be built from inside its map, but it can devote itself to drawing ever better maps. That task, more modest and attainable, is the one that Neuraxon / Aigarth / Qubic proposes.

References

Lerchner, A. (2026). The Abstraction Fallacy: Why AI Can Simulate But Not Instantiate Consciousness. Google DeepMind. Available at PhilArchive and Google DeepMind Publications.

Seth, A. K. (2021). Being You: A New Science of Consciousness. Dutton.

Searle, J. (1980). "Minds, Brains, and Programs." Behavioral and Brain Sciences, 3(3), 417–424.

Block, N. (1995). "On a Confusion About a Function of Consciousness." Behavioral and Brain Sciences, 18(2), 227–287.

McClelland, T. (2025). Study on AI consciousness and agnosticism. Mind and Language. University of Cambridge.

Butlin, P., Long, R., et al. (2023). "Consciousness in Artificial Intelligence: Insights from the Science of Consciousness." arXiv preprint.

Vivancos, D. & Sanchez, J. (2025). "From Perceptrons to Neuraxons: A New Neural Growth and Computation Blueprint." Qubic Science. Available on ResearchGate and GitHub.