QUBIC BLOG POST

Conscious Machines, Intelligent Organisms: The Science Behind AI Consciousness

Written by Qubic Scientific Team

Published: Apr 7, 2026

Conversations about AI quickly drift toward a very specific idea: feeling machines, thinking machines, machines that awaken. But these ideas conflate intelligence and consciousness.

Intelligence, as we explained in our first scientific paper, is the general ability to solve problems, adapt, make decisions, and learn. An intelligent system builds models of the environment and acts upon them. This capacity can be measured and formalized. In fact, both biological and artificial intelligence can be described as processes of inference and optimization under uncertainty (Sutton & Barto, 2018).

Consciousness, on the other hand, is not about what a system does, but about what it experiences. It relates to inner, private, subjective experience. As Thomas Nagel famously put it: “What is it like to be a bat?” (Nagel, 1974). Here lies the fundamental difference: intelligence can be observed from the outside, but consciousness is only accessible from within.

Popular culture has mixed both concepts. We imagine artificial general intelligence as something like the machines of Terminator, I, Robot, or 2001: A Space Odyssey, often projecting deep human fears about technology, novelty, and the unknown. But the fear is not about systems solving problems better than us. That scenario already exists and does not generate real concern. Think of AlphaGo surpassing human champions in Go, AlphaFold accelerating protein discovery, or models like GPT-4 and Claude generating text, code, and algorithms at levels comparable to, or beyond, those of their creators.

Fear appears when these systems seem to exhibit agency, intention, or something resembling self-will. In other words, when they appear to have some form of machine consciousness.

This distinction is central in cognitive science. Systems that process information are fundamentally different from systems that access information in a globally integrated way (Dehaene, Kerszberg, & Changeux, 1998).

AI Consciousness and Science: Beyond the Hard Problem

Despite the current hype around “quantum”, religious, or pseudoscientific explanations of consciousness, science provides a more grounded path. There is a well-known “hard problem of consciousness,” as Chalmers formulated it roughly three decades ago: we still do not understand how a physical nervous system generates subjective experience.

Put simply: we know how neurons activate to encode the blue of the sky or the smell of sandalwood. But we do not understand how these neural activations produce the experience of seeing blue or smelling sandalwood. That gap remains.

This explanatory gap leaves room for dualistic interpretations. Neuroscience, however, continues to operate within an integrated view of mind and matter.

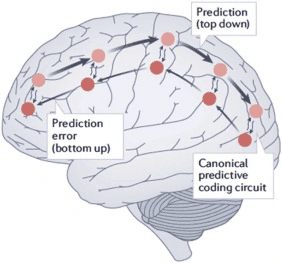

Predictive Coding: The Brain as a Prediction Machine

Predictive coding is one of the most influential frameworks for studying consciousness. The brain operates as a predictive system that continuously generates models of the world and updates them by minimizing prediction errors (Friston, 2010; Clark, 2013). If a traffic light suddenly turns blue instead of green, sensory systems send that unexpected signal upward, and higher-level systems update the internal model of how traffic lights behave. Within this framework, consciousness can be understood as the integration of internal and external signals into a coherent representation.

Fig. 5, Mudrik et al. (2025). Predictive Processing as hierarchical inference. CC BY 4.0.

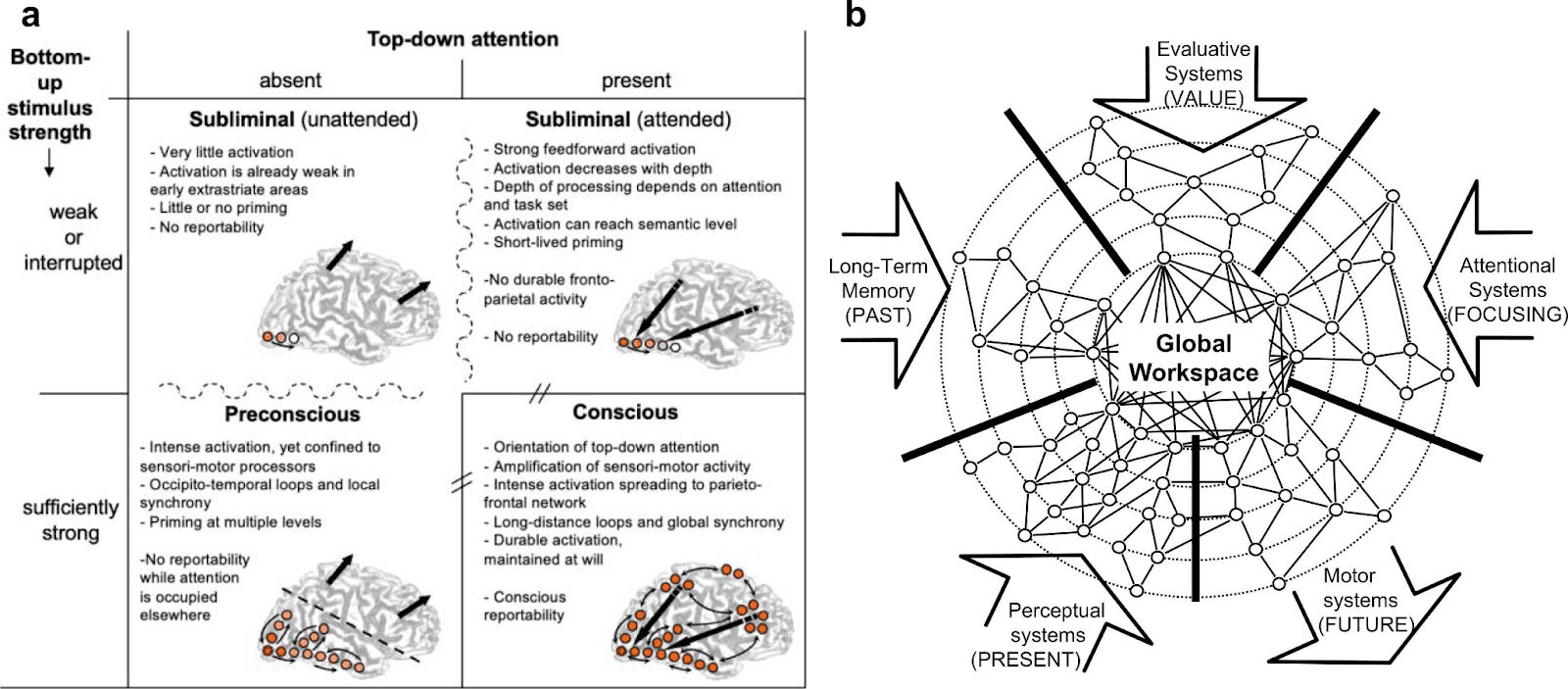
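To make the prediction-error loop concrete, here is a minimal Python sketch. It is not the free-energy formulation from the cited work: it assumes a single scalar belief, a fixed learning rate, and a hypothetical hue encoding (green near 120, blue near 240) chosen only to mirror the traffic-light example.

```python
# Minimal prediction-error sketch (illustrative assumptions throughout).
# The belief is a single number: the hue the model expects for "go".

def update_belief(belief, observation, learning_rate=0.2):
    """Shift the belief toward the observation by a fraction of the error."""
    prediction_error = observation - belief
    new_belief = belief + learning_rate * prediction_error
    return new_belief, prediction_error


def run(belief, observations, learning_rate=0.2):
    """Apply the update once per observation and record the absolute errors."""
    errors = []
    for obs in observations:
        belief, err = update_belief(belief, obs, learning_rate)
        errors.append(abs(err))
    return belief, errors


# Hypothetical encoding: the model expects green (120) but keeps seeing blue (240).
belief, errors = run(120.0, [240.0] * 30)
print(f"first error: {errors[0]:.1f}, last error: {errors[-1]:.3f}")
print(f"updated belief: {belief:.2f}")
```

The first observation produces a large error; as the belief is revised, each repeated observation is less surprising. Hierarchical versions of this idea stack several such levels, with each level predicting the activity of the one below.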

Global Workspace Theory: How Consciousness Emerges Through Information Broadcasting

Another influential proposal is Global Workspace Theory. Here, consciousness emerges when information becomes globally available across the system, allowing multiple processes to access and use it simultaneously (Baars, 1988; Dehaene & Changeux, 2011). Not all processing is conscious; only what reaches this global broadcasting level.

Fig. 1, Mudrik et al. (2025). Global Workspace model of conscious access, adapted from Dehaene et al. (2006). CC BY 4.0.

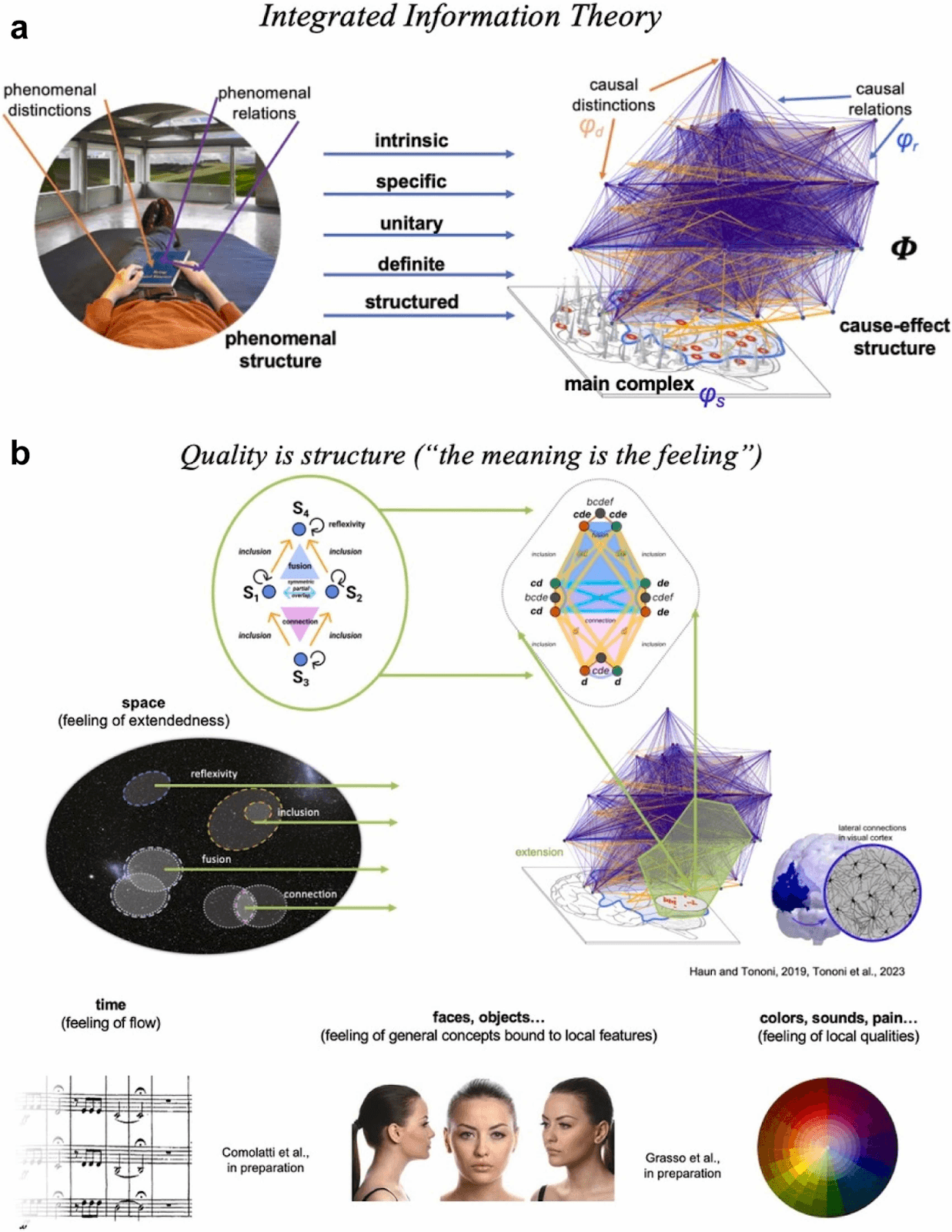
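The broadcasting idea can be illustrated with a toy model. The sketch below is not taken from the cited papers: the names `Module` and `broadcast_cycle`, the scalar salience score, and the fixed ignition threshold are all assumptions made for illustration.

```python
# Toy global-workspace cycle (hypothetical names and parameters).
from dataclasses import dataclass, field


@dataclass
class Module:
    """A specialist process that can receive broadcast content."""
    name: str
    inbox: list = field(default_factory=list)

    def receive(self, message):
        self.inbox.append(message)


def broadcast_cycle(modules, proposals, threshold=0.5):
    """Pick the most salient proposal; broadcast it only if it passes the threshold.

    proposals maps a module name to a (content, salience) pair.
    Returns the broadcast content, or None if nothing reached the workspace.
    """
    if not proposals:
        return None
    source, (content, salience) = max(proposals.items(), key=lambda kv: kv[1][1])
    if salience < threshold:
        return None  # processing stays local to the proposing module
    for module in modules:
        module.receive((source, content))
    return content


modules = [Module("vision"), Module("audition"), Module("memory")]
winner = broadcast_cycle(
    modules,
    {"vision": ("blue light", 0.9), "audition": ("engine hum", 0.3)},
)
print(winner, [m.inbox for m in modules])
```

In this sketch, only the winning content becomes available to every module at once; the weaker proposal is still processed by its own module but never reaches the shared workspace.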

Integrated Information Theory (IIT): Measuring Consciousness

Integrated Information Theory, developed by Giulio Tononi, proposes that consciousness depends on how much a system integrates information in an irreducible way (Tononi, 2004; Tononi et al., 2016). The more integrated the system, the higher its level of consciousness.

Fig. 4, Mudrik et al. (2025). IIT maps phenomenal properties to physical cause-effect structures. CC BY 4.0.
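Computing integrated information as IIT defines it requires analysing cause-effect structures across the partitions of a system, which does not fit in a short example. As a much cruder illustration of the underlying intuition, that an integrated whole carries information its parts do not carry independently, the sketch below computes the mutual information between two binary units. This is a stand-in chosen for illustration, not an IIT measure, and the two joint distributions are made-up examples.

```python
# Mutual information between two units as a crude stand-in for "integration".
# This is NOT the integrated-information measure of IIT; it is an assumption-laden toy.
import math


def mutual_information(joint):
    """Return I(A;B) in bits, where joint[a][b] = P(A=a, B=b)."""
    p_a = [sum(row) for row in joint]
    p_b = [sum(col) for col in zip(*joint)]
    total = 0.0
    for a, row in enumerate(joint):
        for b, p in enumerate(row):
            if p > 0:
                total += p * math.log2(p / (p_a[a] * p_b[b]))
    return total


# Two units that ignore each other versus two units that always agree.
independent = [[0.25, 0.25], [0.25, 0.25]]
coupled = [[0.5, 0.0], [0.0, 0.5]]

print(mutual_information(independent))
print(mutual_information(coupled))
```

The independent pair shares no information, so cutting the system in two loses nothing. The coupled pair shares one full bit, so the whole cannot be described as two separate parts without loss.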

Alongside these scientific theories, there are less empirically grounded proposals. Some equate consciousness with computational complexity, without specifying mechanisms. Others, such as panpsychism, suggest that all matter has some form of experience (Goff, 2019). These ideas broaden the debate but lack direct experimental validation.

Can We Compute Consciousness? Simulation vs. Experience

Does implementing the mechanisms described by these theories generate consciousness, or only simulate it?

This problem mirrors what we encounter in neuroscience when studying simple organisms. For example, Drosophila melanogaster has a relatively small nervous system, yet it can learn, remember, and make decisions (Brembs, 2013). Modeling its connectivity and dynamics allows us to predict its behavior in certain contexts. For a deeper look at how the fruit fly connectome is reshaping our understanding of neural architecture, see our analysis of the Drosophila brain connectome and its implications for AI.

However, predicting behavior does not imply reproducing internal experience. We can capture the rules of a system without capturing what it “feels like” from the inside, if such experience exists at all. This distinction remains one of the main conceptual limits in consciousness research (Seth, 2021). From a practical perspective, this may not always be critical, but we cannot assume that computing a system’s mechanisms recreates its experience. This leads directly to the well-known idea of philosophical zombies: hypothetical beings that behave exactly like conscious ones but have no inner experience.

MultiNeuraxon Architecture: What Brain-Inspired AI Actually Does

In this context, architectures like MultiNeuraxon do not aim to “create consciousness”, but to approximate mechanisms that some theories consider relevant.

The system introduces continuous-time dynamics, allowing internal states to evolve smoothly instead of resetting at each step. This resembles the notion of a continuous internal flow found in biological systems (Friston, 2010). To understand why continuous-time processing matters for intelligence, see NIA Volume 1: Why Intelligence Is Not Computed in Steps, but in Time.
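As a generic illustration of state that evolves smoothly instead of resetting, consider a leaky integrator advanced with small Euler steps. This is a textbook construction, not the MultiNeuraxon equations; the time constant `tau`, the step size `dt`, and the input pulse are assumptions.

```python
# Leaky integrator: dx/dt = (-x + drive) / tau, advanced with Euler steps.
# Generic sketch; parameter values are illustrative assumptions.

def euler_step(state, drive, dt=0.01, tau=0.1):
    """Advance the state by one small time step."""
    return state + dt * (-state + drive) / tau


def simulate(inputs, dt=0.01, tau=0.1, state=0.0):
    """Carry the state across steps instead of resetting it each time."""
    trajectory = []
    for drive in inputs:
        state = euler_step(state, drive, dt, tau)
        trajectory.append(state)
    return trajectory


# A brief input pulse followed by silence.
inputs = [1.0] * 20 + [0.0] * 20
trajectory = simulate(inputs)
print(f"end of pulse: {trajectory[19]:.3f}, end of silence: {trajectory[-1]:.3f}")
```

After the input stops, the state decays gradually rather than dropping to zero, so recent history keeps shaping what the system does next.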

It also incorporates multiple interaction timescales (fast, slow, and modulatory), similar to the combination of synaptic signaling and neuromodulation in the brain (Marder, 2012). These dynamics are formally described through equations that integrate synaptic and modulatory contributions into the system’s state evolution.
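The actual equations are not reproduced in this post. Purely to illustrate what combining fast, slow, and modulatory contributions can look like, the sketch below tracks three leaky traces with different time constants and lets the slowest one scale the output. The function name, the time constants, and the multiplicative gain are all hypothetical choices, not the published model.

```python
# Three traces on different timescales (hypothetical form, not the published equations).

def timescale_step(state, inp, dt=0.01, tau_fast=0.02, tau_slow=0.5, tau_mod=5.0):
    """Advance fast, slow, and modulatory traces by one Euler step."""
    fast, slow, mod = state
    fast += dt * (-fast + inp) / tau_fast      # rapid synaptic-like response
    slow += dt * (-slow + inp) / tau_slow      # slower synaptic-like response
    mod += dt * (-mod + abs(inp)) / tau_mod    # very slow modulatory level
    output = (1.0 + mod) * (fast + slow)       # modulation acts as a gain
    return (fast, slow, mod), output


state = (0.0, 0.0, 0.0)
for _ in range(100):
    state, output = timescale_step(state, 1.0)

fast, slow, mod = state
print(f"fast={fast:.3f} slow={slow:.3f} mod={mod:.3f} output={output:.3f}")
```

Under a constant input, the fast trace settles almost immediately, the slow trace lags behind it, and the modulatory trace has barely started to rise, which is the separation of timescales the paragraph above describes.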

Finally, its organization into multiple functional spheres enables both differentiation and integration. This type of structure underlies both Global Workspace Theory and Integrated Information Theory, and forms part of the scientific proposal we have been developing for AGI Conference 2026.

What matters at this stage is that the system begins to capture properties associated, in humans, with conscious processes: global integration, temporal continuity, and internal regulation.

Why Consciousness Research Matters for Artificial General Intelligence

The development of artificial general intelligence does not depend solely on improving performance in isolated tasks. It depends on understanding how intelligence organizes itself when it operates flexibly, stably, and coherently.

Theories of consciousness point precisely to these mechanisms: integration, global access, internal models, and multiscale regulation. Even if we are far from recreating subjective experience, we can identify and compute properties that seem necessary for more general forms of intelligence.

Working in this direction allows the construction of more robust systems, capable of maintaining coherence over time and generalizing across contexts.

Within this framework, the advantage of systems like Aigarth does not lie in creating conscious machines, or in casting them as a “good Terminator”, but in understanding and controlling the mechanisms that organize advanced intelligence.

A system that integrates multiple scales, maintains dynamic stability, and evolves without losing coherence provides a much stronger foundation for exploring advanced forms of intelligence. For a comparison of how biological neural networks, classical artificial networks, and Neuraxon differ architecturally, see NIA Volume 4: Neural Networks in AI and Neuroscience.

If more complex properties or forms of self-reference emerge, they will not appear by accident, but as a consequence of structures that can already be described and analyzed formally.

And that transforms consciousness from a purely speculative problem into something that can be systematically investigated.

Scientific References

Baars, B. J. (1988). A cognitive theory of consciousness. Cambridge University Press.

Brembs, B. (2013). Structure and function of information processing in the fruit fly brain. Frontiers in Behavioral Neuroscience, 7, 1–17.

Clark, A. (2013). Whatever next? Predictive brains, situated agents, and the future of cognitive science. Behavioral and Brain Sciences, 36(3), 181–204.

Dehaene, S., & Changeux, J. P. (2011). Experimental and theoretical approaches to conscious processing. Neuron, 70(2), 200–227.

Dehaene, S., Kerszberg, M., & Changeux, J. P. (1998). A neuronal model of a global workspace in effortful cognitive tasks. PNAS, 95(24), 14529–14534.

Friston, K. (2010). The free-energy principle: A unified brain theory? Nature Reviews Neuroscience, 11(2), 127–138.

Goff, P. (2019). Galileo’s error: Foundations for a new science of consciousness. Pantheon.

Marder, E. (2012). Neuromodulation of neuronal circuits: Back to the future. Neuron, 76(1), 1–11.

Mudrik, L., Boly, M., Dehaene, S., Fleming, S. M., Lamme, V., Seth, A., & Melloni, L. (2025). Unpacking the complexities of consciousness: Theories and reflections. Neuroscience and Biobehavioral Reviews, 170, 106053.

Nagel, T. (1974). What is it like to be a bat? The Philosophical Review, 83(4), 435–450.

Seth, A. (2021). Being you: A new science of consciousness. Faber & Faber.

Seth, A. K., & Bayne, T. (2022). Theories of consciousness. Nature Reviews Neuroscience, 23(7), 439–452.

Sutton, R. S., & Barto, A. G. (2018). Reinforcement learning: An introduction (2nd ed.). MIT Press.

Tononi, G. (2004). An information integration theory of consciousness. BMC Neuroscience, 5(42).

Tononi, G., Boly, M., Massimini, M., & Koch, C. (2016). Integrated information theory: From consciousness to its physical substrate. Nature Reviews Neuroscience, 17(7), 450–461.

Explore the Full Neuraxon Intelligence Academy Series

NIA Volume 1: Why Intelligence Is Not Computed in Steps, but in Time. Explores why biological intelligence operates in continuous time rather than discrete computational steps like traditional LLMs.

NIA Volume 2: Ternary Dynamics as a Model of Living Intelligence. Explains ternary dynamics and why three-state logic (excitatory, neutral, inhibitory) matters for modeling living systems.

NIA Volume 3: Neuromodulation and Brain-Inspired AI. Covers neuromodulation and how the brain’s chemical signaling inspires Neuraxon’s architecture.

NIA Volume 4: Neural Networks in AI and Neuroscience. A deep comparison of biological neural networks, artificial neural networks, and Neuraxon’s third-path approach.

NIA Volume 5: Astrocytes and Brain-Inspired AI. How astrocytic gating transforms neural network plasticity through the AGMP framework in Neuraxon.