QUBIC BLOG POST

Fruit Fly Connectome, Brain Architecture, and Computation: From the Drosophila Connectome to QUBIC

Written by

Qubic Scientific Team

Published:

Mar 30, 2026


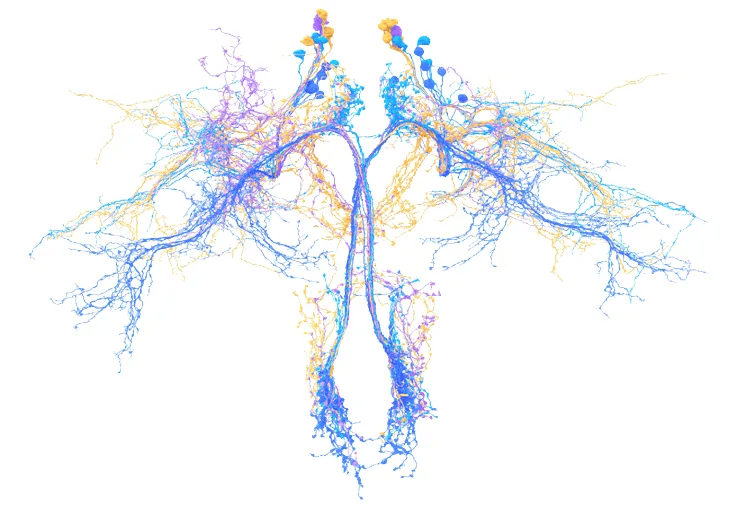

Credit: Amy Sterling, Murthy and Seung Labs, Princeton University

Imagine a building with thirty people. Knowing how many there are tells us little. What really explains what happens is who depends on whom: who is a son, a father, a wife or a husband; who coordinates the building; who presides over the residents' association; who is the doorman, the delivery person, the owner or the tenant. The dynamics of the group lie not in the number but in the structure of relationships. That is the essence of the social brain that we are.

In the brain, the connectome plays the same role as this web of relationships: it is a complete description of the structure of connections between neurons. The key is not the map itself, but understanding what kinds of dynamics can emerge from it once it is activated. In the building, the equivalent question is what happens when a family's son moves to another city, when a couple separates and an apartment becomes available, when the president changes or when new neighbors arrive. To study this biologically, scientists map the connectomes of organisms far simpler than Homo sapiens. In a recent paper (Shiu et al., 2024), researchers analyze the connectome of the fruit fly, Drosophila melanogaster.

The underlying idea is profound: in biological systems, part of intelligence is not learned but already contained in the architecture. This concept, known as strong architectural priors, challenges the prevailing AI paradigm, which relies almost entirely on learning from data.

The Complete Fruit Fly Brain Connectome: A Landmark in Neural Circuit Mapping

The complete connectome of the fly brain, more than 125,000 neurons and around 50 million synapses, is not only a technical achievement, but a new computational unit of analysis (Shiu et al., 2024). For the first time, we can study a complete nervous system as an almost closed functional graph. The FlyWire project, a Princeton-led consortium of over 200 researchers across 127 institutions, made this whole-brain connectome possible through a combination of AI-assisted segmentation, citizen science, and expert proofreading.
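To make the idea of a "functional graph" concrete, here is a minimal Python sketch of one way a connectome could be stored as a signed, weighted adjacency matrix. The edge-list layout, the neuron labels and the `build_signed_weights` helper are hypothetical and do not reflect the FlyWire data format. We also assume, as a common simplification for the fly brain, that acetylcholine is excitatory while GABA and glutamate are inhibitory, and that connection strength is proportional to synapse count.

```python
import numpy as np

# Assumed sign convention (a common simplification for the fly brain):
# acetylcholine excites, GABA and glutamate inhibit.
NT_SIGN = {"ACh": 1.0, "GABA": -1.0, "Glu": -1.0}


def build_signed_weights(n_neurons, synapses):
    """Return W with W[post, pre] = signed synapse count from pre onto post.

    `synapses` is an iterable of (pre, post, count, neurotransmitter) tuples;
    a connection's strength is taken to be proportional to its synapse count.
    """
    W = np.zeros((n_neurons, n_neurons))
    for pre, post, count, nt in synapses:
        W[post, pre] += NT_SIGN[nt] * count
    return W


# Hypothetical edge list: 0 = gustatory neuron, 1 = excitatory interneuron,
# 2 = inhibitory interneuron, 3 = motor neuron.
toy_synapses = [
    (0, 1, 12, "ACh"),
    (0, 2, 4, "ACh"),
    (1, 3, 9, "ACh"),
    (2, 3, 5, "GABA"),
]
W = build_signed_weights(4, toy_synapses)
print(W)
```

At the real scale of more than 125,000 neurons, a dense float64 matrix would need about 125 GB. A sparse representation is the practical choice, for example `scipy.sparse.coo_matrix` built from the same edge list. The signing logic stays the same.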

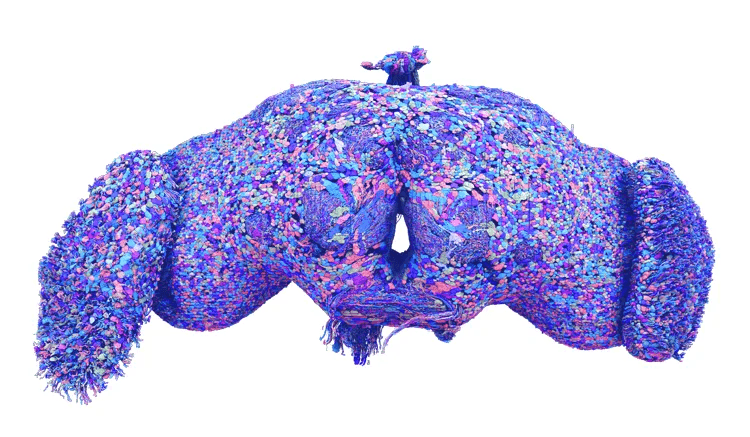

Credit: Tyler Sloan for FlyWire and Amy Sterling, Murthy and Seung Labs, Princeton University

Spiking Neural Network Model: How Connectivity Drives Sensorimotor Computation

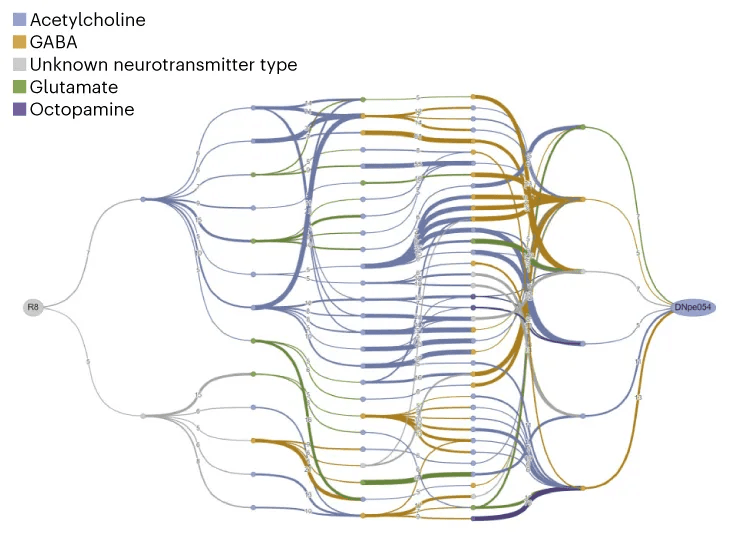

On top of that graph, the authors build a very simple model. They construct a network of neurons (leaky integrate-and-fire type) where activity propagates according to synaptic connectivity and the type of neurotransmitter (Gerstner et al., 2014; Shiu et al., 2024). No training is needed. The spiking neural network does not “learn” in the classical sense, but executes what its structure allows. Similar to the building example, where the functions and connections between members of the community guide and preconfigure their behaviors.

Credit: Amy Sterling, Murthy and Seung Labs, Princeton University

The researchers' model can predict entire sensorimotor pathways. When they activate gustatory (taste) neurons in the model, it anticipates which downstream neurons will become active, all the way to motor neurons. These predictions were validated experimentally using optogenetics, a technique that controls specific neurons with light (Shiu et al., 2024). In other words, function emerges directly from architecture: by stimulating the neurons that detect taste, the researchers can predict how the fly will respond. Connectivity is not only a support for computation; it is also computation (Bargmann & Marder, 2013).
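A toy simulation can illustrate the idea of a training-free network whose behavior follows from its wiring. This is a minimal sketch in the spirit of the model, not the authors' code; their simulation is far larger and more detailed. The four-neuron circuit, the parameter values and the constant stimulus are all our assumptions. So is the simplification that each presynaptic spike instantly shifts the postsynaptic voltage. As in the paper, there is no spontaneous activity: neurons rest at zero unless driven.

```python
import numpy as np


def simulate_lif(W, i_ext, t_max=200.0, dt=0.1, tau_m=20.0,
                 v_th=1.0, w_scale=0.1):
    """Simulate a fixed-weight leaky integrate-and-fire network.

    W[post, pre] holds signed synapse counts; each presynaptic spike shifts
    the postsynaptic membrane potential by w_scale * W[post, pre] on the
    next time step. i_ext is a constant external drive per neuron (threshold
    units per ms). Nothing is trained. Returns spike counts per neuron.
    """
    n = W.shape[0]
    v = np.zeros(n)                     # every neuron starts at rest (0)
    spiked = np.zeros(n, dtype=bool)
    counts = np.zeros(n, dtype=int)
    for _ in range(int(round(t_max / dt))):
        v += dt * (-v / tau_m + i_ext)  # leak toward rest plus external drive
        v += w_scale * (W @ spiked.astype(float))  # kicks from last step's spikes
        spiked = v >= v_th
        v[spiked] = 0.0                 # reset neurons that fired
        counts += spiked
    return counts


# Toy circuit: rows are postsynaptic, columns presynaptic neurons.
W = np.array([
    [0.0, 0.0, 0.0, 0.0],   # 0: gustatory (sensory) neuron
    [12.0, 0.0, 0.0, 0.0],  # 1: excitatory interneuron
    [4.0, 0.0, 0.0, 0.0],   # 2: inhibitory interneuron
    [0.0, 9.0, -5.0, 0.0],  # 3: motor neuron
])

stimulated = simulate_lif(W, i_ext=np.array([0.1, 0.0, 0.0, 0.0]))
baseline = simulate_lif(W, i_ext=np.zeros(4))
print("stimulated:", stimulated)
print("no input:  ", baseline)
```

With the stimulus on, activity travels from the sensory neuron through the excitatory interneuron to the motor neuron. With no input, the network stays completely silent, which reflects the zero-baseline assumption discussed later in this post. In this configuration, the inhibitory interneuron receives too little drive to fire, so it does not affect the output.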

Architectural Priors: Intelligence Encoded Before Learning Begins

In biology, brains do not start empty. An organism is born with organized circuits that support functional behavior from the outset. In simple nervous systems such as those of C. elegans or insects, much of the functional dynamics is directly constrained by connectivity (Winding et al., 2023; Scheffer & Meinertzhagen, 2021). When a complete connectome is reconstructed, recurring patterns appear: feedback loops, competitive inhibitory circuits and highly directed sensorimotor pathways. These patterns do not arise from learning during the animal's lifetime. They come from evolutionary processes that have, so to speak, “encoded” solutions into the structure itself.
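Recurring patterns of this kind can be counted directly from a connectivity matrix. The sketch below is a hypothetical helper, not a published analysis pipeline. It counts two of the simplest motifs: reciprocal pairs, which are minimal feedback loops, and mutually inhibitory pairs, which form a basic competitive circuit. It assumes a signed matrix in which `W[post, pre]` is positive for excitatory and negative for inhibitory connections.

```python
import numpy as np


def count_motifs(W):
    """Count reciprocal pairs and mutually inhibitory pairs in W.

    W[post, pre] != 0 means pre connects to post; positive entries are
    excitatory and negative entries inhibitory. Self-connections are ignored.
    """
    connected = W != 0
    inhibitory = W < 0
    reciprocal = connected & connected.T        # i -> j and j -> i
    mutual_inhib = inhibitory & inhibitory.T    # both directions inhibitory
    # The masks are symmetric, so count each unordered pair once
    # using the strict upper triangle (k=1 skips the diagonal).
    n_reciprocal = int(np.triu(reciprocal, k=1).sum())
    n_mutual_inhib = int(np.triu(mutual_inhib, k=1).sum())
    return n_reciprocal, n_mutual_inhib


W = np.zeros((4, 4))
W[1, 0], W[0, 1] = 3.0, 2.0     # excitatory feedback loop between 0 and 1
W[3, 2], W[2, 3] = -4.0, -6.0   # 2 and 3 inhibit each other (competition)
W[2, 0] = 5.0                   # one-way feedforward link 0 -> 2
print(count_motifs(W))
```

Real motif analyses go further. For example, they compare counts against randomized networks with the same degree distribution to decide whether a motif is genuinely over-represented.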

In deep learning, by contrast, networks start from randomly initialized parameters. Intelligence, or rather its appearance, emerges through optimization on large volumes of data (LeCun, Bengio, & Hinton, 2015). Architecture does introduce biases, but training partly smooths them out, and progress relies mainly on scaling up data and computation.

The fruit fly connectome suggests another possibility: part of intelligence may reside in the structure before any learning takes place. This opens an alternative paradigm for brain-inspired artificial intelligence, in which architectures that already contain useful computational properties give learning a stronger starting point. This approach has been framed as the use of strong architectural priors, or connectome-based approaches (Zador, 2019).

Energy Efficiency in Neural Computation: Why Brain Architecture Matters

There is also a physical argument that reinforces this idea: efficiency. A fly's brain performs complex tasks on a tiny energy budget. This suggests that efficiency depends less on the number of parameters than on how neural circuits are organized (Laughlin & Sejnowski, 2003). Connectomes let us study that organization explicitly. This principle lies at the heart of the growing field of neuromorphic computing, which seeks to build hardware and algorithms that match the brain's remarkable energy efficiency.
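One way to see why organization can matter more than parameter count is to count the synaptic operations a network performs. The sketch below compares two update schemes. A dense update touches every weight at every step, as a standard matrix-vector product does. An event-driven update processes only the outgoing synapses of neurons that actually spiked. The network size, connection probability and firing probability are illustrative assumptions. Operation counts are only a rough proxy for energy, and real savings depend on the hardware.

```python
import numpy as np


def synaptic_ops(W, spikes):
    """Count synaptic operations for dense vs event-driven updates.

    W[post, pre] is the connectivity; spikes is a (steps, n) boolean array
    recording which neurons fired at each step.
    """
    n_steps = spikes.shape[0]
    dense = n_steps * W.size                # every weight touched every step
    fan_out = (W != 0).sum(axis=0)          # outgoing synapses per presynaptic neuron
    event = int((spikes * fan_out).sum())   # only synapses of neurons that fired
    return dense, event


rng = np.random.default_rng(0)
n, steps = 1000, 100
W = (rng.random((n, n)) < 0.01).astype(float)   # ~1% connection probability
spikes = rng.random((steps, n)) < 0.01           # ~1% of neurons fire per step
dense, event = synaptic_ops(W, spikes)
print(f"dense: {dense:,} ops, event-driven: {event:,} ops")
```

With 1% connectivity and about 1% of neurons firing per step, the event-driven count is on the order of ten thousand operations. The dense update needs one hundred million. Event-driven processing of sparse spikes is one of the principles that neuromorphic hardware exploits.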

Limitations of the Drosophila Connectome: Why a Brain Wiring Diagram Is Not Enough

The paper has recently gained visibility, so it is important to put its findings in proper context.

The fly connectome does not allow complete prediction of behavior. It allows fairly accurate prediction of some local sensorimotor transformations, such as which neurons are activated or which nodes are necessary for a response, but it does not constitute a complete theory of behavior. The work itself acknowledges clear limitations. The model does not adequately incorporate neuromodulation, internal states, extrasynaptic signaling or sustained baseline activity. It also rests on highly simplified assumptions, such as a zero baseline firing rate. That means no spontaneous activity at all, whereas a real brain is active at all times (Shiu et al., 2024).

Here the connectome describes a structure of possibilities rather than the complete dynamics of the system. The same network can produce different behaviors depending on internal state, prior history or context. This idea is well established: connectivity constrains dynamics but does not fully determine them (Marder & Bucher, 2007; Bargmann, 2012). In our residential building, relationships make certain roles and behaviors highly probable, but they do not fix them. If something unexpected happens, such as a party, a meeting or a power outage, people act according to the context, not only according to their structural connectome. As Scheffer and Meinertzhagen (2021) have emphasized, “a connectome is not enough” to understand a brain.
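A toy network can reproduce both points: some nodes are necessary for a response, and the same wiring can yield different outputs depending on internal state. This sketch uses a minimal leaky integrate-and-fire scheme with hypothetical parameters. "Silencing" clamps a neuron at rest, as an in-silico stand-in for experimental silencing. The change of internal state is modeled crudely as a tonic drive onto the inhibitory interneuron. Neither is the paper's method or a model of real neuromodulation.

```python
import numpy as np


def simulate_lif(W, i_ext, silenced=(), t_max=200.0, dt=0.1, tau_m=20.0,
                 v_th=1.0, w_scale=0.1):
    """Fixed-weight LIF network; neurons listed in `silenced` are clamped at rest.

    W[post, pre] holds signed synapse counts, i_ext a constant drive per
    neuron (threshold units per ms). Returns spike counts per neuron.
    """
    n = W.shape[0]
    v = np.zeros(n)
    spiked = np.zeros(n, dtype=bool)
    counts = np.zeros(n, dtype=int)
    clamp = np.zeros(n, dtype=bool)
    clamp[np.asarray(silenced, dtype=int)] = True
    for _ in range(int(round(t_max / dt))):
        v += dt * (-v / tau_m + i_ext)               # leak plus external drive
        v += w_scale * (W @ spiked.astype(float))    # synaptic kicks
        v[clamp] = 0.0                               # in-silico silencing
        spiked = v >= v_th
        v[spiked] = 0.0                              # reset after a spike
        counts += spiked
    return counts


W = np.array([
    [0.0, 0.0, 0.0, 0.0],   # 0: gustatory neuron
    [12.0, 0.0, 0.0, 0.0],  # 1: excitatory interneuron
    [4.0, 0.0, 0.0, 0.0],   # 2: inhibitory interneuron
    [0.0, 9.0, -5.0, 0.0],  # 3: motor neuron
])
stimulus = np.array([0.1, 0.0, 0.0, 0.0])
tonic_state = np.array([0.0, 0.0, 0.5, 0.0])  # hypothetical internal-state drive

intact = simulate_lif(W, stimulus)
ablated = simulate_lif(W, stimulus, silenced=[1])
other_state = simulate_lif(W, stimulus + tonic_state)
print("motor spikes (intact, interneuron 1 silenced, other state):",
      intact[3], ablated[3], other_state[3])
```

Silencing the excitatory interneuron abolishes the motor response, which identifies it as a necessary node. Adding tonic drive to the inhibitory interneuron also suppresses the motor output. The wiring, the stimulus and the upstream activity are all unchanged: structure constrains the outcome, but state decides it.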

The Human Brain: Beyond Structural Connectivity

This limitation becomes even clearer in the human case. Suppose we had a complete human connectome, which does not exist today and may never become available. It would still not be sufficient to fully understand behavior. It would help delimit structural constraints, reveal organizational principles and improve dynamical models. But human behavior also depends on development, plasticity, the body, endocrinology, language, culture and social context.

Current studies that attempt to predict behavior from brain connectivity show clear limitations. Effect sizes are small, and reliable, reproducible estimates require samples of thousands of participants (Marek et al., 2022). The idea that a human connectome would allow us to fully “read” behavior is therefore an overinterpretation.
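A short calculation shows why small effects demand large samples. The sketch applies the standard Fisher z-transformation to compute an approximate 95% confidence interval around a correlation of 0.1 estimated from n participants. The value 0.1 is an illustrative assumption, not a figure taken from Marek et al. (2022), whose analyses were considerably more elaborate.

```python
import math


def correlation_ci(r, n, z_crit=1.96):
    """Approximate 95% CI for a Pearson correlation r from n samples.

    Fisher z-transformation: atanh(r) is approximately normal with
    standard error 1 / sqrt(n - 3).
    """
    z = math.atanh(r)
    se = 1.0 / math.sqrt(n - 3)
    return math.tanh(z - z_crit * se), math.tanh(z + z_crit * se)


r_obs = 0.1  # illustrative assumption, not a value reported by Marek et al.
for n in (25, 500, 4000):
    lo, hi = correlation_ci(r_obs, n)
    print(f"n = {n:>4}: 95% CI [{lo:+.2f}, {hi:+.2f}]")
```

With 25 participants, the interval runs from about -0.31 to +0.48, so even the sign of the effect is uncertain. With 4,000 participants, it narrows to roughly 0.07 to 0.13.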

From Connectome to Neuraxon: QUBIC’s Brain-Inspired AI Approach

At Neuraxon, we know that architecture contains computation, supports emergent intelligence and makes certain behaviors more likely. But we also know that it is not sufficient, which is why we add rich internal dynamics, neuromodulation and state. Neuraxon aims to occupy exactly that space. It introduces endogenous activity, neuromodulators, multiple timescales and plasticity, attempting to simulate several functions of the human brain, not only its structure. As explored in our deep dive on neural networks in AI and neuroscience, the gap between biological and artificial neural networks is precisely what Neuraxon seeks to bridge.
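The toy unit below makes these ingredients concrete. It is not Neuraxon's implementation, whose details are not given here. The function name, time constants, gain values and thresholds are all assumptions chosen for illustration. The unit combines four features:

- endogenous activity: a constant drive plus noise, so the unit is never silent
- a neuromodulatory gain on the input
- two timescales: a fast state and a slow adaptive state
- a three-state output: excitatory (+1), neutral (0) or inhibitory (-1)

```python
import numpy as np


def run_unit(inputs, modulator, dt=1.0, tau_fast=5.0, tau_slow=100.0,
             drive=0.05, noise=0.02, threshold=0.5, seed=0):
    """Hypothetical toy unit (not Neuraxon code) returning a ternary output.

    - endogenous activity: constant drive plus noise, present with zero input
    - neuromodulation: modulator[t] scales the gain on inputs[t]
    - two timescales: a fast state follows the input, a slow state adapts
    - output per step: +1 excitatory, 0 neutral, -1 inhibitory
    """
    rng = np.random.default_rng(seed)
    fast, slow = 0.0, 0.0
    out = []
    for x, m in zip(inputs, modulator):
        endogenous = drive + noise * rng.standard_normal()
        fast += dt / tau_fast * (-fast + m * x + endogenous)
        slow += dt / tau_slow * (-slow + fast)
        s = fast - slow                  # current input relative to recent history
        out.append(1 if s > threshold else (-1 if s < -threshold else 0))
    return np.array(out)


steps = 200
pulse = np.zeros(steps)
pulse[50:100] = 1.0                              # the same input in both runs
low_gain = run_unit(pulse, np.full(steps, 0.2))
high_gain = run_unit(pulse, np.full(steps, 2.0))
print("excitatory steps, low vs high gain:",
      int((low_gain == 1).sum()), int((high_gain == 1).sum()))
```

The same input pulse produces excitatory output under high modulatory gain but stays neutral under low gain, so the modulator, not the input alone, decides the response. A fixed-wiring, zero-baseline model has no equivalent control.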

Aigarth takes this approach one step further. As modeled, the fly connectome is a closed system with fixed wiring. Aigarth proposes systems in which structure can evolve, dynamics are continuous and function emerges without explicit training. Here, intelligence is not only the result of optimization but a property of organized dynamical systems (Friston, 2010).

From Optimization to Organization: The Future of Artificial Intelligence

Overall, the connectome of Drosophila does not solve the problem of behavior, but it shows us the importance of the starting point and the initial structure. It shows us that a significant part of intelligence lies in architecture. But between architecture and behavior there are still dynamics, state, history and context.

We must move from optimization (LLMs) to organization (Aigarth). We strongly believe this is one of the most relevant shifts in the future of artificial intelligence. Even a fly lends support to these ideas.

Explore the Full Neuraxon Intelligence Academy

The fruit fly shows that intelligence begins with architecture, and Neuraxon is building on that principle. To explore how brain-inspired AI is taking shape on QUBIC, start with the Neuraxon Intelligence Academy.

NIA Volume 1: Why Intelligence Is Not Computed in Steps, but in Time — Explores why biological intelligence operates in continuous time rather than discrete computational steps like traditional LLMs.

NIA Volume 2: Ternary Dynamics as a Model of Living Intelligence — Explains ternary dynamics and why three-state logic (excitatory, neutral, inhibitory) matters for modeling living systems.

NIA Volume 3: Neuromodulation and Brain-Inspired AI — Covers neuromodulation and how the brain's chemical signaling (dopamine, serotonin, acetylcholine, norepinephrine) inspires Neuraxon's architecture.

NIA Volume 4: Neural Networks in AI and Neuroscience — A deep comparison of biological neural networks, artificial neural networks, and Neuraxon's third-path approach.

NIA Volume 5: Astrocytes and Brain-Inspired AI — Explores how astrocytes regulate synaptic plasticity through the tripartite synapse, and how Neuraxon incorporates astrocytic gating to address the stability-plasticity dilemma, enabling the network to locally control when, where, and how much learning occurs.

Qubic is a decentralized, open-source network for experimental technology. To learn more, visit qubic.org. Join the discussion on X, Discord, and Telegram.