QUBIC BLOG POST

Brain Criticality and the Branching Ratio in Neural and Artificial Networks: A Bioinspired Principle in Neuraxon

Written by Qubic Scientific Team

Published: May 13, 2026

Branching ratio and criticality in biological networks, in artificial networks, and as a bioinspired principle in Neuraxon

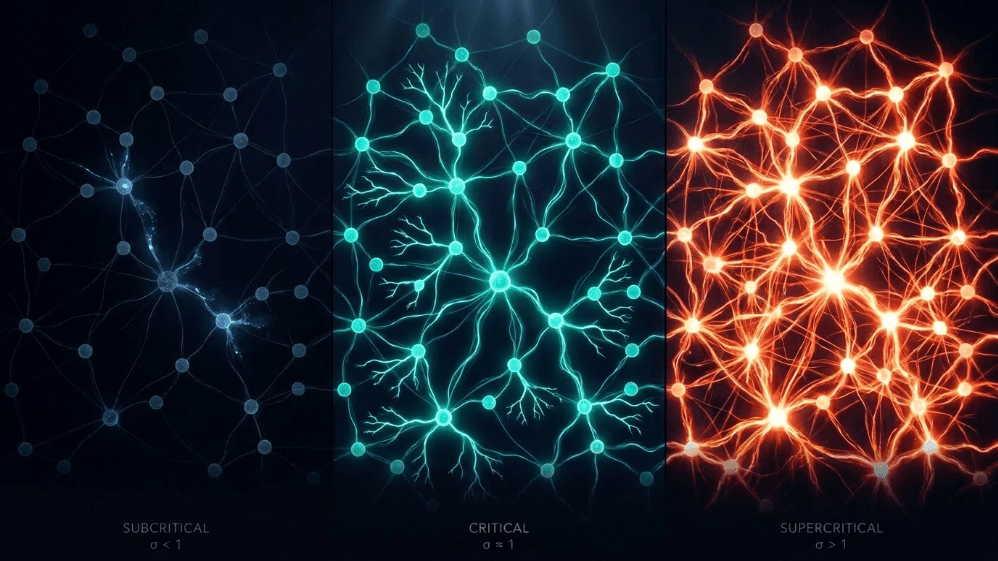

Fig. 1. Three regimes of neural network dynamics defined by the branching ratio (σ).

What do a snow avalanche, a forest fire, an earthquake, and the spontaneous activity of the cerebral cortex have in common?

They all operate at a frontier between order and chaos known as a critical state. In the brain, proximity to that edge is summarized by a simple parameter: the branching ratio (σ, also written m in part of the literature). It is the average number of neuronal "offspring" that each active "parent" neuron triggers in the next time step. When σ ≈ 1, activity neither dies out nor explodes; it reverberates.

Beggs and Plenz (2003) recorded the spontaneous activity of rat cortical tissue (organotypic cultures and acute slices) and found that it formed cascade-like patterns, the so-called neuronal avalanches, with a branching ratio close to 1. The cortex seemed to live at a critical point. Estimates from human recordings also place σ close to unity (Wang et al., 2025; Plenz et al., 2021; Wilting & Priesemann, 2019).

At the critical point, systems simultaneously exhibit maximal sensitivity to perturbations (responsiveness), maximal dynamic capacity (number of accessible states), maximal information transmission, and maximal complexity (Timme et al., 2016; Shew et al., 2009, 2011).

What Is the Branching Ratio and How Is It Measured?

Conceptually, the branching ratio is simple: if at time step t there are A(t) active neurons and at t+1 there are A(t+1), then σ is the average of their ratio over time:

σ = ⟨ A(t+1) / A(t) ⟩
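As a minimal sketch of this definition, the snippet below averages A(t+1)/A(t) over a short series of activity counts. The function name `branching_ratio` and the example counts are illustrative assumptions, and skipping time steps with zero activity is a choice made here to avoid division by zero, not something the definition prescribes.

```python
import numpy as np

def branching_ratio(activity):
    """Naive estimate of sigma: mean of A(t+1)/A(t) over steps with A(t) > 0."""
    a = np.asarray(activity, dtype=float)
    parents, children = a[:-1], a[1:]
    mask = parents > 0  # a ratio is undefined when no neuron was active
    if not mask.any():
        raise ValueError("no time step with nonzero activity")
    return float(np.mean(children[mask] / parents[mask]))

# Hypothetical activity counts for six consecutive time bins
counts = [4, 4, 2, 2, 1, 1]
print(branching_ratio(counts))  # ratios 1, 0.5, 1, 0.5, 1 give a mean of 0.8
```

On fully observed activity this direct average is enough; on real recordings it is only a starting point.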

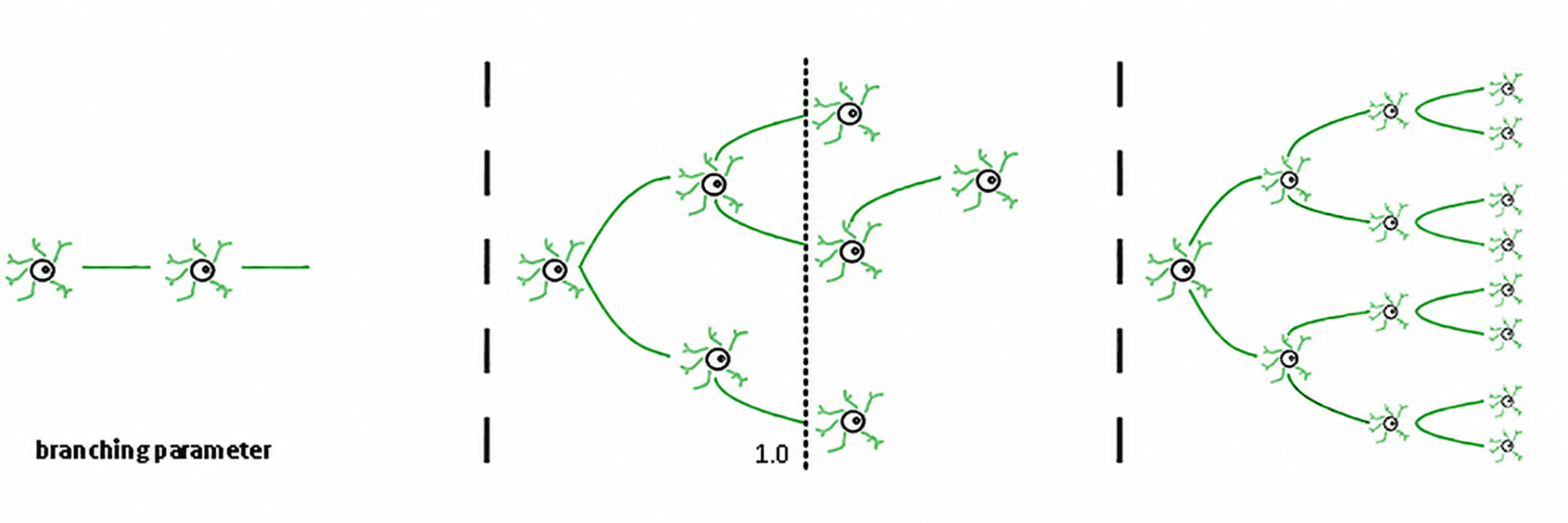

Fig. 2. Branching parameter regimes in neural networks. A branching parameter of 1 (center) allows balanced one-to-one transmission of neural signals. Values less than 1 (left) lead to subcritical decay; values greater than 1 (right) lead to supercritical runaway excitation. Adapted from Beggs & Timme (2012), reproduced from Zimmern (2020), Frontiers in Neural Circuits. CC BY 4.0.

Three regimes follow from this (de Carvalho & Prado, 2000; Haldeman & Beggs, 2005):

Subcritical (σ < 1): activity decays; the system "forgets" the perturbation quickly. It is stable but poor in memory and not very expressive.

Supercritical (σ > 1): activity explodes into cascades. This is the signature of pathological regimes such as epileptic seizures (Hsu et al., 2008; Hagemann et al., 2021).

Critical (σ ≈ 1): each spike, on average, generates another spike. Activity reverberates, neuronal avalanches obey power laws, and the system maintains a structured memory of the input.
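The three regimes can be reproduced with a toy branching process in which every active unit independently triggers a Poisson-distributed number of units in the next step, with mean σ. In that model the expected activity follows E[A(t)] = A(0)·σ^t, so it decays, persists, or grows depending on which side of 1 the ratio falls. This is a sketch under those assumptions only: the function name, the starting activity of 100, and the Poisson offspring law are illustrative choices, not a model of any particular recording.

```python
import numpy as np

def simulate_branching(sigma, steps=30, a0=100, seed=0):
    """Simulate A(t+1) ~ Poisson(sigma * A(t)) without external input."""
    rng = np.random.default_rng(seed)
    activity = [a0]
    for _ in range(steps):
        # The sum of A independent Poisson(sigma) draws is Poisson(sigma * A)
        activity.append(int(rng.poisson(sigma * activity[-1])))
    return np.array(activity)

for sigma in (0.8, 1.0, 1.2):
    trace = simulate_branching(sigma)
    expected = 100 * sigma ** 30
    print(f"sigma={sigma}: final activity {trace[-1]}, expected about {expected:.1f}")
```

Without external input the critical case still fluctuates and can eventually die out by chance, which is one reason practical models add a small drive.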

The beauty of σ is that it is a single number that summarizes the global dynamical regime. But measuring it is less trivial: electrodes capture only a small fraction of the neurons in a circuit, and this subsampling can bias conventional estimates of σ. When estimators designed to be robust to subsampling are applied to in vivo cortical recordings, they indicate that the cortex does not operate exactly at σ = 1, but slightly below, in a regime that Wilting et al. (2018) call reverberating. The difference is important: being exactly at σ = 1 would be like pedaling a bicycle balanced on a tightrope; being slightly below allows for rapid adjustment to task demands without the risk of runaway explosion.
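One reason measurement is hard is that a recording sees only a fraction of the active units. The sketch below illustrates the idea behind multistep regression as we understand it from Wilting & Priesemann (2018): the regression slope of a(t+k) on a(t) behaves like b·σ^k, where the bias b depends on the sampling fraction but σ does not, so fitting several lags recovers σ. Everything here is an assumption made for illustration: the driven Poisson branching process, the 5% sampling fraction, the function names, and the log-linear fit, which is a simplification rather than the fitting procedure of the published estimator or its toolbox.

```python
import numpy as np

def simulate_subsampled(m, h, p, steps, seed=0):
    """Driven branching process A(t+1) ~ Poisson(m*A(t) + h), observed with probability p per unit."""
    rng = np.random.default_rng(seed)
    full = np.empty(steps, dtype=np.int64)
    a = int(round(h / (1.0 - m)))  # start near the stationary mean (requires m < 1)
    for t in range(steps):
        a = rng.poisson(m * a + h)
        full[t] = a
    return rng.binomial(full, p)  # each active unit is seen independently

def regression_slopes(a, k_max):
    """Slope of the linear regression of a(t+k) on a(t) for k = 1..k_max."""
    a = np.asarray(a, dtype=float)
    slopes = []
    for k in range(1, k_max + 1):
        x, y = a[:-k], a[k:]
        slopes.append(np.cov(x, y)[0, 1] / np.var(x, ddof=1))
    return np.array(slopes)

def mr_estimate(a, k_max=15):
    """Fit slopes r_k = b * m**k by a straight line in log space and return m."""
    k = np.arange(1, k_max + 1)
    r = regression_slopes(a, k_max)
    if np.any(r <= 0):
        raise ValueError("non-positive slope; use fewer lags or more data")
    log_m, _log_b = np.polyfit(k, np.log(r), 1)
    return float(np.exp(log_m))

observed = simulate_subsampled(m=0.95, h=10.0, p=0.05, steps=100_000)
print("single-lag slope:", round(float(regression_slopes(observed, 1)[0]), 3))
print("multistep estimate:", round(mr_estimate(observed), 3))
```

With these settings the single-lag slope falls far below the true value of 0.95, while the multistep fit lands close to it, which is the kind of correction that matters when only a few neurons out of a large population are recorded.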

Criticality in Artificial Neural Networks: From the Edge of Chaos to Reservoir Computing

Bertschinger and Natschläger (2004) showed that random recurrent threshold networks reach their maximal computational capacity on temporal processing tasks precisely at the order–chaos transition.

Boedecker et al. (2012) extended the analysis to echo state networks within the reservoir computing paradigm, confirming that information transfer capacity and active memory are maximized at the edge of chaos.

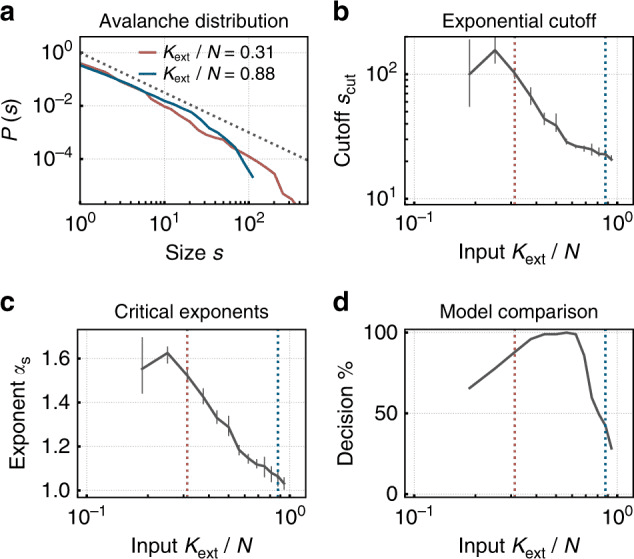

Fig. 3. A spiking neuromorphic network with synaptic plasticity self-organizes toward criticality under low external input, exhibiting power-law avalanche size distributions — the hallmark of the critical state in both biological and artificial neural networks. Under higher input, the network shifts to a subcritical regime with truncated distributions. Reproduced from Cramer et al. (2020), Nature Communications, 11, 2853. CC BY 4.0.

In the language of artificial recurrent networks, the analogous control parameter is the spectral radius of the recurrent weight matrix, that is, the largest absolute value among its eigenvalues. When it exceeds 1, small perturbations tend to grow exponentially and the dynamics can become chaotic; when it is well below 1, the network relaxes quickly to a fixed point and loses memory of its input. A spectral radius close to 1 is, in this context, the closest formal analogue of the biological σ ≈ 1 (Magnasco, 2022; Morales et al., 2023). In spiking neural networks, the branching ratio can be measured with methods almost identical to those used in neuronal cultures (Cramer et al., 2020; Zeraati et al., 2024).
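For readers who want to see the spectral radius in practice, here is a small sketch that computes it for a random recurrent weight matrix, rescales the matrix to a chosen radius, and tracks a perturbation under the linear update x(t+1) = W·x(t). The matrix size, the Gaussian weights, and the function names are assumptions for illustration. The purely linear update is also a simplification, since real reservoirs apply a nonlinearity that bounds the growth.

```python
import numpy as np

def spectral_radius(w):
    """Largest absolute eigenvalue of a square weight matrix."""
    return float(np.max(np.abs(np.linalg.eigvals(w))))

def rescale_to_radius(w, target):
    """Scale w so that its spectral radius equals target."""
    rho = spectral_radius(w)
    if rho == 0.0:
        raise ValueError("cannot rescale a matrix with zero spectral radius")
    return w * (target / rho)

def perturbation_norm(w, steps, seed=1):
    """Norm of a random unit perturbation after `steps` linear updates x <- w @ x."""
    rng = np.random.default_rng(seed)
    x = rng.normal(size=w.shape[0])
    x /= np.linalg.norm(x)
    for _ in range(steps):
        x = w @ x
    return float(np.linalg.norm(x))

rng = np.random.default_rng(0)
w = rng.normal(size=(100, 100)) / np.sqrt(100)  # hypothetical random reservoir

for target in (0.8, 1.0, 1.2):
    w_scaled = rescale_to_radius(w, target)
    print(f"radius={target}: perturbation norm after 200 steps = "
          f"{perturbation_norm(w_scaled, 200):.3e}")
```

Rescaling a random matrix to a radius just below 1 is a common initialization heuristic in echo state networks, for much the reason described above.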

Why Does Brain Criticality Maximize Neural Computation?

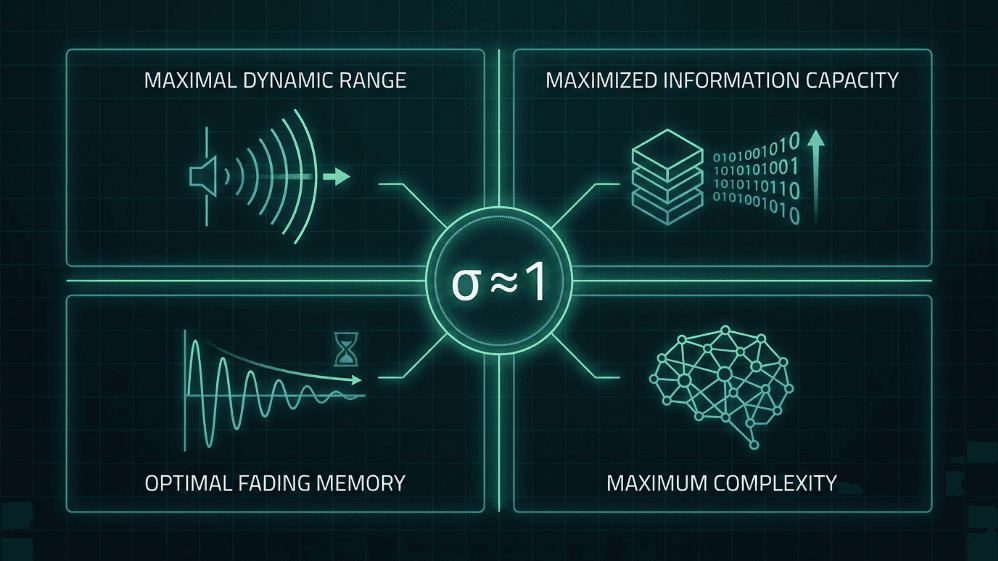

Operating close to σ ≈ 1 provides four advantages that are central to both the critical brain hypothesis and the design of brain-inspired AI systems:

Maximal dynamic range. Shew et al. (2009) showed that the range of input intensities the cortex can discriminate is maximal when the excitation–inhibition balance places the network at criticality.

Maximized information capacity. The entropy of avalanche patterns and the mutual information between input and output peak at σ ≈ 1 (Shew et al., 2011).

Optimal fading memory. In the critical regime, the perturbation is sustained just long enough to influence processing without contaminating the distant future; it is the sweet spot between stability and temporal integration (Boedecker et al., 2012).

Complexity as a unifying measure. Timme et al. (2016) demonstrated that neural complexity is maximized at the critical point, linking criticality with formal theories of consciousness and information processing.

Fig. 4. Four computational advantages of operating near the critical branching ratio (σ ≈ 1). At criticality, neural networks achieve maximal dynamic range, maximized information capacity, optimal fading memory, and maximum complexity — properties that are central to both the critical brain hypothesis and brain-inspired AI design.
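The fading-memory advantage can be made concrete with one line of arithmetic. In a branching process the expected trace of a perturbation decays as σ^t = exp(−t/τ), which gives a memory timescale τ = −Δt / ln σ for 0 < σ < 1. The sketch below evaluates that relation; the function name and the choice of Δt = 1 time step are assumptions for illustration.

```python
import math

def intrinsic_timescale(sigma, dt=1.0):
    """Decay time of a perturbation, tau = -dt / ln(sigma), for 0 < sigma < 1."""
    if not 0.0 < sigma < 1.0:
        raise ValueError("tau is finite only for 0 < sigma < 1")
    return -dt / math.log(sigma)

for sigma in (0.5, 0.9, 0.98, 0.999):
    print(f"sigma={sigma}: tau is about {intrinsic_timescale(sigma):.1f} time steps")
```

The timescale grows without bound as σ approaches 1, so small changes in σ near the critical point translate into large changes in how long an input is remembered.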

The Brain Does Not Always Operate at σ = 1

None of this implies that the brain always operates at exactly σ = 1. The evidence instead suggests a slightly subcritical, tunable regime: during demanding tasks the network approaches criticality, during deep sleep it moves away, and pathological states (epilepsy, deep anesthesia, certain psychiatric conditions) are associated with measurable deviations from this operational range (Meisel et al., 2017; Zimmern, 2020). The branching ratio is therefore emerging as a candidate dynamic biomarker of the functional state of the nervous system.

Why We Use the Branching Ratio in Neuraxon: Bioinspired AI Design at the Edge of Chaos

Neuraxon is a bioinspired system that adopts dynamical principles of the cortex as design constraints. The branching ratio is one of the most important, and we use it for four reasons:

As a Real-Time Operational Invariant for Neural Network Stability

In deep spiking or recurrent architectures, the dual risk of activity collapse (silent network, vanishing gradients) and runaway explosion (saturation, exploding gradients) is structural. Monitoring σ in real time gives us a single diagnostic scalar, independent of the concrete architecture, that indicates whether the system is alive in the computational sense.
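To illustrate what monitoring σ as a diagnostic scalar could look like, here is a hypothetical sliding-window monitor. It is not Neuraxon's implementation: the class name, the window length, the ±0.05 tolerance band, and the regime labels are all assumptions. It also pools counts over the window (total offspring divided by total parents) rather than averaging individual ratios, a variant chosen here because it stays defined when single time steps are silent.

```python
from collections import deque

class BranchingMonitor:
    """Sliding-window estimate of sigma from a stream of active-unit counts."""

    def __init__(self, window=100, tolerance=0.05):
        self.pairs = deque(maxlen=window)  # recent (A(t), A(t+1)) pairs
        self.prev = None
        self.tolerance = tolerance

    def update(self, active_count):
        """Register the count for the newest time step and return the current estimate."""
        if self.prev is not None:
            self.pairs.append((self.prev, active_count))
        self.prev = active_count
        return self.sigma()

    def sigma(self):
        """Pooled ratio over the window, or None if no parent activity was seen."""
        parents = sum(p for p, _ in self.pairs)
        if parents == 0:
            return None
        return sum(c for _, c in self.pairs) / parents

    def regime(self):
        s = self.sigma()
        if s is None:
            return "silent"
        if s < 1.0 - self.tolerance:
            return "subcritical"
        if s > 1.0 + self.tolerance:
            return "supercritical"
        return "near-critical"

monitor = BranchingMonitor(window=50)
for count in [10, 8, 6, 5, 4]:  # hypothetical decaying activity
    monitor.update(count)
print(monitor.sigma(), monitor.regime())
```

Because the monitor consumes only activity counts, the same object could in principle sit on top of a spiking layer, a recurrent layer, or a recorded spike train.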

As a Bioinspired Self-Regulation Target Through Self-Organized Criticality

The network self-organizes toward criticality without the need for centralized fine-tuning, replicating the principle of self-organized criticality (Bornholdt & Röhl, 2003; Levina et al., 2007). This drastically reduces sensitivity to hyperparameters and endows the system with robustness against distribution shifts. As we explored in NIA Volume 7 on artificial life and digital ecosystems, this is exactly how emergent complexity arises from local rules without centralized control.
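A toy example can show how a purely local rule drives a network toward criticality. In the sketch below, a single gain g plays the role of the branching ratio, activity follows A(t+1) ~ Poisson(g·A(t) + h) with external input h, and a slow homeostatic rule nudges g so that activity stays near a target level. At the fixed point g = 1 − h / target, so weak input pushes g toward 1 and strong input leaves the system subcritical, in line with the behavior described in the Fig. 3 caption. This is a generic homeostatic controller written for illustration. It is not the mechanism of Bornholdt & Röhl (2003) or Levina et al. (2007), and not Neuraxon's rule; all names and parameter values are assumptions.

```python
import numpy as np

def self_organize(h, target=1000.0, eta=1e-4, steps=20_000, seed=0):
    """Return the gain reached by a homeostatic rule that holds activity near `target`."""
    rng = np.random.default_rng(seed)
    g, a = 0.5, target  # start clearly subcritical
    history = []
    for _ in range(steps):
        a = rng.poisson(g * a + h)           # branching dynamics with external drive
        g += eta * (target - a) / target     # local rule: raise gain when too quiet
        g = max(g, 0.0)                      # keep the Poisson rate non-negative
        history.append(g)
    return float(np.mean(history[-steps // 4:]))  # average over the final quarter

for h in (10.0, 200.0):
    g_final = self_organize(h)
    print(f"input h={h}: gain settles near {g_final:.3f} "
          f"(fixed point {1 - h / 1000.0:.3f})")
```

No unit in this sketch knows the global value of σ; the near-critical state under weak input emerges from the interplay between the drive and the set point.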

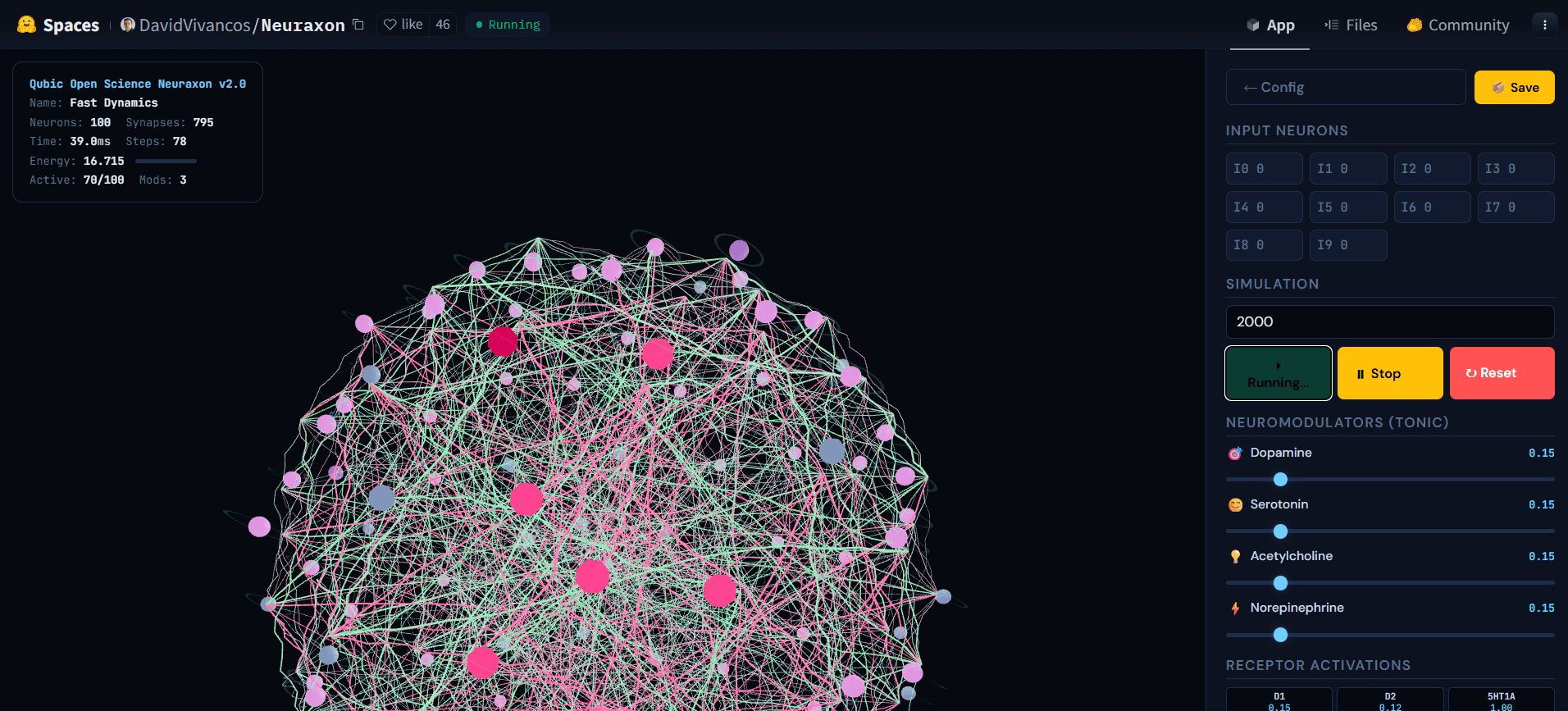

Fig. 5. Neuraxon 3D network during active simulation, showing cascading activity across ternary-state neurons. Brightly active nodes (pink) propagate signals through excitatory (green) and inhibitory (pink) connections while other neurons remain at rest (gray), illustrating a reverberating regime near the critical branching ratio (σ ≈ 1). This balanced state, neither silent nor explosive, is what Neuraxon self-organizes toward using bioinspired criticality principles. Explore the interactive demo at huggingface.co/spaces/DavidVivancos/Neuraxon. Source: Qubic Scientific Team.

As a Bridge Between Neuroscientific Observation and AI Design

The branching ratio is one of the very few quantities that can be measured with the same formalism in electrophysiology, fMRI, and artificial networks. This allows for testing bidirectional hypotheses: if an intervention improves biological criticality, we can ask whether the same intervention, translated into the artificial architecture, improves the model's computation, and vice versa. This principle is central to the neuromodulation framework and the astrocytic gating mechanisms we have developed in previous volumes of this academy.

As a Functional, Not Aesthetic, Criterion for Brain-Inspired AI

Criticality is an operational constraint with empirical consequences. Operating near the reverberating regime improves — as measured in our internal evaluations and submitted publications — generalization capacity, stability under input perturbations, representational richness, and the temporal coherence of reasoning. These effects qualitatively match those reported in both the biological (Cocchi et al., 2017) and artificial (Cramer et al., 2020; Morales et al., 2023) literature.

The Branching Ratio: From Statistical Physics to Brain-Inspired AI Architecture

The branching ratio is a conceptual rara avis: simple enough to reduce to a single formula, deep enough to bridge statistical physics, neuroscience, AI, and systems design. For the biological brain, σ ≈ 1 seems to be the regime where the virtuous combination of sensitivity, memory, expressiveness, and robustness emerges. For artificial networks, the same frontier, rebranded as the edge of chaos, predicts maximal computational capacity.

And for Neuraxon, it is a guiding principle of bioinspired design: an auditable, self-regulating, and biologically meaningful metric that helps us keep the system alive, in the richest sense of the word.

References

Beggs, J. M., & Plenz, D. (2003). Neuronal avalanches in neocortical circuits. The Journal of Neuroscience, 23(35), 11167–11177. https://doi.org/10.1523/JNEUROSCI.23-35-11167.2003

Bertschinger, N., & Natschläger, T. (2004). Real-time computation at the edge of chaos in recurrent neural networks. Neural Computation, 16(7), 1413–1436. https://doi.org/10.1162/089976604323057443

Boedecker, J., Obst, O., Lizier, J. T., Mayer, N. M., & Asada, M. (2012). Information processing in echo state networks at the edge of chaos. Theory in Biosciences, 131(3), 205–213. https://doi.org/10.1007/s12064-011-0146-8

Bornholdt, S., & Röhl, T. (2003). Self-organized critical neural networks. Physical Review E, 67(6), 066118. https://doi.org/10.1103/PhysRevE.67.066118

Cocchi, L., Gollo, L. L., Zalesky, A., & Breakspear, M. (2017). Criticality in the brain: A synthesis of neurobiology, models and cognition. Progress in Neurobiology, 158, 132–152. https://doi.org/10.1016/j.pneurobio.2017.07.002

Cramer, B., Stöckel, D., Kreft, M., Wibral, M., Schemmel, J., Meier, K., & Priesemann, V. (2020). Control of criticality and computation in spiking neuromorphic networks with plasticity. Nature Communications, 11, 2853. https://doi.org/10.1038/s41467-020-16548-3

de Carvalho, J. X., & Prado, C. P. C. (2000). Self-organized criticality in the Olami-Feder-Christensen model. Physical Review Letters, 84(17), 4006–4009. https://doi.org/10.1103/PhysRevLett.84.4006

Derrida, B., & Pomeau, Y. (1986). Random networks of automata: A simple annealed approximation. Europhysics Letters, 1(2), 45–49. https://doi.org/10.1209/0295-5075/1/2/001

Hagemann, A., Wilting, J., Samimizad, B., Mormann, F., & Priesemann, V. (2021). Assessing criticality in pre-seizure single-neuron activity of human epileptic cortex. PLOS Computational Biology, 17(3), e1008773. https://doi.org/10.1371/journal.pcbi.1008773

Haldeman, C., & Beggs, J. M. (2005). Critical branching captures activity in living neural networks and maximizes the number of metastable states. Physical Review Letters, 94(5), 058101. https://doi.org/10.1103/PhysRevLett.94.058101

Hsu, D., Chen, W., Hsu, M., & Beggs, J. M. (2008). An open hypothesis: Is epilepsy learned, and can it be unlearned? Epilepsy & Behavior, 13(3), 511–522. https://doi.org/10.1016/j.yebeh.2008.05.007

Langton, C. G. (1990). Computation at the edge of chaos: Phase transitions and emergent computation. Physica D: Nonlinear Phenomena, 42(1–3), 12–37. https://doi.org/10.1016/0167-2789(90)90064-V

Levina, A., Herrmann, J. M., & Geisel, T. (2007). Dynamical synapses causing self-organized criticality in neural networks. Nature Physics, 3(12), 857–860. https://doi.org/10.1038/nphys758

Magnasco, M. O. (2022). Robustness and flexibility of neural function through dynamical criticality. Entropy, 24(5), 591. https://doi.org/10.3390/e24050591

Meisel, C., Klaus, A., Vyazovskiy, V. V., & Plenz, D. (2017). The interplay between long- and short-range temporal correlations shapes cortex dynamics across vigilance states. The Journal of Neuroscience, 37(42), 10114–10124. https://doi.org/10.1523/JNEUROSCI.0448-17.2017

Morales, G. B., di Santo, S., & Muñoz, M. A. (2023). Unveiling the intrinsic dynamics of biological and artificial neural networks: From criticality to optimal representations. Frontiers in Complex Systems, 1, 1276338. https://doi.org/10.3389/fcpxs.2023.1276338

Plenz, D., Ribeiro, T. L., Miller, S. R., Kells, P. A., Vakili, A., & Capek, E. L. (2021). Self-organized criticality in the brain. Frontiers in Physics, 9, 639389. https://doi.org/10.3389/fphy.2021.639389

Shew, W. L., Yang, H., Petermann, T., Roy, R., & Plenz, D. (2009). Neuronal avalanches imply maximum dynamic range in cortical networks at criticality. The Journal of Neuroscience, 29(49), 15595–15600. https://doi.org/10.1523/JNEUROSCI.3864-09.2009

Shew, W. L., Yang, H., Yu, S., Roy, R., & Plenz, D. (2011). Information capacity and transmission are maximized in balanced cortical networks with neuronal avalanches. The Journal of Neuroscience, 31(1), 55–63. https://doi.org/10.1523/JNEUROSCI.4637-10.2011

Spitzner, F. P., Dehning, J., Wilting, J., Hagemann, A., Neto, J. P., Zierenberg, J., & Priesemann, V. (2021). MR. Estimator, a toolbox to determine intrinsic timescales from subsampled spiking activity. PLOS ONE, 16(4), e0249447. https://doi.org/10.1371/journal.pone.0249447

Timme, N. M., Marshall, N. J., Bennett, N., Ripp, M., Lautzenhiser, E., & Beggs, J. M. (2016). Criticality maximizes complexity in neural tissue. Frontiers in Physiology, 7, 425. https://doi.org/10.3389/fphys.2016.00425

Turrigiano, G. G. (2008). The self-tuning neuron: Synaptic scaling of excitatory synapses. Cell, 135(3), 422–435. https://doi.org/10.1016/j.cell.2008.10.008

Wang, J., Cao, R., Brunton, B. W., Smith, R. E. W., Buckner, R. L., & Liu, T. T. (2025). Genetic contributions to brain criticality and its relationship with human cognitive functions. Proceedings of the National Academy of Sciences, 122(26), e2417010122. https://doi.org/10.1073/pnas.2417010122

Wilting, J., Dehning, J., Pinheiro Neto, J., Rudelt, L., Wibral, M., Zierenberg, J., & Priesemann, V. (2018). Operating in a reverberating regime enables rapid tuning of network states to task requirements. Frontiers in Systems Neuroscience, 12, 55. https://doi.org/10.3389/fnsys.2018.00055

Wilting, J., & Priesemann, V. (2018). Inferring collective dynamical states from widely unobserved systems. Nature Communications, 9, 2325. https://doi.org/10.1038/s41467-018-04725-4

Wilting, J., & Priesemann, V. (2019). 25 years of criticality in neuroscience — Established results, open controversies, novel concepts. Current Opinion in Neurobiology, 58, 105–111. https://doi.org/10.1016/j.conb.2019.08.002

Yu, C. (2022). Toward a unified analysis of the brain criticality hypothesis: Reviewing several available tools. Frontiers in Neural Circuits, 16, 911245. https://doi.org/10.3389/fncir.2022.911245

Zeraati, R., Engel, T. A., & Levina, A. (2024). Estimating intrinsic timescales and criticality from neural recordings: Methods and pitfalls. Current Opinion in Neurobiology, 86, 102871. https://doi.org/10.1016/j.conb.2024.102871

Zimmern, V. (2020). Why brain criticality is clinically relevant: A scoping review. Frontiers in Neural Circuits, 14, 54. https://doi.org/10.3389/fncir.2020.00054

Explore the Complete Neuraxon Intelligence Academy

This is Volume 8 of the Neuraxon Intelligence Academy by the Qubic Scientific Team. If you are just joining us, explore the complete series to build a full understanding of the science behind Neuraxon, Aigarth, and Qubic's approach to brain-inspired, decentralized artificial intelligence:

NIA Vol. 1: Why Intelligence Is Not Computed in Steps, but in Time — Explores why biological intelligence operates in continuous time rather than discrete computational steps like traditional LLMs.

NIA Vol. 2: Ternary Dynamics as a Model of Living Intelligence — Explains ternary dynamics and why three-state logic (excitatory, neutral, inhibitory) matters for modeling living systems.

NIA Vol. 3: Neuromodulation and Brain-Inspired AI — Covers neuromodulation and how the brain's chemical signaling (dopamine, serotonin, acetylcholine, norepinephrine) inspires Neuraxon's architecture.

NIA Vol. 4: Neural Networks in AI and Neuroscience — A deep comparison of biological neural networks, artificial neural networks, and Neuraxon's third-path approach.

NIA Vol. 5: Astrocytes and Brain-Inspired AI — How astrocytic gating transforms neural network plasticity through the AGMP framework in Neuraxon.

NIA Vol. 6: Conscious Machines vs Intelligent Organisms: AI Consciousness Explained — Explores AI consciousness through the lens of Global Workspace Theory, Integrated Information Theory, and predictive coding.

NIA Vol. 7: Conway's Game of Life, Artificial Life, and Digital Ecosystems — The science behind Qubic, Aigarth, and Neuraxon's approach to emergent complexity and self-organized criticality in decentralized AI.

Qubic is a decentralized, open-source network for experimental technology. To learn more, visit qubic.org. Join the discussion on X, Discord, and Telegram.