QUBIC BLOG POST

Why and When We Need Superintelligence: A Commentary on Nick Bostrom’s 2026 Paper

Written by

Qubic Scientific Team

Published:

Mar 10, 2026


A commentary on Nick Bostrom’s latest paper by Qubic Scientific Team

Nick Bostrom is a Swedish philosopher, long based at the University of Oxford, and one of the most influential voices in the debate on existential risks and artificial superintelligence. His landmark book Superintelligence: Paths, Dangers, Strategies (2014) was one of the first works to lay out systematically the strategic risks of developing an intelligence superior to our own.

He has just published a new working paper, Optimal Timing for Superintelligence: Mundane Considerations for Existing People (2026), in which he shifts the central question. Rather than asking whether we should develop superintelligence, Bostrom focuses on when it is optimal to do so. For anyone following the rapidly evolving intersection of AI and blockchain, his framework carries profound implications for how we design the infrastructure that will underpin artificial general intelligence (AGI).

Reframing the Superintelligence Debate: Surgery, Not Roulette

The starting point of Bostrom’s paper is both elegant and disruptive. He reframes the polarized “AI yes vs. AI no” debate entirely. Developing superintelligence, he argues, is not like playing Russian roulette. It is more like undergoing a risky surgery for a condition that is already fatal.

What is that condition? The current state of humanity itself. Consider the baseline: approximately 170,000 people die every day worldwide from aging, disease, and systemic failures. An aging global population faces irreversible biological deterioration. Incurable diseases, including oncological, neurodegenerative, and cardiovascular conditions, continue to burden millions. We confront unmitigated global risks, from climate instability to systemic institutional corruption and the erosion of democratic quality. Pandemics, wars, and the collapse of entire systems remain ever-present threats.

Given these realities, Bostrom argues that framing the choice as “zero risk without AI” versus “extreme risk with a superintelligence” is simplistic. The more rigorous question is: which trajectory yields longer life expectancy and higher quality of life for people who already exist?

By anchoring his analysis in the real, present conditions of human life, Bostrom sidesteps philosophical abstractions and theological speculation. He is talking about you, your family, and the people alive right now.

Life Expectancy, Mortality Risk, and the Case for Artificial General Intelligence

When we are young, the annual risk of dying is extremely low. Biologically, we are far from death in most cases. But as we age, the probability of dying climbs relentlessly due to biological deterioration.

If superintelligence could radically reduce or even eliminate aging, as Bostrom proposes, your annual mortality risk would stay at the level of a healthy young person. Your mortality would stop increasing over time. In that scenario, life expectancy becomes extraordinarily long.
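To see why a flat mortality rate changes the picture so dramatically, the sketch below compares expected remaining years under a constant annual risk of death with a Gompertz-style risk that doubles every few years, as adult human mortality roughly does. The inputs (a 0.1% starting risk, an 8-year doubling time, a 110-year horizon) are illustrative assumptions, not figures from Bostrom’s paper.

```python
def remaining_years_constant(annual_risk: float) -> float:
    """Expected further years lived when the annual risk of death never rises.

    Summing the survival probabilities (1 - p)**k for k = 1, 2, ... gives the
    closed form (1 - p) / p, which is roughly 1 / p for small p.
    """
    return (1.0 - annual_risk) / annual_risk


def remaining_years_aging(start_risk: float, doubling_years: float,
                          max_years: int = 110) -> float:
    """Expected further years when the annual risk doubles every `doubling_years`.

    Gompertz-style hazard: risk(t) = start_risk * 2 ** (t / doubling_years),
    capped at 1 and summed over at most `max_years` further years.
    """
    survival = 1.0
    expected = 0.0
    for t in range(max_years):
        risk = min(1.0, start_risk * 2 ** (t / doubling_years))
        survival *= 1.0 - risk
        expected += survival
    return expected


# Hypothetical inputs: a healthy young adult with a 0.1% annual risk of death.
no_aging = remaining_years_constant(0.001)
with_aging = remaining_years_aging(0.001, doubling_years=8.0)
print(f"risk frozen at young-adult level: {no_aging:.0f} more years")
print(f"risk doubling every 8 years:      {with_aging:.0f} more years")
```

Both functions count expected years the same way (as a sum of survival probabilities), so the gap between them comes entirely from the rising hazard.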

From this vantage point, the expected benefit of superintelligence can outweigh its high risks. But what happens if we delay until the technology becomes perfectly “safe”? Every year of waiting adds to the accumulated probability of dying. The question becomes: is it more rational to accept the probability of catastrophe from early deployment, or to accept the certainty of the deaths that accumulate during a delay, even allowing that AI safety research may advance rapidly in the meantime?
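The trade-off in that question can be made concrete with a toy expected-value calculation. Everything in the sketch is an assumption for illustration, not Bostrom’s actual model: a constant background annual mortality `m` while we wait, a catastrophe probability `p0` at launch that falls by a fraction `r` for each year of delay, and `post_years` expected further years for anyone alive when a successful launch happens.

```python
def expected_years(delay: int, m: float, p0: float, r: float,
                   post_years: float) -> float:
    """Expected further life-years for a person alive today if launch happens after `delay` years.

    Years lived while waiting are counted; then the person, if still alive,
    gains `post_years` with probability 1 - (catastrophe risk at launch).
    """
    waiting = sum((1 - m) ** t for t in range(1, delay + 1))
    alive_at_launch = (1 - m) ** delay
    catastrophe = p0 * (1 - r) ** delay
    return waiting + alive_at_launch * (1 - catastrophe) * post_years


def best_delay(m: float, p0: float, r: float, post_years: float,
               max_delay: int = 50) -> int:
    """Grid-search the whole-year delay that maximizes expected life-years."""
    return max(range(max_delay + 1),
               key=lambda d: expected_years(d, m, p0, r, post_years))


# Hypothetical parameters: 1% annual mortality, 20% catastrophe risk if launched
# today, 1,000 expected years after a successful transition.
print(best_delay(m=0.01, p0=0.20, r=0.10, post_years=1000))  # safety improves 10%/year
print(best_delay(m=0.01, p0=0.20, r=0.00, post_years=1000))  # → 0 (no safety progress)
```

With no safety progress, every year of waiting only adds mortality, so the best delay is zero; once waiting buys a real reduction in catastrophe risk, a short delay becomes optimal, and it ends when the marginal safety gain falls below the marginal mortality cost.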

Temporal Discounting and the Cost of Waiting

Bostrom incorporates temporal discounting (ρ), a well-studied principle in decision theory. Humans systematically value present outcomes more than future ones, which is one reason we stay in unsatisfying jobs, relationships, and patterns: the effort of change feels large, and the reward feels distant.

But here an interesting inversion occurs. If life after AGI is not merely longer but dramatically better, with radical improvements in health, cognitive capacity, and quality of life, then temporal discounting actually punishes waiting. Every year of delay is a year spent in a qualitatively worse condition when a far superior state is accessible.
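The inversion can be checked numerically. The sketch assumes, purely for illustration, that quality of life doubles after the transition (from 1.0 to 2.0 per year), discounts year t by exp(-ρt), and ignores mortality and catastrophe risk. It reports what share of the best achievable discounted value a ten-year delay forfeits, with and without discounting. The horizon and quality levels are hypothetical, not Bostrom’s calibration.

```python
import math


def discounted_value(launch_year: int, rho: float, q_before: float = 1.0,
                     q_after: float = 2.0, horizon: int = 1000) -> float:
    """Sum of quality-weighted years, each discounted by exp(-rho * t)."""
    total = 0.0
    for t in range(horizon):
        quality = q_before if t < launch_year else q_after
        total += quality * math.exp(-rho * t)
    return total


def share_lost(delay: int, rho: float) -> float:
    """Fraction of the launch-now value forfeited by waiting `delay` years."""
    return 1.0 - discounted_value(delay, rho) / discounted_value(0, rho)


for rho in (0.0, 0.03):
    print(f"rho={rho}: a 10-year delay forfeits {share_lost(10, rho):.1%}")
```

Without discounting, the delay costs about 0.5% of the total over a 1,000-year horizon; at ρ = 0.03 it costs roughly 13%, because discounting concentrates value in the years closest to the present, which are exactly the years a delay spends in the worse state.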

Quality of Life and Risk Aversion in AGI Deployment

Bostrom’s model does not consider longevity alone; it also incorporates substantial improvements in well-being. If quality of life doubles after the transition to superintelligence, the balance shifts decisively toward earlier deployment. He then layers in risk aversion through constant relative and constant absolute risk aversion utility functions (CRRA and CARA), acknowledging that the more sensitive we are to extreme losses, the narrower the window in which “launch now” remains advisable and the longer the optimal delay.
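The effect of risk aversion can be illustrated with certainty equivalents: the sure number of life-years a person would accept in place of a gamble. The sketch compares a hypothetical “launch now” gamble (80% chance of 1,000 further years, 20% chance of catastrophe and zero years) with a hypothetical safe alternative of 50 years. The CRRA and CARA forms are the textbook utility functions; the numbers are illustrative assumptions, not values from the paper.

```python
import math

# A lottery is a list of (probability, life-years) pairs.
Lottery = list[tuple[float, float]]


def ce_crra(lottery: Lottery, eta: float) -> float:
    """Certainty equivalent under CRRA utility u(x) = x**(1 - eta) / (1 - eta).

    Restricted to 0 <= eta < 1 so that a zero-year outcome has finite utility.
    """
    if not 0.0 <= eta < 1.0:
        raise ValueError("this sketch requires 0 <= eta < 1")
    k = 1.0 - eta
    expected_utility = sum(p * x ** k / k for p, x in lottery)
    return (expected_utility * k) ** (1.0 / k)


def ce_cara(lottery: Lottery, alpha: float) -> float:
    """Certainty equivalent under CARA utility u(x) = (1 - exp(-alpha * x)) / alpha."""
    if alpha <= 0.0:
        raise ValueError("alpha must be positive")
    return -math.log(sum(p * math.exp(-alpha * x) for p, x in lottery)) / alpha


launch_now: Lottery = [(0.8, 1000.0), (0.2, 0.0)]
safe_years = 50.0  # hypothetical sure alternative

for eta in (0.0, 0.5, 0.95):
    ce = ce_crra(launch_now, eta)
    print(f"CRRA eta={eta}: CE={ce:.1f} -> {'launch' if ce > safe_years else 'wait'}")
for alpha in (0.01, 0.1):
    ce = ce_cara(launch_now, alpha)
    print(f"CARA alpha={alpha}: CE={ce:.1f} -> {'launch' if ce > safe_years else 'wait'}")
```

In this toy setup, a risk-neutral agent (η = 0, certainty equivalent 800 years) and a mildly risk-averse one (η = 0.5, 640 years) still prefer to launch, while strong aversion (η = 0.95, about 12 years, or α = 0.1 under CARA, about 16 years) flips the decision to waiting, mirroring the narrowing window described above.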

This is not reckless accelerationism. It is calibrated decision-making under uncertainty, the kind of analysis that should inform how we govern the path to artificial general intelligence.

Two-Phase Deployment: Swift to Harbor, Slow to Berth

One of the paper’s strongest contributions is its division of the AGI transition into two distinct phases:

Phase 1: Reaching AGI capability. Move as quickly as is responsible toward building a system that demonstrates general intelligence.

Phase 2: A strategic pause before full deployment. Once the system exists, introduce a controlled delay to study it, test it under real conditions, and solve technical safety problems that were previously only theoretical.

Bostrom’s hypothesis is that once an AGI system actually exists, a “safety windfall” occurs. Researchers can observe real behavior rather than speculate about it. Safety progress accelerates dramatically because the problems become empirical rather than abstract. The motto he coins: swift to harbor, slow to berth.
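“Swift to harbor, slow to berth” can be expressed as a small optimization over two kinds of delay. In the sketch below, a toy model rather than Bostrom’s formulation, each extra year spent before AGI exists (`pre_years`) cuts catastrophe risk by a small fraction `r_pre`, while each year of pause after AGI exists (`pause_years`) cuts it by a larger fraction `r_post`, standing in for the hypothesized safety windfall. All parameter values are hypothetical.

```python
from itertools import product


def two_phase_years(pre_years: int, pause_years: int, m: float, p0: float,
                    r_pre: float, r_post: float, post_years: float) -> float:
    """Expected further life-years for a person alive today under a two-phase plan."""
    wait = pre_years + pause_years
    waiting = sum((1 - m) ** t for t in range(1, wait + 1))
    # Pre-AGI years buy little safety; post-AGI pause years buy much more.
    risk = p0 * (1 - r_pre) ** pre_years * (1 - r_post) ** pause_years
    return waiting + (1 - m) ** wait * (1 - risk) * post_years


def best_plan(m=0.01, p0=0.20, r_pre=0.005, r_post=0.15, post_years=1000.0,
              max_pre=30, max_pause=60):
    """Grid-search (pre_years, pause_years) for the highest expected life-years."""
    return max(product(range(max_pre + 1), range(max_pause + 1)),
               key=lambda plan: two_phase_years(*plan, m, p0, r_pre, r_post, post_years))


pre, pause = best_plan()
print(f"extra delay before AGI exists: {pre} years; pause after it exists: {pause} years")
```

Under these assumptions the search returns no extra pre-AGI delay and a pause of several years once the system exists: a year of waiting costs the same mortality in either phase, so it is best spent where it buys the most safety.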

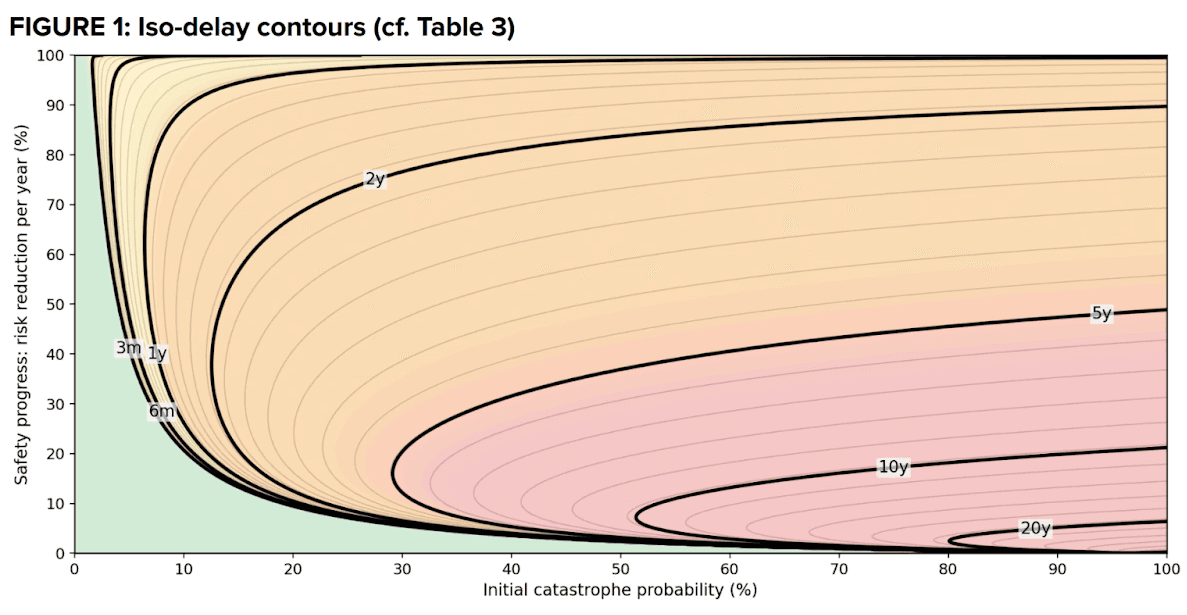

Figure 1: Iso-delay contours. Source: Bostrom (2026), nickbostrom.com/optimal.pdf

Who Benefits Most from an Earlier Transition to Superintelligence?

Bostrom does not treat optimal timing as universal. Older people, the seriously ill, and those living in precarious conditions have fewer expected years remaining. For them, the potential benefit of a rapid transition to superintelligence is far greater. Younger people with decades ahead can tolerate more waiting.
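The age effect falls out of the same kind of toy calculation once mortality rises with age. The sketch below, an illustration rather than Bostrom’s model, uses a Gompertz hazard with assumed parameters (`a = 5e-5`, `b = 0.085` per year), a 20% catastrophe risk at launch that falls 10% per year of delay, and 1,000 expected years after a successful transition, then finds the best whole-year delay for people of different ages.

```python
import math


def annual_risk(age: float, a: float = 5e-5, b: float = 0.085) -> float:
    """Hypothetical Gompertz mortality: risk grows exponentially with age, capped at 1."""
    return min(1.0, a * math.exp(b * age))


def expected_years(age: int, delay: int, p0: float = 0.20, r: float = 0.10,
                   post_years: float = 1000.0) -> float:
    """Expected further life-years for someone aged `age` if launch happens after `delay` years."""
    survival = 1.0
    waiting = 0.0
    for t in range(delay):
        survival *= 1.0 - annual_risk(age + t)
        waiting += survival
    catastrophe = p0 * (1 - r) ** delay
    return waiting + survival * (1 - catastrophe) * post_years


def best_delay(age: int, max_delay: int = 50) -> int:
    """Whole-year delay that maximizes this person's expected life-years."""
    return max(range(max_delay + 1), key=lambda d: expected_years(age, d))


for age in (20, 50, 80):
    print(f"age {age}: preferred delay {best_delay(age)} years")
```

With these inputs the preferred delay shrinks steadily with age and reaches zero for the 80-year-old, whose annual risk of dying already exceeds what a year of extra safety work would buy.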

If you apply a prioritarian logic, giving greater weight to those who are worse off, the optimal timeline shifts forward. Bostrom also explicitly rejects the common assumption that beyond a certain age, additional life adds no value. That judgment, he argues, is rooted in our experience of current aging and deterioration. It does not account for a scenario of genuine rejuvenation, one of the central promises of a superintelligent future.
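A prioritarian weighting can be sketched by applying a concave transform to each cohort’s expected outcome before summing. The two cohorts and their expected remaining life-years under an “early” and a “late” transition are invented for illustration, and transforming expected life-years (rather than a fuller measure of well-being) is a simplifying assumption. Setting `gamma = 1` recovers a plain utilitarian sum; smaller values give more weight to whoever is worse off.

```python
def welfare(outcomes: dict[str, float], gamma: float) -> float:
    """Sum of outcome**gamma over cohorts; gamma < 1 favors the worse off."""
    return sum(years ** gamma for years in outcomes.values())


# Hypothetical expected remaining life-years per person in each cohort.
policies = {
    "early": {"older": 300.0, "younger": 700.0},
    "late": {"older": 100.0, "younger": 950.0},
}


def preferred(gamma: float) -> str:
    """Name of the policy with the highest weighted welfare."""
    return max(policies, key=lambda name: welfare(policies[name], gamma))


print(preferred(1.0))  # → late (1,050 vs 1,000 total years)
print(preferred(0.5))  # → early (older cohort's gains count for more)
```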

Institutional Risks: Why AI Governance Infrastructure Matters

In the final sections of his paper, Bostrom raises critical institutional warnings. The most reasonable scenario, he suggests, is one in which the technological leader uses its advantage to prioritize safety. But he also flags the dangers of national moratoria, international prohibitions, and the competitive dynamics that arise when multiple actors race toward AGI under geopolitical pressure.

His analysis implicitly assumes an ecosystem where computational power tends to concentrate. In such an environment, the risks compound: militarization of compute resources, compute overhang (massive reserves ready to be activated under competitive pressure), and the perverse incentives of extreme centralization. These are not abstract concerns. The current trajectory of AI development, dominated by a handful of hyperscale cloud providers and corporate laboratories, creates precisely this concentration.
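Concentration of compute can be quantified. The sketch below applies two standard measures, the Herfindahl-Hirschman Index (the sum of squared shares) and the smallest number of actors that together control a majority of compute, to two invented distributions: four large providers versus a thousand equal nodes. The figures are hypothetical and describe no real provider or network.

```python
def hhi(compute: list[int]) -> float:
    """Herfindahl-Hirschman Index on a 0-1 scale: sum of squared shares."""
    total = sum(compute)
    return sum((c / total) ** 2 for c in compute)


def actors_for_majority(compute: list[int]) -> int:
    """Fewest actors whose combined compute exceeds half the total."""
    total = sum(compute)
    running = 0
    for count, c in enumerate(sorted(compute, reverse=True), start=1):
        running += c
        if 2 * running > total:  # integer comparison avoids rounding issues
            return count
    raise ValueError("compute list must contain a positive total")  # empty or all-zero input


centralized = [40, 30, 20, 10]  # hypothetical compute units for four providers
distributed = [1] * 1000        # hypothetical: 1,000 equal nodes

for name, dist in (("centralized", centralized), ("distributed", distributed)):
    print(name, round(hhi(dist), 3), actors_for_majority(dist))
```

In the centralized case two actors already hold a majority; in the distributed case it takes 501, which is the structural property the following sections appeal to.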

Implications for Qubic: Why Decentralized AI Infrastructure Reduces Existential Risk

If we take Bostrom’s framework seriously, the foundational question shifts from “when to launch AGI” to what kind of infrastructure reduces the risks associated with that launch. This is where Qubic’s architecture becomes directly relevant to the global conversation about superintelligence safety.

The Centralization Problem in Current AI Development

If superintelligence is built on centralized infrastructure that depends on enormous data centers, opaque training pipelines, and corporate control, the risk profile expands beyond the purely technical. It becomes geopolitical. Concentration of compute makes the kind of adaptive governance Bostrom considers essential during the critical pre-deployment phase far more difficult. It also creates exactly the type of compute overhang he warns about.

How Qubic’s Distributed Compute Architecture Addresses These Risks

Qubic dilutes that structural bottleneck. Its architecture distributes computational power across a global network rather than concentrating it in a single node. Qubic does not depend on an LLM-type architecture trained opaquely in mega data centers. Instead, it leverages Useful Proof of Work (uPoW), where miners contribute real computation to the training of its AI core, Aigarth, rather than solving arbitrary hash puzzles.

This design choice has direct implications for Bostrom’s analysis. A less centralized infrastructure reduces the probability of the abrupt, competitive deployment scenarios he warns against. Distributed compute means power is not located in a single facility that can be militarily captured, nor in a corporate laboratory under unilateral control. That structural resilience expands the space for Bostrom’s Phase 2: the strategic pause where real testing, incremental improvement, and adaptive governance can occur before full deployment.

For a deeper understanding of how Qubic’s approach to AI differs from mainstream models, explore “Neuraxon: Qubic’s Big Leap Toward Living, Learning AI” and the recent analysis “That Static AI Is a Dead End. Google Confirms.” These posts illustrate how Qubic is building intelligence through a fundamentally different paradigm: one designed for continuous learning, distributed resilience, and real-world adaptation on a decentralized network.

Decentralized AI and Blockchain: Structural Alignment with AGI Safety

Viewed through Bostrom’s framework, Qubic’s potential does not lie simply in being “decentralized” as a branding exercise. It lies in modifying the structural variables that determine the optimal timing of superintelligence deployment. By distributing compute, by building consensus protocols that align miner incentives with genuine AI training, and by making the entire process open-source and auditable, Qubic aims to create the kind of infrastructure that could make the transition to AGI structurally safer.

If you’re interested in how Qubic’s CPU mining model and distributed compute network are evolving, the Dogecoin Mining on Qubic deep dive explains the latest expansion of Useful Proof of Work, and Qubic’s 2026 Vision details the broader infrastructure roadmap now underway.

The Hardest Problem: Building AGI That Learns from the World

Imagining utopian and dystopian scenarios is valuable. It is, in fact, the best path to creating futures aligned with human needs and values. Looking away, waiting aimlessly, or accelerating without restraint, by contrast, are no substitute for that reflection.

Perhaps the most difficult challenge right now is not weighing and modeling the risks of accelerating the transition, but building a general artificial intelligence capable of learning by itself from diverse, dynamic environments, creating representations of the world, and acting within it. That is precisely the challenge Qubic’s Neuraxon framework is designed to address: not by training on static datasets behind closed doors, but by evolving in the open, learning from real-world complexity on a decentralized network anyone can participate in.

References and Sources

1. Bostrom, N. (2026). Optimal Timing for Superintelligence: Mundane Considerations for Existing People. Working paper, v1.0. https://nickbostrom.com/optimal.pdf

2. Bostrom, N. (2014). Superintelligence: Paths, Dangers, Strategies. Oxford University Press.

3. Bostrom, N. (2003). Astronomical Waste: The Opportunity Cost of Delayed Technological Development. Utilitas, 15(3), 308–314.

4. Yudkowsky, E. & Soares, N. (2025). If Anyone Builds It, Everyone Dies.

5. Hall, R. E. & Jones, C. I. (2007). The Value of Life and the Rise in Health Spending. Quarterly Journal of Economics, 122(1), 39–72.

6. Qubic Scientific Team. Neuraxon: Qubic’s Big Leap Toward Living, Learning AI. https://qubic.org/blog-detail/neuraxon-qubic-s-big-leap-toward-living-learning-ai

7. LessWrong community discussion: Optimal Timing for Superintelligence