QUBIC BLOG POST

Intelligence Is Not Scale: A Scientific Response to Jensen Huang's AGI Claim

Written by Qubic Scientific Team

Published: Apr 20, 2026

“I think it’s now. I think we’ve achieved AGI.” Those were the words of Jensen Huang on the Lex Fridman podcast, sending shockwaves through the AI community and reigniting the most consequential debate in artificial intelligence: has artificial general intelligence been achieved?

But Nvidia’s CEO sidestepped any rigorous explanation, research, or debate about what AGI actually means. His definition of AGI was pure hype: an AI system that can build a company worth $1 billion. Nothing more. Most AGI definitions instead refer to matching a broad range of human cognitive skills. For Jensen Huang, implicitly, intelligence equates to scale: with larger models, more parameters, more data, and more compute, systems become more capable. Under this view, intelligence is a byproduct of quantitative expansion.

The Scaling Hypothesis: Why Bigger AI Models Don’t Mean Smarter AI

We acknowledge that this approach has produced undeniable advances. Large-scale models display impressive performance across a wide range of tasks, often surpassing human benchmarks in narrow domains (Bommasani et al., 2021). However, we have repeatedly pointed out that the underlying assumption is fragile: increasing capacity does not, by itself, produce generality.

The limitation is not simply practical, but structural. Scaling improves performance within known distributions, but does not guarantee coherent behavior outside them (Lake et al., 2017). It amplifies what is already present; it does not reorganize the system. As IBM’s research has emphasized, today’s LLMs still struggle with fundamental reasoning tasks: they predict, but they do not truly understand.

As a result, these systems often exhibit a familiar pattern: strong local competence combined with global inconsistency. They can solve complex problems, yet fail at simple ones. They can generalize in some contexts, yet collapse in others. The issue is not a lack of capability, but a lack of integration. This is precisely why the AGI scaling debate has intensified in 2026: computation is physical, and scaling has hit diminishing returns.
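
The claim that scaling improves performance within known distributions but not outside them can be illustrated with a deliberately simple toy. The sketch below is an assumption-laden illustration, not a model of how LLMs work: the nearest-neighbor "memorizer", the target function, and every name in it are hypothetical. Adding more memorized samples stands in for scale.

```python
def target(x: float) -> float:
    """Hypothetical ground-truth function the toy model is asked to learn."""
    return x * x


def fit_nearest_neighbor(n_samples: int):
    """Memorize n_samples evenly spaced points on [0, 1], the 'known distribution'."""
    xs = [i / (n_samples - 1) for i in range(n_samples)]
    ys = [target(x) for x in xs]

    def predict(x: float) -> float:
        # Answer with the stored output of the closest memorized input.
        nearest = min(range(n_samples), key=lambda i: abs(xs[i] - x))
        return ys[nearest]

    return predict


def mean_abs_error(predict, points) -> float:
    """Average absolute gap between prediction and ground truth."""
    return sum(abs(predict(x) - target(x)) for x in points) / len(points)


# Evaluation points: inside the training range, and well outside it.
IN_DISTRIBUTION = [i / 200 for i in range(201)]            # x in [0, 1]
OUT_OF_DISTRIBUTION = [2.0 + i / 200 for i in range(201)]  # x in [2, 3]


def scaling_report(sizes=(5, 50, 500)):
    """Return (n_samples, in-distribution error, out-of-distribution error) rows."""
    rows = []
    for n in sizes:
        model = fit_nearest_neighbor(n)
        rows.append((
            n,
            mean_abs_error(model, IN_DISTRIBUTION),
            mean_abs_error(model, OUT_OF_DISTRIBUTION),
        ))
    return rows


if __name__ == "__main__":
    for n, err_in, err_out in scaling_report():
        print(f"samples={n:4d}  in-dist error={err_in:.5f}  out-of-dist error={err_out:.5f}")
```

In this toy, the in-distribution error shrinks as the sample count grows, while the out-of-distribution error does not move at all: more of the same data amplifies what is already present without reorganizing anything.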

Google DeepMind’s Cognitive Framework for Measuring AGI Progress

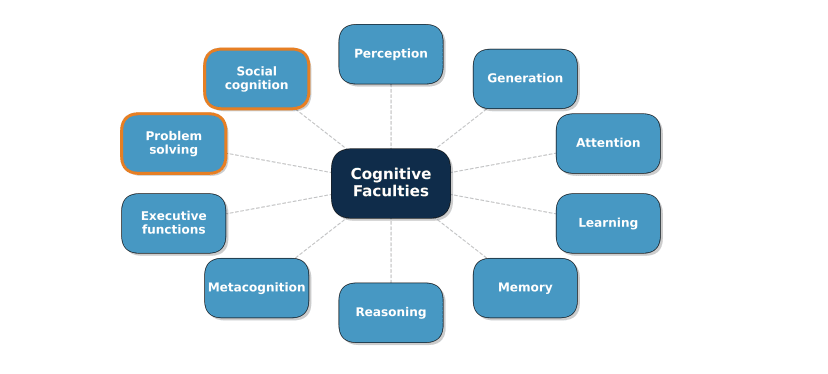

A second position, articulated in recent frameworks by Google DeepMind, defines intelligence as a multidimensional construct composed of cognitive faculties such as perception, memory, learning, reasoning, and metacognition. This is a clear improvement.

Under this view, progress toward AGI can be measured by evaluating systems across a battery of tasks designed to probe each of these faculties (Burnell et al., 2026). But how are those tasks designed? Are we training AIs on the very questions and answers they will face in the probes?

Source: Burnell, R. et al. (2026). Measuring Progress Toward AGI: A Cognitive Framework. Google DeepMind.

At least this approach acknowledges that intelligence is not a single scalar quantity, but a complex set of interacting abilities, grounded in decades of work in cognitive science (Carroll, 1993; Cattell, 1963).

Why Cognitive Profiles Alone Cannot Define Artificial General Intelligence

However, the limitation lies in how these faculties are treated. Although the framework recognizes their interaction, it ultimately evaluates them as separable components, building a “cognitive profile” of strengths and weaknesses.

This introduces a critical and surprising distortion.

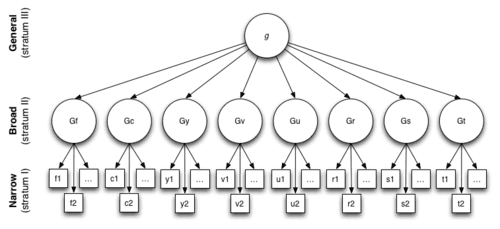

Intelligence is not the sum of faculties. It is what emerges when those faculties are organized under a unified dynamic. In fact, the g factor, as we explained in our first foundational scientific paper, shows a clear hierarchy: components organize in layers.

Source: Sanchez, J. & Vivancos, D. (2024). Qubic AGI Journey: Human and Artificial Intelligence: Toward an AGI with Aigarth.

A system can score highly across multiple domains and still fail to behave intelligently in a general sense, not because it lacks capabilities, but because those capabilities are not coherently integrated. The DeepMind framework explicitly avoids specifying how these processes are implemented, focusing instead on what the system can do. This makes it useful as a benchmarking tool, but insufficient as a theory of intelligence. AI companies seem to forget what we have known about intelligence for a century: what it is, how to measure it, and what its components, domains, and interactions are.

The Weakest Link Problem: Why Average AI Performance Hides Critical Failures

The key issue is that performance is being measured, but organization is not.

And this leads to a deeper problem: a system is only as strong as its weakest link. A system can perform well on average while still failing systematically in specific dimensions such as context maintenance or stability. These failures are not marginal. They define the system.

A system that reasons but cannot maintain context, that learns but cannot transfer, that generates but cannot validate, is not partially intelligent. It is structurally limited. And this limitation does not appear in averaged profiles, because averaging masks the point of failure.
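
A minimal sketch of how averaging can mask the point of failure follows. The two profiles, the faculty names, and the scores are invented for illustration, and taking the minimum as a "weakest link" score is one possible aggregation chosen here as an assumption, not a method proposed by any of the frameworks discussed.

```python
from statistics import mean

# Hypothetical scores in [0, 1]; every number below is made up for illustration.
profile_a = {
    "perception": 0.95,
    "learning": 0.95,
    "reasoning": 0.95,
    "metacognition": 0.95,
    "context_maintenance": 0.20,  # one systematic failure
}
profile_b = {
    "perception": 0.78,
    "learning": 0.78,
    "reasoning": 0.78,
    "metacognition": 0.78,
    "context_maintenance": 0.78,  # no standout strength, no collapse
}


def average_score(profile: dict) -> float:
    """Averaged profile: the figure a summary benchmark would report."""
    return mean(profile.values())


def weakest_link(profile: dict) -> tuple:
    """Return the lowest-scoring faculty and its score."""
    name = min(profile, key=profile.get)
    return name, profile[name]


if __name__ == "__main__":
    for label, profile in (("A", profile_a), ("B", profile_b)):
        faculty, score = weakest_link(profile)
        print(f"system {label}: average={average_score(profile):.2f}, "
              f"weakest={faculty} ({score:.2f})")
```

Ranked by average, system A comes out ahead of system B. Ranked by its weakest faculty, A falls far behind, which is the failure the averaged profile hides.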

In real intelligence, there is no tolerance for internal discontinuity. The moment one component fails to integrate with the others, behavior ceases to be general and becomes local (Kovacs & Conway, 2016).

This is precisely the pattern observed in current AI systems: highly developed capabilities that are weakly coupled. As explored in our deep comparison of biological and artificial neural networks, the gap between pattern recognition and genuine cognitive integration remains vast.

Qubic’s Approach: Intelligence as Adaptive Organization Under Uncertainty

For Qubic/Aigarth/Neuraxon, intelligence is not defined by the number of capabilities a system has, nor by how well it performs on predefined tasks, but by how it behaves when it does not already know what to do. That is the essence of intelligence: what you do when you don’t know what to do.

In this sense, intelligence is fundamentally an adaptive process under uncertainty (Bereiter, 1995). This view aligns with classical definitions, where intelligence is understood as the capacity to solve novel problems, build internal models, and act upon them (Goertzel & Pennachin, 2007). But it extends them by emphasizing the substrate in which these processes occur.

Biological Evidence: The G Factor, Brain Networks, and Cognitive Integration

From this perspective, intelligence emerges from the organization of the system, not from its components. Biological evidence supports this shift. The general intelligence factor (g) is not explained by isolated cognitive modules, but by the efficiency and integration of large-scale brain networks (Jung & Haier, 2007; Basten et al., 2015). Intelligence correlates more strongly with patterns of connectivity and coordinated activity than with the performance of individual regions.
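
To make "efficiency and integration" of a network concrete, the sketch below computes global efficiency, a standard graph measure defined as the mean of 1/d(i, j) over all node pairs, where d is the shortest-path length. This is an assumption made for illustration: the text above does not say which metric the cited studies use, and the two six-node graphs are invented toys, not brain data.

```python
from collections import deque


def shortest_path_lengths(graph: dict, source) -> dict:
    """Breadth-first search: hop counts from source to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbor in graph[node]:
            if neighbor not in dist:
                dist[neighbor] = dist[node] + 1
                queue.append(neighbor)
    return dist


def global_efficiency(graph: dict) -> float:
    """Mean of 1/d(i, j) over ordered node pairs; unreachable pairs contribute 0."""
    nodes = list(graph)
    n = len(nodes)
    if n < 2:
        return 0.0
    total = 0.0
    for source in nodes:
        dist = shortest_path_lengths(graph, source)
        total += sum(1.0 / d for target, d in dist.items() if target != source)
    return total / (n * (n - 1))


# Two tightly connected modules with no link between them (hypothetical).
isolated_modules = {
    "a": ["b", "c"], "b": ["a", "c"], "c": ["a", "b"],
    "d": ["e", "f"], "e": ["d", "f"], "f": ["d", "e"],
}

# The same modules joined by a single bridging connection between c and d.
integrated = {
    "a": ["b", "c"], "b": ["a", "c"], "c": ["a", "b", "d"],
    "d": ["c", "e", "f"], "e": ["d", "f"], "f": ["d", "e"],
}

if __name__ == "__main__":
    print("isolated modules:", round(global_efficiency(isolated_modules), 3))
    print("integrated:      ", round(global_efficiency(integrated), 3))
```

Each module is identical in both graphs, so any per-module measure would score them the same. Only a measure of the whole network registers the difference a single integrating connection makes.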

Our research on the fruit fly connectome further reinforces this principle: even in one of the few complete brain maps produced so far, intelligence begins with architecture. The connectome of Drosophila suggests that part of intelligence may reside in structure even before learning occurs.

Aigarth and Multi-Neuraxon: Brain-Inspired AI Architecture for True AGI

Architectures such as Aigarth and Multi-Neuraxon attempt to operationalize this idea. Instead of maximizing scale or enumerating capabilities, they focus on how multiple interacting units (Spheres, oscillatory channels, and dynamic gating mechanisms) can produce coherent behavior across contexts (Sanchez & Vivancos, 2024).

In these systems, intelligence is not predefined. It is not encoded in modules or evaluated as a checklist of abilities. It emerges from the interaction between components that are themselves adaptive, temporally structured, and mutually constrained. As we explore in the Neuraxon Intelligence Academy, these networks incorporate neuromodulation, multi-timescale plasticity, and astrocytic gating, principles drawn directly from neuroscience, to create systems with an internal ecology rather than mere computational power.

Importantly, this approach directly addresses the problem ignored by the other two: integration. The question of AI consciousness vs. intelligence further illuminates this distinction: a system that integrates multiple scales, maintains dynamic stability, and evolves without losing coherence provides a far stronger foundation for general intelligence.

Conclusion: Why the AGI Debate Must Move Beyond Hype and Benchmarks

In an organized system, failure in one component propagates through the whole. That is why neither Jensen Huang’s economic definition nor DeepMind’s cognitive profiling captures the essence of artificial general intelligence. The path to AGI does not run through larger GPU clusters or longer checklists of cognitive abilities. It runs through a fundamental reorganization of how AI systems are built: from optimization to organization.

In practice, that means moving from optimization (LLMs) to organization (Aigarth). We strongly believe this is one of the most important shifts ahead for artificial intelligence.

Scientific References

Basten, U., Hilger, K., & Fiebach, C. J. (2015). Where smart brains are different: A quantitative meta-analysis of functional and structural brain imaging studies on intelligence. Intelligence, 51, 10–27. https://doi.org/10.1016/j.intell.2015.04.009

Bereiter, C. (1995). A dispositional view of transfer. Teaching for Transfer: Fostering Generalization in Learning, 21–34.

Bommasani, R., Hudson, D. A., Adeli, E., et al. (2021). On the opportunities and risks of foundation models. arXiv preprint arXiv:2108.07258. https://arxiv.org/abs/2108.07258

Burnell, R., Yamamori, Y., Firat, O., et al. (2026). Measuring Progress Toward AGI: A Cognitive Framework. Google DeepMind.

Carroll, J. B. (1993). Human cognitive abilities: A survey of factor-analytic studies. Cambridge University Press. https://doi.org/10.1017/CBO9780511571312

Cattell, R. B. (1963). Theory of fluid and crystallized intelligence: A critical experiment. Journal of Educational Psychology, 54(1), 1–22.

Goertzel, B., & Pennachin, C. (2007). Artificial General Intelligence. Springer.

Jung, R. E., & Haier, R. J. (2007). The Parieto-Frontal Integration Theory (P-FIT) of intelligence. Behavioral and Brain Sciences, 30(2), 135–154. https://doi.org/10.1017/S0140525X07001185

Kovacs, K., & Conway, A. R. A. (2016). Process overlap theory: A unified account of the general factor of intelligence. Psychological Inquiry, 27(3), 151–177. https://doi.org/10.1080/1047840X.2016.1153946

Lake, B. M., Ullman, T. D., Tenenbaum, J. B., & Gershman, S. J. (2017). Building machines that learn and think like people. Behavioral and Brain Sciences, 40, e253. https://doi.org/10.1017/S0140525X16001837

Sanchez, J., & Vivancos, D. (2024). Qubic AGI Journey: Human and Artificial Intelligence: Toward an AGI with Aigarth. Preprint. ResearchGate.