QUBIC BLOG POST

Neuraxon and the Next Phase of Machine Intelligence

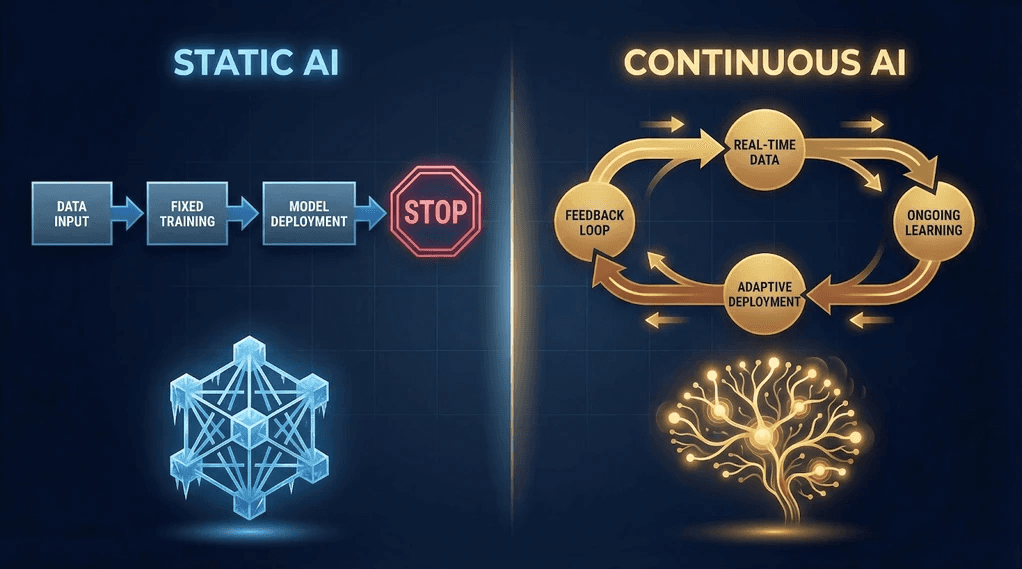

Today's machine learning is remarkably good at understanding speech, recognizing objects, and forecasting outcomes. Models now draft text, write code snippets, detect faces, and help scientists analyze data. Yet even with this progress, today's systems share a structural limit: they do not learn continuously. What they know stays fixed after training instead of growing on its own, so improvement comes from periodic retraining rather than emerging from use.

A different class of architectures is emerging to address this limit. These systems aim to do more than process information: they aim to keep adapting as conditions change. Neuraxon is one such proposal, built around continuous learning instead of one-time training.

To see why this matters, start with a distinction in how systems compute: some only produce outputs, while others build up understanding over time. That distinction matters more than it first appears.

The Core Limitation of Current AI Models

Most of today's AI tools are built on neural networks whose parameters are adjusted over massive datasets during training. Once training ends, those parameters are frozen. From then on the model only runs inference: it makes predictions using the patterns it learned during training.

This setup handles tasks such as classification, translation, and generation effectively. Still, it comes with built-in limits.

Learning Is Episodic

The model picks up patterns only during training. Once deployed, it shifts into a static mode with no further learning. Research published in Proceedings of the National Academy of Sciences reported that neural networks trained sequentially on multiple tasks experience severe performance degradation on the earlier tasks - a phenomenon known as catastrophic forgetting.
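
The effect can be reproduced in a deliberately tiny toy, sketched below. It is not taken from the cited research: the one-parameter model, the two conflicting tasks, and the names `mse` and `train` are all assumptions made for illustration. The model is trained on task A, then on task B with no replay of task A, and the error on task A is measured before and after.

```python
def mse(w, data):
    """Mean squared error of the one-parameter model y = w * x."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


def train(w, data, lr=0.05, epochs=200):
    """Plain gradient descent on the squared error, one sample at a time."""
    for _ in range(epochs):
        for x, y in data:
            grad = 2 * (w * x - y) * x
            w -= lr * grad
    return w


xs = [-1.0, -0.5, 0.5, 1.0]
task_a = [(x, 2.0 * x) for x in xs]    # hypothetical task A: y = 2x
task_b = [(x, -2.0 * x) for x in xs]   # hypothetical task B: y = -2x

w = train(0.0, task_a)
loss_a_before = mse(w, task_a)         # close to zero: task A is learned

w = train(w, task_b)                   # sequential training, no replay of task A
loss_a_after = mse(w, task_a)          # large: task A has been overwritten

print(f"task A loss before: {loss_a_before:.6f}, after: {loss_a_after:.3f}")
```

Because both tasks compete for the same single weight, learning B necessarily overwrites A. Real networks have far more parameters, but the same interference appears whenever new gradients move weights that an earlier task relied on.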

Memory Is Simulated

A context window gives a model temporary recall, but there is no accumulated experience behind it. A recent Nature Communications study highlighted that biological synapses balance memory retention and flexibility effortlessly, while artificial networks still struggle with the extremes of catastrophic forgetting and catastrophic rigidity.

Adaptation Requires Retraining

New information has to be added offline, through retraining. There is no mechanism for on-the-fly integration.

Understanding Is Statistical

Models produce statistically likely outputs instead of building mental models. They predict rather than comprehend.

In short, today's AI does well at generating answers, yet struggles with persistent reasoning and self-improvement. Biological learning, by contrast, does not stop when a training phase ends. It keeps going. Google's own research team recently acknowledged this gap in their Nested Learning paper, confirming that current LLMs suffer from a form of digital amnesia - unable to form genuine new memories or integrate fresh experiences into their core being. For a deeper look at why static models face a dead end, read our analysis: That Static AI Is a Dead End. Google Confirms.

Intelligence as a Continuous Process

Biological minds process information differently from computers. Brains are not trained once and then left to run; they adapt nonstop. Each experience reshapes what is stored. Memory builds up gradually over years. Behavior adjusts on its own, without erasing what came before.

This points toward a different way for machines to compute, one in which the system is always ready to learn. Training never finishes. Internal processing never pauses. Inference and learning are tied together: what the system has seen changes how it decides next time. And what it has learned persists without repeated retraining.

This idea underlies what is often called stateful intelligence: a system that grows, adapts, and evolves on its own. Neuraxon belongs to this class of approaches.

What Neuraxon Proposes

Neuraxon adapts to changing conditions from moment to moment instead of predicting from fixed parameters. Rather than training once and deploying the result, it refines itself while processing data, adapting as new inputs arrive. The full theoretical foundation is laid out in the Neuraxon v2.0 research paper on ResearchGate.
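
The contrast between "train once, then deploy" and "refine while processing" can be sketched with a generic online-learning loop. This is not Neuraxon's algorithm: the function `run_stream`, the one-weight model, and the drifting data stream are assumptions chosen only to show a predict-then-update cycle next to a frozen model.

```python
def run_stream(stream, w0, lr):
    """Predict each sample, then learn from it. lr=0.0 models frozen weights."""
    w, total_err = w0, 0.0
    for x, y in stream:
        pred = w * x
        total_err += (pred - y) ** 2
        w -= lr * 2 * (pred - y) * x   # online update; changes nothing when lr == 0
    return w, total_err / len(stream)


# Hypothetical stream: the true slope drifts from 1.0 to 3.0 halfway through.
stream = [(1.0, 1.0)] * 50 + [(1.0, 3.0)] * 50

w_frozen, err_frozen = run_stream(stream, w0=1.0, lr=0.0)
w_online, err_online = run_stream(stream, w0=1.0, lr=0.1)

print(f"frozen: w={w_frozen:.3f}, mean error={err_frozen:.3f}")
print(f"online: w={w_online:.3f}, mean error={err_online:.3f}")
```

The frozen model keeps answering with the slope it was deployed with, so its error stays high after the drift. The online model pays a short adaptation cost and then tracks the new slope.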

The key change is how data is treated: not as isolated inputs, but as a sequence of moments unfolding in time.

Three key features follow from this.

Learning Across Time Periods

Experience shapes how the system behaves, not just what it stores. When something new happens, behavior changes right away, with no need to wait for retraining. This mirrors how biological neurons adapt through neuroplasticity and synaptic consolidation - protecting previously acquired knowledge while still integrating new learning.

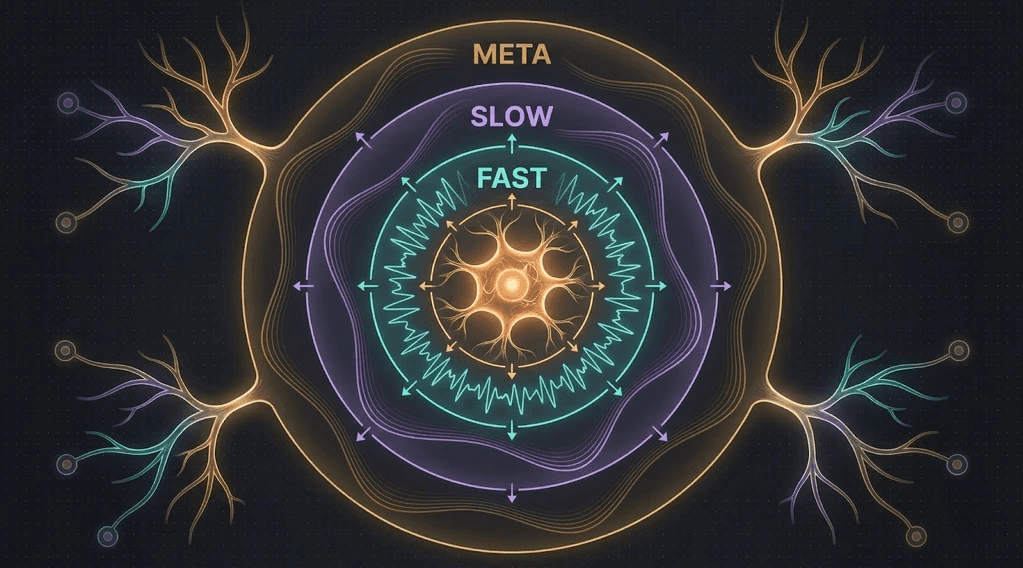

Persistent Internal State

Knowledge lives on as an evolving state inside the system. It does not shut down or reset after each interaction. Neuraxon models this through multi-timescale synaptic dynamics using three dynamic weights - fast, slow, and metabotropic - that enable memory at different temporal scales. For a more detailed look at how these neuromodulatory mechanisms work, see our earlier post on Qubic's 2026 vision for decentralized AI.
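
One way to picture memory at different temporal scales is a synapse with three components that learn and decay at different rates. The sketch below is only an illustration of that idea: the update rule, the rate values in `RATES`, the class name `MultiTimescaleSynapse`, and the choice to sum the components are all assumptions, not the equations from the Neuraxon paper.

```python
from dataclasses import dataclass

# (learning rate, decay rate) per component. Hypothetical values chosen only
# to separate the three timescales; they are not taken from the Neuraxon paper.
RATES = {"fast": (0.5, 0.30), "slow": (0.05, 0.02), "meta": (0.005, 0.001)}


@dataclass
class MultiTimescaleSynapse:
    fast: float = 0.0
    slow: float = 0.0
    meta: float = 0.0  # stands in for the metabotropic component

    def step(self, signal: float) -> None:
        """Each component gains lr * signal and leaks decay * value per step."""
        for name, (lr, decay) in RATES.items():
            value = getattr(self, name)
            setattr(self, name, value + lr * signal - decay * value)

    def effective_weight(self) -> float:
        """Sum of the components; summing is an assumption of this sketch."""
        return self.fast + self.slow + self.meta


syn = MultiTimescaleSynapse()
for _ in range(20):          # a burst of activity
    syn.step(1.0)
after_burst = (syn.fast, syn.slow, syn.meta)

for _ in range(50):          # followed by silence
    syn.step(0.0)
after_silence = (syn.fast, syn.slow, syn.meta)

# Fraction of each component that survives the silent period.
retention = [b / a for a, b in zip(after_burst, after_silence)]
print([f"{r:.3f}" for r in retention])
```

In this toy, the fast component reacts strongly to the burst and is almost gone after the silence, while the slowest component changes little but retains most of what it gained. That separation is what lets a single connection carry both short-lived and long-lived traces.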

Adaptive Reasoning

Each output depends on both prior learning and what the system has experienced while running. Intelligence unfolds over time rather than being captured in a single moment.

Neuraxon vs. Transformer-Based Systems

Transformer systems dominate today's AI landscape because they scale well and generalize to new problems. Still, at inference time their design remains feed-forward by construction.

They read context, compute, and produce output.

Though their replies feel natural, each one is rebuilt from the tokens inside a limited context window. What seems like recall is actually reconstruction. The Nested Learning research from Google describes current transformers as having only two levels of parameter updates - short-term context and fixed long-term weights - while biological brains operate across a continuous spectrum of memory timescales.

This difference has real consequences: general intelligence probably depends on continuity. Without genuine past experience to build on, an agent cannot reason well when conditions shift over time.

Learning never stops, so computation must keep going too.

Why Continuous Intelligence Demands Continuous Computation

Once a system updates its own state continuously, computation stops being something that happens now and then. It runs without pause. Intelligence becomes tied to constant activity instead of occasional training sessions.

Conventional AI infrastructure is not built for this. Most machine learning pipelines expect huge bursts of training compute, followed by long idle stretches with only occasional updates.

A machine that must think without pause has to stay active for months or years. At that point, intelligence becomes less a data problem and more a matter of building solid systems.

The Infrastructure Challenge for Persistent AI

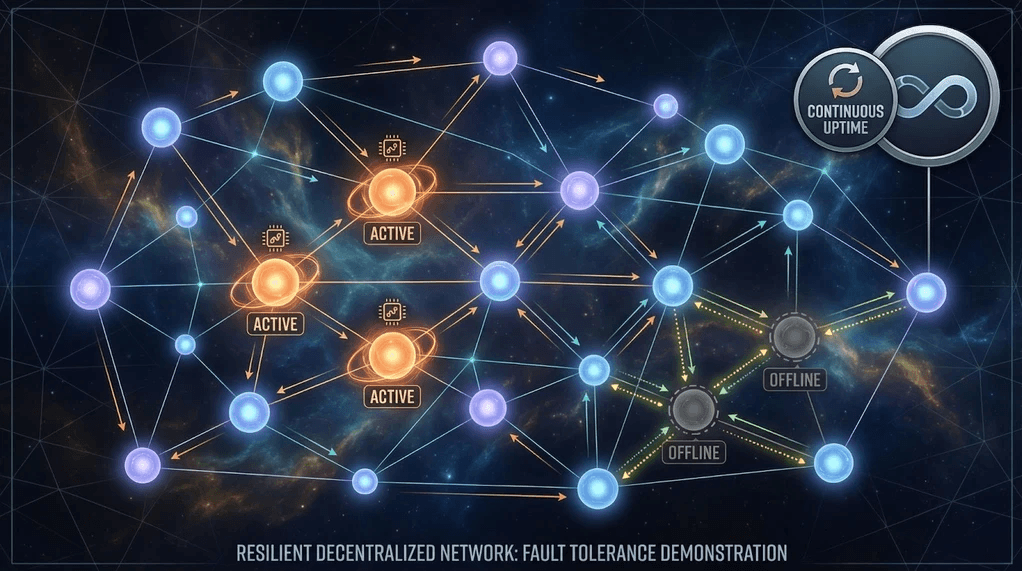

What changes with persistent AI? It needs to keep running without pause.

That raises a few problems. The system must keep going even when hardware fails. The infrastructure has to scale as capabilities accumulate. And running without pause cannot be funded by a one-time budget; the cost has to be sustainable over time.

In centralized data centers, compute is typically allocated per workload, not for ongoing cognition. Pricing follows that rhythm too. For intelligence that keeps developing, the compute layer should behave more like a mesh than a cluster of machines: less like a tool used once, more like a living system that never stops.

This is where decentralized computing starts to make sense. To understand how Qubic reimagines this compute model, read What Is UPoW and Why It Matters.

Where Decentralized Compute Becomes Relevant

In a decentralized network, when one node stops, the others keep going, so no single node has to stay online at all times. As nodes join or leave, the system as a whole keeps moving forward.

This mirrors living systems: individual parts change over time, yet the whole keeps functioning. That suits persistent AI well. The network runs without stopping and maintains shared state, so there is no need to restart and progress carries over from one task to the next.
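
A small simulation can make the churn argument concrete. The sketch below is not Qubic's protocol: the function `run_with_churn`, the versioned-copy scheme, and the round-robin outage schedule are assumptions made for illustration. Each node keeps its own copy of the state, online nodes adopt the newest copy among themselves, and one learning step is applied per tick.

```python
def run_with_churn(num_nodes, ticks, offline_schedule):
    """Advance a shared state once per tick while nodes go offline.

    offline_schedule maps tick -> set of node ids that are down at that tick.
    Each node holds a (version, state) copy. This toy assumes consecutive
    online sets overlap; otherwise the newest copy could be missed.
    """
    copies = {n: (0, 0.0) for n in range(num_nodes)}
    stalled = 0
    for t in range(ticks):
        down = offline_schedule.get(t, set())
        online = [n for n in range(num_nodes) if n not in down]
        if not online:
            stalled += 1                        # nobody available: no progress this tick
            continue
        version, state = max(copies[n] for n in online)   # newest copy wins
        new_copy = (version + 1, state + 1.0)   # stand-in for one learning step
        for n in online:
            copies[n] = new_copy
    return copies, stalled


# Hypothetical schedule: every tick, a different one of the three nodes is down.
schedule = {t: {t % 3} for t in range(9)}
copies, stalled = run_with_churn(num_nodes=3, ticks=9, offline_schedule=schedule)

print(copies, "stalled ticks:", stalled)
```

Every node is offline for a third of the run, yet the state advances on every tick, and a node that was just offline lags by only one version until it rejoins. A real network would also need to handle conflicting copies and dishonest nodes, which this sketch ignores.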

Qubic's Useful Proof of Work model offers a practical framework for this kind of infrastructure. Instead of wasting compute cycles on arbitrary puzzles, mining power gets redirected toward real-world AI training tasks - the exact kind of persistent computation that architectures like Neuraxon require.

The Role of Continuous Computation Networks

Rather than spending computation only on securing the network, Qubic proposes directing it toward useful work. A continuous AI workload runs across a distributed network, where computing effort serves not only security but also the growth of an adaptive knowledge system.

Nodes perform real computation. State evolves over time. That computation feeds into a growing intelligence. For a deeper dive into how this economic model works and why it outperforms centralized alternatives, see Qubic's Useful Proof of Work: The Future of AI Compute.

The point does not depend on one particular implementation. What matters is infrastructure built to run learning loops without pause, the kind Neuraxon calls for.

What This Means for the Future of AI

The first phase of modern AI focused on capability: building models that performed their tasks well. The next phase focuses on continuity: building systems that accumulate knowledge over time.

Intelligence does not come from training data alone; it requires ongoing experience. A system needs to stay active, interact, and adapt over time. That takes two things: an architecture that can change over time, and infrastructure that keeps running without shutting down. Both pieces matter equally.

Neuraxon addresses the architecture. Continuous compute networks address the infrastructure. Together, these ideas point toward another way to build more capable machine intelligence: sustaining intelligence rather than rebuilding it from scratch. Instead of producing it now and then, the system stays active over time. Qubic's broader Aigarth initiative represents this vision in action, combining evolutionary AI development with decentralized compute.

From Fixed Models to Dynamic Processes

Historically, AI was treated like ordinary software: trained, deployed, and eventually replaced. Continuous learning changes that. Instead of waiting for a request, the system keeps processing. Systems think, adapt, and evolve rather than just react.

This lines up with how living brains work. They are not run on demand; they simply keep functioning. As research moves toward general intelligence, the line between computing and existing fades. Machines need to do more than solve problems: they also need to accumulate memories along the way.

Architectures like Neuraxon explore the internal dynamics of such systems. Decentralized compute networks explore how those systems can keep running despite failures. Oracle Machines further extend this by connecting smart contracts to real-world data feeds, adding external context that persistent AI systems need to reason about the world beyond their own network.

Final Thoughts

One-off pushes will not move AI into its next phase; continuous learning might. When machines learn while running, they start acting less like tools and more like active participants.

Neuraxon treats intelligence as something that grows, not as a static artifact performing one job. Supporting that idea means building infrastructure meant to last, designed for persistence rather than bursts of work.

What matters most is not whether a particular implementation succeeds, but the underlying shift: from chasing faster results to keeping intelligence running. The question is no longer only how powerful models become, but how long they stay alive.

Explore Neuraxon firsthand through the open-source codebase on GitHub or try the interactive demo on Hugging Face.

Qubic is a decentralized, open-source network for experimental technology. Nothing on this site should be construed as investment, legal, or financial advice. Qubic does not offer securities, and participation in the network may involve risks. Users are responsible for complying with local regulations. Please consult legal and financial professionals before engaging with the platform.