QUBIC BLOG POST

Understanding LLMs: How Large Language Models Power Intelligent Systems

Written by

Content



Artificial intelligence is advancing fast, and large language models (LLMs) sit at the center of that progress. These systems have changed how machines understand and generate human language, powering everything from chatbots and search engines to code generation and scientific research.

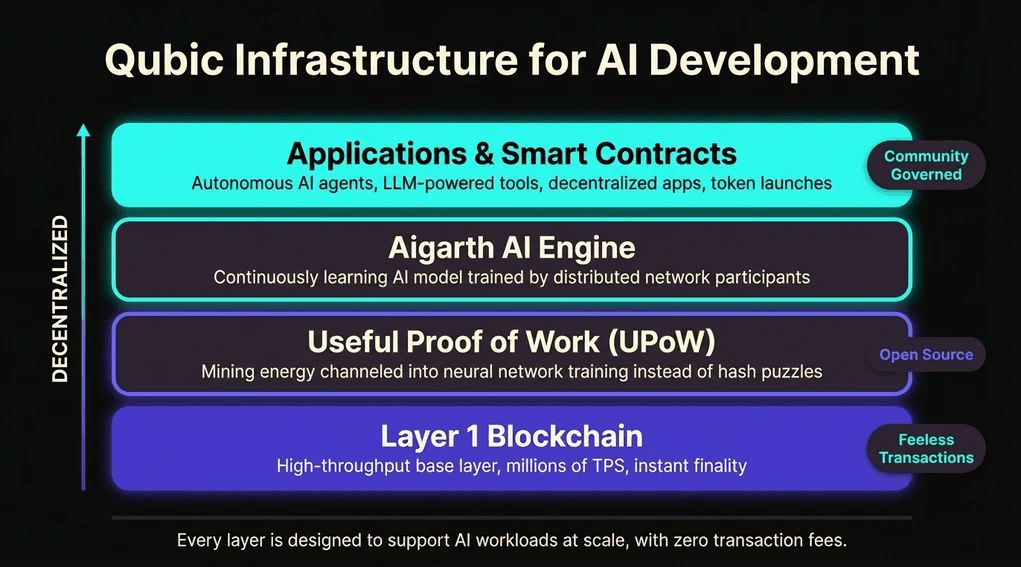

At Qubic, the focus goes beyond simply watching this evolution unfold. Qubic is actively building infrastructure that supports AI development at its foundation, shaping intelligent systems that grow steadily closer to artificial general intelligence (AGI).

This guide explains what LLMs are, how they learn, where their limitations lie, and how Qubic’s AI and blockchain architecture enables a new model of decentralized AI development using useful computation.

What is a Large Language Model (LLM)?

A large language model is an AI system built on deep learning and trained on vast quantities of written text. These models process billions, sometimes trillions, of tokens (words and word fragments) drawn from books, articles, websites, and conversation logs. Through this extensive exposure, they learn grammar, sentence structure, contextual meaning, and the statistical relationships between words.

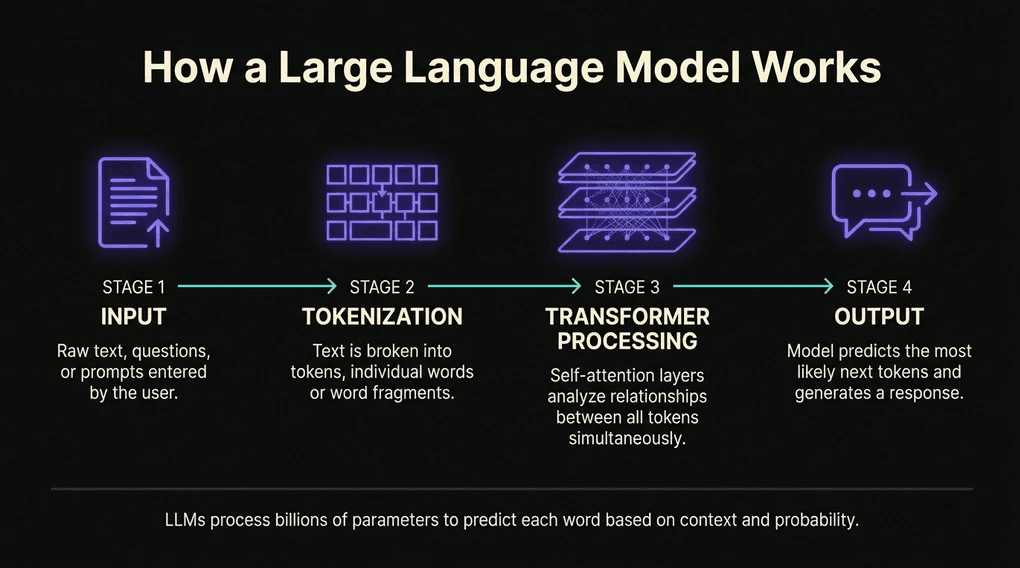

At their core, LLMs work by predicting the next token (a word or word fragment) in a sequence, with everything earlier in the text shaping what the model generates next. According to IBM’s LLM overview, scaling this prediction task to enormous datasets unlocks a wide range of capabilities:

Answering complex questions with contextual awareness

Translating between languages with high accuracy

Writing articles, stories, and technical documentation

Sustaining coherent, multi-turn conversations

Generating and debugging code across programming languages

What sets LLMs apart from earlier AI systems is how they store and access information. Rather than looking facts up in hard-coded databases, these models encode knowledge in their learned parameters as patterns extracted from data. Words connect to ideas based on frequency and co-occurrence, learned by observing massive amounts of text. This structure allows LLMs to make connections across broad areas of knowledge and even perform tasks they were never explicitly trained on.
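The idea that word relationships are learned from frequency and co-occurrence can be illustrated with a deliberately tiny model. The sketch below is a simple bigram counter, not how a real LLM works internally: it only counts which word follows which in a made-up corpus and predicts the most frequent follower. The function names and the corpus are illustrative assumptions.

```python
from collections import Counter, defaultdict

def train_bigram_counts(text):
    """Count how often each word is followed by each other word."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        follows[current_word][next_word] += 1
    return follows

def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    candidates = follows.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]

# Made-up toy corpus; real models train on billions of tokens.
corpus = "the cat sat on the mat . the cat ate the fish . the cat slept"
model = train_bigram_counts(corpus)
print(predict_next(model, "the"))  # "cat" follows "the" most often in this corpus
```

An LLM replaces these raw counts with a neural network that conditions on the whole preceding context, but the training signal is the same: which token actually came next.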

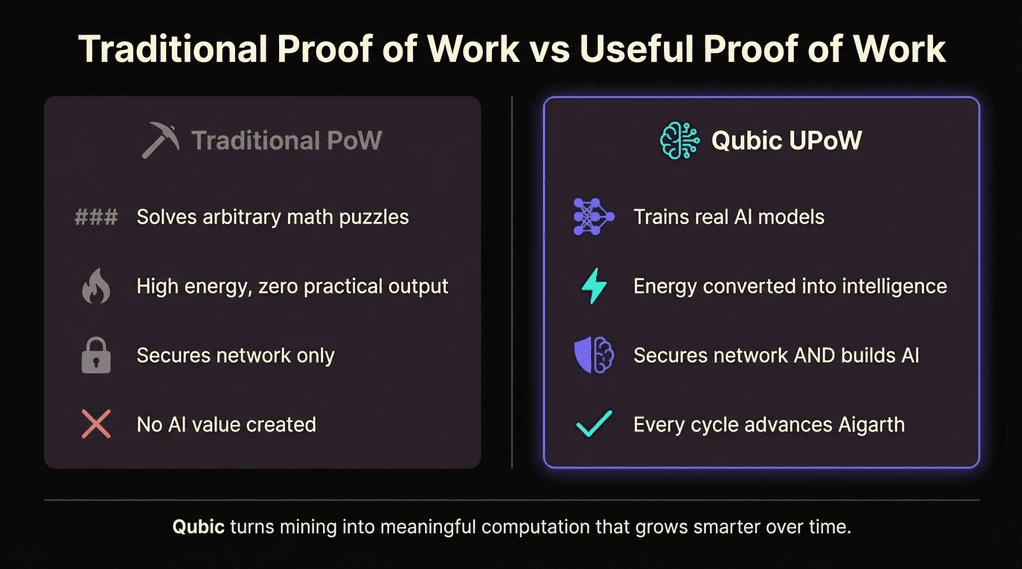

At Qubic, large language models are treated as building blocks of something much larger. Instead of traditional Proof of Work calculations, Qubic’s Useful Proof of Work (UPoW) design channels computational power toward training neural networks, so every participating device contributes to building smarter AI systems rather than solving arbitrary cryptographic puzzles.

How Large Language Models Learn and Operate

LLMs are built on deep neural networks and learn through self-supervised training, often loosely described as unsupervised: the text itself supplies the answers, so no human labeling is required. Massive text datasets drive this process. Through repeated cycles of prediction, such as filling in blanks or forecasting what comes next, the system compares its guesses with the actual text and adjusts its internal parameters to reduce the error. Over time, its predictions become more accurate and more sensitive to context.
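The cycle of predicting and then adjusting internal parameters can be sketched in a few lines. The example below is a minimal illustration, assuming a three-word vocabulary and a single fixed context whose correct next word is "mat"; the only "parameters" are three scores (logits), updated by plain gradient descent on cross-entropy loss. Real training updates billions of parameters across many contexts, and the names here are hypothetical.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def train_step(logits, target_index, learning_rate):
    """One gradient-descent step on cross-entropy loss; returns (new_logits, loss)."""
    probs = softmax(logits)
    loss = -math.log(probs[target_index])
    # Gradient of cross-entropy with respect to the logits is (probs - one_hot(target)).
    grads = [p - (1.0 if i == target_index else 0.0) for i, p in enumerate(probs)]
    new_logits = [x - learning_rate * g for x, g in zip(logits, grads)]
    return new_logits, loss

vocab = ["mat", "dog", "sky"]       # toy vocabulary (assumption)
logits = [0.0, 0.0, 0.0]            # untrained: every word equally likely
target = vocab.index("mat")         # the word that actually came next
losses = []
for _ in range(50):
    logits, loss = train_step(logits, target, learning_rate=0.5)
    losses.append(loss)

print(f"first loss {losses[0]:.3f}, last loss {losses[-1]:.3f}")
```

The loss falls as the probability assigned to the correct word rises, which is the sense in which prediction accuracy improves over repeated cycles.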

The Transformer Architecture

Modern large language models run on the transformer architecture, introduced in a landmark 2017 paper titled Attention Is All You Need. Transformers use a mechanism called self-attention to identify important relationships between words within a sequence, regardless of how far apart they appear in the text. This was a major breakthrough over earlier approaches like recurrent neural networks (RNNs), which processed words sequentially and struggled with long-range dependencies.
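To make self-attention more concrete, here is a minimal sketch of scaled dot-product attention in plain Python. It makes simplifying assumptions: the token vectors are hand-picked toy values, and they are used directly as queries, keys, and values, whereas a real transformer derives those through learned projection matrices and runs several attention heads as parallel matrix operations.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention; each argument is a list of vectors, one per token."""
    dim = len(keys[0])
    width = len(values[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this token's query to every key, scaled by sqrt(dim).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim) for k in keys]
        weights = softmax(scores)
        # Output is a weighted average of all value vectors.
        mixed = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(width)]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Toy 2-d vectors (assumption): tokens 0 and 2 are similar, token 1 is different.
embeddings = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1]]
outputs, weights = self_attention(embeddings, embeddings, embeddings)
for row in weights:
    print([round(w, 2) for w in row])
```

Token 0 puts more weight on token 2 than on its immediate neighbor, token 1. The weights depend on how similar the vectors are, not on how far apart the tokens sit in the sequence.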

The transformer also processes entire sequences in parallel, making training dramatically faster and more scalable. According to AWS’s technical explainer, this parallelization enabled researchers to train LLMs on datasets of unprecedented size, producing emergent behaviors such as few-shot learning and compositional reasoning that define today’s most capable models.

Key Capabilities Enabled by Transformers

Thanks in large part to the self-attention mechanism, modern LLMs can:

Maintain context across extended conversations

Understand nuanced, ambiguous language

Reason through multi-step problems

Adapt tone and style to fit different contexts

Transfer learning from one domain to another

From Word Prediction to Deeper Understanding

Qubic’s AI model, Aigarth, aims beyond next-word prediction toward something more ambitious. Understanding language is valuable, but genuine intelligence means solving novel problems, storing and retrieving knowledge, forming original ideas, connecting unrelated concepts, and adapting behavior over time. That broader vision, rooted in first-principles reasoning, shapes how Aigarth is built.

The Qubic Advantage: Useful Computation for AI Training

What sets Qubic apart from most blockchain platforms? Its computations serve a real purpose. Instead of burning processing power on standard puzzle-solving, the network channels that energy into training neural networks through Useful Proof of Work (UPoW). This is what makes Qubic one of the most distinctive AI blockchain projects in development today.

The shift creates a clear cause-and-effect chain:

Distributed compute: Processing power comes from many sources spread across the network

AI training: That power feeds directly into training neural network models

Network security: As intelligence grows, the network strengthens its own security

Continuous learning: Epoch after epoch, models improve without manual retraining

In traditional blockchains, mining produces cryptographic hashes that secure the network but generate no additional value. Qubic’s approach transforms CPU mining into a shared computing grid for artificial intelligence. Over time, this setup trains increasingly capable neural networks, ones able to handle questions, understand language, make decisions, and adapt their reasoning.

Instead of treating AI and blockchain consensus as separate concerns, Qubic weaves them together within a single framework. You can explore the technical details on the Qubic technology overview page.

How LLMs Compare: A Technical Overview

Not all large language models are created equal. They differ in architecture, training methodology, parameter count, and intended use. Here is how several prominent LLMs compare:

| Model | Developer | Architecture | Parameters | Key Strength |
| --- | --- | --- | --- | --- |
| GPT-4o | OpenAI | Decoder-only transformer | Undisclosed | Multimodal reasoning |
| Claude 3.5 | Anthropic | Transformer | Undisclosed | Safety, long context |
| Gemini 2.0 | Google DeepMind | Multimodal transformer | Undisclosed | Native multimodality |
| DeepSeek R1 | DeepSeek | MoE transformer | 671B | Open-weight reasoning |
| Llama 3 | Meta | Decoder-only transformer | Up to 405B | Open-source flexibility |
| Aigarth | Qubic | Decentralized neural net | Evolving | Continuous decentralized learning |

What distinguishes Aigarth from the models above is its training methodology. While every other model on this list was trained on centralized infrastructure using fixed datasets, Aigarth learns continuously through distributed compute contributed by participants across the Qubic network. The model never stops training, and its development is governed by the community rather than a single corporation.

LLMs and the Path Toward Artificial General Intelligence

Large language models demonstrate impressive language abilities. Still, they operate within clear limits. Their performance degrades quickly outside the domains covered by their training data. They lack persistent memory and any direct connection to physical reality. Their reasoning remains confined to patterns extracted from text, rather than the flexible, goal-directed thinking that humans use naturally.

Artificial general intelligence (AGI) would look fundamentally different. An AGI system would learn rapidly, reason across domains, adapt to new situations, and handle diverse tasks without needing separate training for each one. For a deeper exploration of these distinctions, see our companion guide: What is AGI? Artificial General Intelligence Explained.

With that goal in sight, Aigarth is being designed around deeper ambitions. LLM capabilities form part of the picture, but they sit alongside other components within a broader cognitive framework meant to:

Reason through layered, multi-step ideas

Learn continuously from experience without manual retraining

Generalize knowledge across unrelated domains

Adapt behavior dynamically based on new information

| Capability | LLMs (Current) | AGI (Target) |
| --- | --- | --- |
| Learning | Fixed training on static datasets | Continuous, self-directed learning |
| Memory | Context window only, no persistence | Long-term memory with identity |
| Reasoning | Pattern-matching within training data | First principles reasoning across domains |
| Adaptation | Requires fine-tuning by engineers | Self-modifying behavior |
| Goals | Follows user prompts | Forms and pursues own objectives |
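The Memory row can be made concrete with a small sketch of a fixed-size context window. This is an illustration under stated assumptions, not any particular model's implementation: `ContextWindow` is a hypothetical class, whitespace splitting stands in for real tokenization, and the four-token limit is far smaller than real windows, which hold thousands of tokens or more.

```python
from collections import deque

class ContextWindow:
    """Keeps only the most recent `max_tokens` tokens; older ones are discarded."""

    def __init__(self, max_tokens):
        # A deque with maxlen silently drops the oldest items when it is full.
        self.tokens = deque(maxlen=max_tokens)

    def add(self, text):
        """Append text to the window, using whitespace splitting as a stand-in tokenizer."""
        self.tokens.extend(text.split())

    def visible(self):
        """Return the tokens the model could still condition on."""
        return list(self.tokens)

window = ContextWindow(max_tokens=4)
window.add("my name is Ada")
window.add("what is my name")
print(window.visible())  # the earlier sentence, including "Ada", has been pushed out
```

Once the name has left the window, nothing in the model's input refers to it anymore. That is the limitation that a long-term memory would need to address.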

Qubic’s infrastructure supports this ambition with a high-performance Layer 1 blockchain that handled millions of transactions per second during testing and offers feeless operations and instant finality. These properties allow complex AI coordination at scale, free from cost barriers. The project’s stated target is to reach AGI capabilities by 2027 through open-source development and shared compute power across global networks.

The Role of the Qubic Community in AI Development

Qubic runs on shared effort. Computors across the network validate the system’s operations while simultaneously feeding into how AI learns and evolves. Intelligence growth in Qubic does not happen in one centralized lab; it is spread across many locations worldwide.

Because control is distributed, systems stay open to scrutiny and resilient under pressure. Progress becomes something the community shapes together rather than something locked behind corporate doors.

Qubic’s governance operates through the Quorum Protocol, where decisions about resources, computing tasks, and feature development emerge through group consensus rather than top-down orders. Community members participate through AMAs, proposals, and joint development work, all shaping how the blockchain evolves alongside its AI capabilities.

Join the conversation in Qubic’s Discord community to get involved in governance discussions, research collaboration, and development efforts.

Building with Qubic for AI and LLM Applications

Built for developers creating intelligent applications, Qubic offers infrastructure designed for handling massive AI workloads. The developer documentation covers everything needed to start building on this platform.

Key infrastructure features include:

Feeless transactions: Frictionless data exchange without gas costs slowing down AI operations

Instant finality: Real-time coordination for AI agents and model synchronization

High throughput: Compute-intensive workloads run smoothly across the distributed network

Open-source transparency: Clear development processes with updates visible to everyone

This setup enables autonomous AI learning, self-managed bots, and tools powered by large language models, all operating without dependence on centralized cloud infrastructure.

Qubic also supports a new approach to token launches through smart contracts, giving developers support for building essential software components while rewarding effort across every layer of the network. Whether projects are built on top of existing smart contract structures or woven into open-source networks, Qubic serves as the base layer for adaptable AI blockchain projects and decentralized ecosystems.

The Future of LLMs and Intelligence on Qubic

Large language models have already shifted the landscape of artificial intelligence. But at Qubic, they represent a starting point, not the destination. LLMs serve as foundations for building smarter structures capable of reasoning, adapting, and growing across diverse contexts.

Qubic’s Useful Proof of Work model ensures the network doesn’t just process transactions. It continuously runs tasks that feed into learning systems. Each computational effort adds strength not only to the network itself but also to the artificial intelligence being developed within it.

As AI capability grows, decentralized networks help maintain transparency, alignment with human goals, and resilience against failure. Inside Qubic, the design ensures that knowledge moves forward in ways that are shared widely, linked openly, and visible to all participants.

A quiet shift is underway. Where artificial intelligence meets distributed ledger technology, the groundwork is being laid for something lasting. Stay current with Qubic’s AI progress on the Qubic Blog, and explore foundational concepts through the Qubic Academy.

Frequently Asked Questions About LLMs and Qubic

What is a large language model (LLM)?

A large language model is a type of artificial intelligence trained on massive amounts of text data. These systems use deep learning and the transformer architecture to predict what words come next in a sequence, enabling them to generate text, translate languages, summarize documents, answer questions, and engage in human-like conversations.

How does Qubic contribute to LLM development?

Qubic channels computational effort toward training neural networks through its Useful Proof of Work design. This effort feeds into Aigarth, Qubic’s AI model designed to advance beyond basic language processing toward broader cognitive capabilities, including natural language understanding at scale.

Are LLMs the same as artificial general intelligence?

No. LLMs are specialized tools focused on processing and generating human language. Artificial general intelligence (AGI) aims to understand, learn, and solve problems across all cognitive domains. While LLMs form important building blocks, they fall short of the flexible, self-directed reasoning that AGI would require. Learn more in our guide: What is AGI?

What makes Qubic’s AI infrastructure unique?

Qubic integrates AI training directly into its blockchain consensus mechanism. Rather than separating computation for security from computation for intelligence, the network merges them into one shared process. This means every mining operation simultaneously secures the network and trains AI models.

Why do feeless transactions matter for AI systems?

Without transaction fees, data moves more smoothly across the network. Models adjust faster, processes link together more efficiently, and complex AI operations can run for extended periods without cost barriers accumulating. This is especially critical for applications involving continuous learning and real-time AI coordination.

How do LLMs differ from traditional search engines?

Traditional search engines match keywords against indexed web pages. LLMs go further by understanding context, nuance, and intent behind queries. According to Cloudflare’s technical guide, this ability to capture deeper meaning is what makes LLMs a major step forward in natural language processing.
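The contrast can be sketched with a toy example. Keyword matching counts shared words, while meaning-based comparison measures how close two vectors are. The three-dimensional vectors below are hand-written stand-ins for learned embeddings, chosen purely for illustration; a real system would obtain them from a trained model.

```python
import math

def keyword_match(query, document):
    """Classic keyword search: count query words that appear verbatim in the document."""
    doc_words = set(document.lower().split())
    return sum(1 for word in query.lower().split() if word in doc_words)

def cosine_similarity(a, b):
    """Similarity of two vectors by angle: 1.0 means they point in the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

query = "cheap flights"
document = "low cost airline tickets"

# Hypothetical embedding vectors (assumption): similar meanings get similar vectors.
query_vec = [0.9, 0.8, 0.1]
doc_vec = [0.8, 0.9, 0.2]

print(keyword_match(query, document))                   # no words in common
print(round(cosine_similarity(query_vec, doc_vec), 2))  # vectors are nearly parallel
```

The two phrases share no keywords, yet their vectors are almost parallel. Closeness in vector space is one way "understanding intent" is approximated in practice.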

Where can I learn more about Qubic’s AI development?

Visit the Qubic Academy for structured learning resources. Follow ongoing updates about Aigarth, AI research, and network development on the Qubic Blog. For real-time discussion, join the Qubic Discord community.

Final Thoughts: Why Understanding LLMs Matters Now

Large language models represent one of the most significant breakthroughs in modern AI, but understanding their mechanics also reveals their limitations. These systems are powerful pattern recognizers, not general thinkers. They predict text brilliantly but don’t understand the world the way humans do.

That gap is exactly what Qubic is working to close. By turning blockchain infrastructure into a decentralized AI training engine, Qubic creates an environment where intelligence grows continuously, transparently, and under shared governance. LLMs are the starting point. The destination is something far more capable.

The path from large language models to artificial general intelligence is neither short nor guaranteed. But the infrastructure being built today will determine whether that path leads somewhere meaningful. Explore Qubic’s approach to decentralized AI →

Qubic is a decentralized, open-source network for experimental technology. Nothing on this site should be construed as investment, legal, or financial advice. Qubic does not offer securities, and participation in the network may involve risks. Users are responsible for complying with local regulations. Please consult legal and financial professionals before engaging with the platform.