QUBIC BLOG POST

Neuraxon: Building Brain-Inspired AI the Way Biological Neural Networks Actually Work

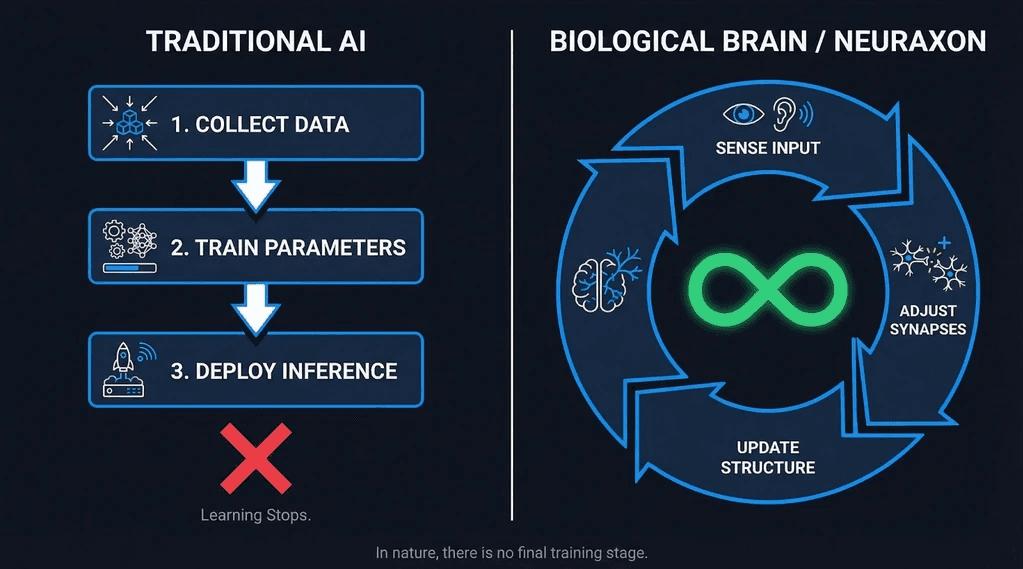

Today's machines write essays, generate code, and hold conversations. But beneath the surface, what looks like thought runs on a fundamentally different logic -- pattern matching at massive scale, not genuine continuous learning. Traditional AI freezes the moment training ends. It never truly grows.

What happens inside living brains is nothing like that. In biological systems, knowledge is not acquired once and then deployed forever. It reshapes itself continuously, in real time, with every new experience. That core difference -- how intelligence persists and adapts -- is exactly what Neuraxon is built around.

The Brain Is Not a Model - And Traditional AI Is

In conventional machine learning, intelligence is manufactured in three discrete stages:

1. Collect training data
2. Optimize model parameters
3. Deploy for inference

The moment training ends, the system stops incorporating new information. It replays learned patterns without modification, which bears little resemblance to how neuroscientists describe learning in living brains.

Living brains operate by a fundamentally different rule: learning never stops. From birth through death, sensory inputs continuously reshape neural pathways. What we know is not stored in a table -- it lives inside the physical structure of how neurons are connected. This is the basis of synaptic plasticity, the neurological mechanism that makes memory a dynamic, structural process rather than a static record.

Neuraxon's foundational premise is rooted here: intelligence must be able to shift and grow from experience, not only from scheduled updates.

Neurons, Not Tokens: How Neuraxon Handles Memory Differently

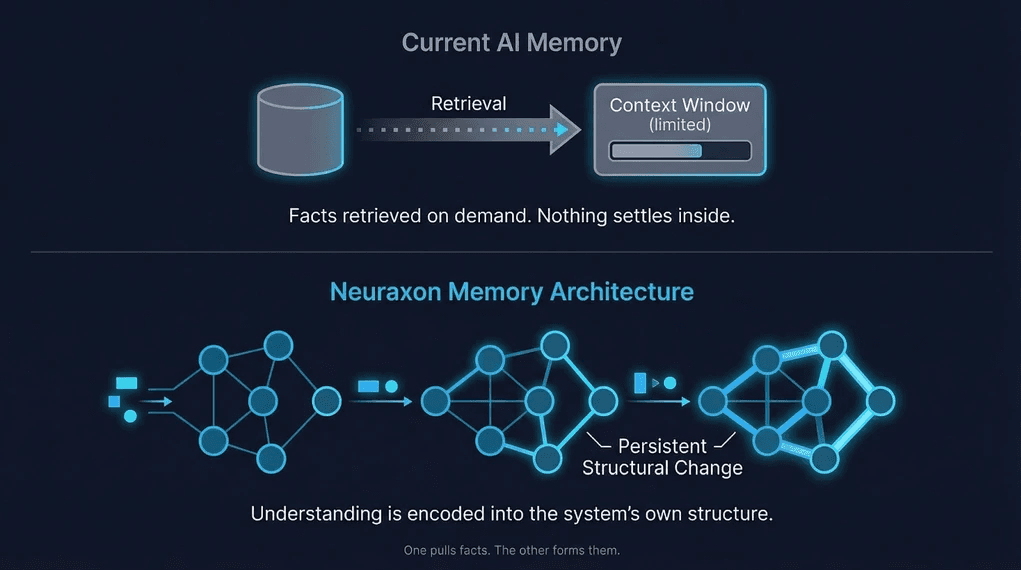

Standard large language models process input sequentially, token by token, within a context window that resets entirely after each session. Once a conversation ends, everything is gone. There is no accumulation -- only recall of what fits in the current buffer.

Biological memory does not work this way. When neurons fire together repeatedly, the synaptic connections between them strengthen -- a principle known as Hebbian learning, often summarized as "neurons that fire together, wire together." Behavior emerges from actual structural history, not from cached context.

Neuraxon applies this same logic. Rather than resetting after each interaction, the system retains what has happened -- not as stored text, but as shifts in how it structures understanding. These changes accumulate quietly, becoming part of its internal architecture. In a biologically meaningful sense, the system builds memories rather than merely accessing them.
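The Hebbian principle described above can be sketched in a few lines. This is a minimal illustration of the textbook rule (each weight grows in proportion to the product of pre- and postsynaptic activity), not Neuraxon's actual implementation. The function name `hebbian_update`, the learning rate, and the toy activity values are assumptions chosen for the example.

```python
# Illustrative sketch of a plain Hebbian update; names and values are hypothetical.

def hebbian_update(weights, pre, post, learning_rate=0.1):
    """Return new weights where weights[i][j] grows by learning_rate * post[i] * pre[j]."""
    return [
        [w + learning_rate * post_i * pre_j for w, pre_j in zip(row, pre)]
        for row, post_i in zip(weights, post)
    ]

# Two presynaptic units feeding one postsynaptic unit; all weights start at zero.
weights = [[0.0, 0.0]]
pre = [1.0, 0.0]   # only the first input unit is active
post = [1.0]       # the output unit fires at the same time

# Repeated co-activation strengthens only the connection that was actually used.
for _ in range(5):
    weights = hebbian_update(weights, pre, post)

print(weights)
```

After the loop, the weight from the active input has grown while the weight from the silent input is still zero. The "memory" of the repeated pairing lives in the weights themselves rather than in any stored record of the inputs.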

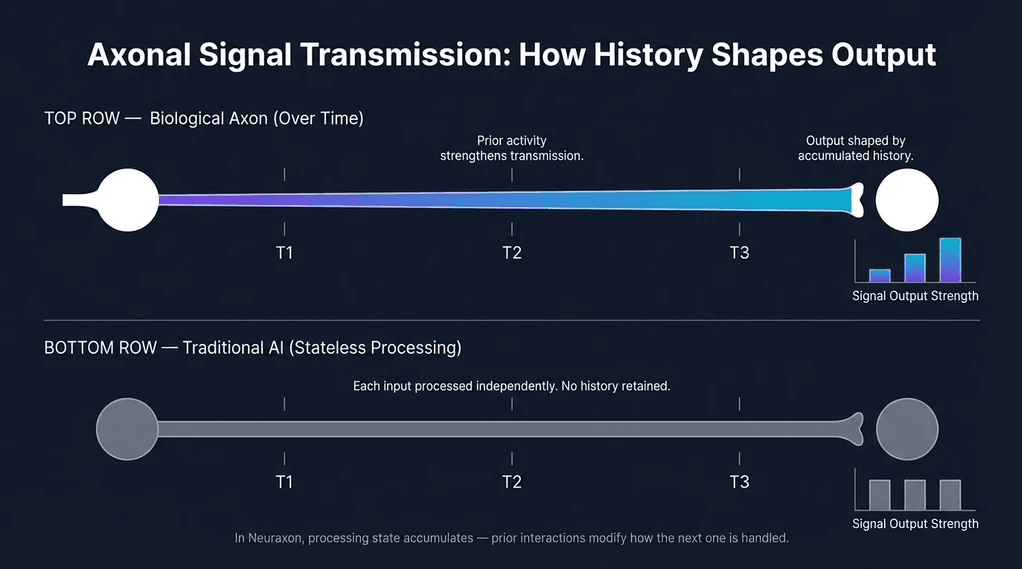

The Role of the Axon in Signal-Dependent Learning

In neuroscience, the axon is the transmission cable of a neuron -- but it is far more than a passive pipe. It controls the timing, strength, and destination of signals. How information flows through the axon directly shapes what gets learned, not just what gets transmitted.

Neuraxon draws from this concept architecturally. Rather than treating processing layers as independent, the system models how signals propagate across time. Prior activations influence subsequent computations, so that results accumulate based on history, not solely on the present input. This is closely aligned with what researchers describe as Long-Term Potentiation (LTP) -- the mechanism by which repeated stimulation produces a long-lasting strengthening of synaptic connections.
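One simple way to picture "prior activations influence subsequent computations" is a unit that carries a decaying trace of its past inputs. The sketch below is a generic leaky integrator, offered only as an illustration. The class name `LeakyUnit` and the decay value are assumptions, and nothing here is taken from Neuraxon's code.

```python
# Illustrative sketch: a unit whose output depends on input history.
# LeakyUnit and decay=0.8 are hypothetical choices for this example.

class LeakyUnit:
    def __init__(self, decay=0.8):
        self.decay = decay   # fraction of the previous state that survives each step
        self.state = 0.0     # running trace of past inputs

    def step(self, x):
        """Blend the decayed previous state with the new input and return the result."""
        self.state = self.decay * self.state + x
        return self.state

unit_a = LeakyUnit()
unit_b = LeakyUnit()

# Unit A has recently been stimulated; unit B has been idle.
for x in [1.0, 1.0, 1.0]:
    unit_a.step(x)
for x in [0.0, 0.0, 0.0]:
    unit_b.step(x)

# Both units now receive the identical present input.
out_a = unit_a.step(1.0)
out_b = unit_b.step(1.0)
print(out_a > out_b)
```

The two units see the same final input but respond differently, because unit A still carries a trace of what came before.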

Experience Instead of Retraining: The Case for Lifelong Learning AI

Conventional AI development requires periodic retraining. New data arrives, engineers retune weights, and the updated model gets redeployed. Each retraining cycle is a reset -- a kind of amnesia that happens to come with improvements.

Children do not relearn language from scratch each time they hear a new word. Each new input builds on the last, and understanding deepens continuously without interruption. This is what researchers refer to as lifelong learning -- a property that Intel's neuromorphic research program has specifically cited as a core goal for next-generation AI architectures.

In Neuraxon's model, inference is learning. The two are not separated. Each input leaves a trace; each interaction adjusts the structure that processes the next one.
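To make "inference is learning" concrete, here is a minimal sketch of an online learner in which every call that produces a prediction also adjusts the weights used for the next call. It uses a standard least-mean-squares update and assumes a feedback signal (`target`) is available after each prediction. The class `OnlineLinearModel` and its method names are hypothetical and do not describe Neuraxon's actual mechanism.

```python
# Illustrative sketch: prediction and weight update happen in the same call.
# OnlineLinearModel, infer_and_learn, and the feedback signal are assumptions.

class OnlineLinearModel:
    def __init__(self, n_inputs, learning_rate=0.1):
        self.weights = [0.0] * n_inputs
        self.learning_rate = learning_rate

    def predict(self, x):
        """Weighted sum of the inputs using the current weights."""
        return sum(w * xi for w, xi in zip(self.weights, x))

    def infer_and_learn(self, x, target):
        """Return a prediction, then nudge the weights toward the observed target."""
        prediction = self.predict(x)
        error = target - prediction
        self.weights = [
            w + self.learning_rate * error * xi
            for w, xi in zip(self.weights, x)
        ]
        return prediction

model = OnlineLinearModel(n_inputs=2)

# The same input arrives repeatedly; there is no separate training phase.
predictions = [model.infer_and_learn([1.0, 0.0], target=2.0) for _ in range(50)]
print(predictions[0], predictions[-1])
```

The first prediction is 0.0 because the model starts with no experience, and later predictions move toward 2.0 as each interaction leaves its trace in the weights. A frozen model would keep returning its first answer.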

Why Persistent AI Memory Changes Everything

True understanding requires durable connections. When those connections degrade or reset, what remains is sophisticated approximation -- not knowledge.

Today's AI simulates memory through limited input buffers and external vector databases. Facts can be retrieved on demand, but nothing truly settles inside the system's structure. The difference between retrieving stored facts and forming internalized understanding is the same difference that separates a glossary from genuine comprehension.

This gap has been documented extensively in research on neuromorphic computing architectures, where in-memory computing and persistent synaptic state are considered foundational to building systems that do not just perform tasks but actually learn from them.

Neuraxon's approach targets this gap directly: memory is encoded into the architecture, not bolted on as an external retrieval layer.

A Brain Needs Metabolism -- Why Continuous Computation Matters

The brain never fully powers down. Even at rest, neurons remain active. Thought persists because underlying activity persists. Any system aspiring to brain-like intelligence must, at some level, mirror this continuous computational metabolism.

This creates a genuine infrastructure challenge. Conventional computers are architected for discrete, finite tasks -- a job starts, runs, and ends. Intelligence that continuously updates its own structure needs a substrate that does not stop between tasks.

Some distributed computing networks are beginning to address this. Qubic's network is designed to keep computations running continuously rather than processing isolated, one-off workloads. Its Useful Proof of Work architecture is a model that aligns naturally with the demands of always-on AI systems that need a persistent environment to learn within.

This alignment goes deeper than infrastructure. When intelligence is continuously changing, it needs a computational base that does not treat each interaction as a clean-slate transaction.

From Software to Organism: Rethinking What AI Is

Programs run and terminate. Organisms develop and persist. What separates them is not capability -- it is duration and the capacity to change from within.

In Neuraxon's model, when the AI encounters new situations, its structure evolves. There are no version numbers, no reinstallation cycles. The system develops rather than being redeployed. This is the distinction that researchers writing in Nature Communications describe as the defining characteristic of commercial-grade neuromorphic systems: continuous real-time operation as a native property, not an add-on.

Implications for the Future of AI Development

If learning is driven by lived experience rather than data exposure alone, then simply scaling up model size will have diminishing returns for producing genuine intelligence. Bigger networks guess better -- but they still do not know why.

What persists shapes what follows. In a system with true structural memory, every prior experience influences the next. This sensitivity to history is what makes biological cognition so powerful and so difficult to replicate through conventional deep learning architectures.

Neuraxon represents one approach to closing that gap: not by mimicking neurons at the cellular level, but by applying the organizing principles of biological cognition (growth, persistence, and structural adaptation) to the design of AI systems.

Conclusion: When AI Starts Feeling Like a Mind

The question is no longer whether machines can perform. They clearly can. The more interesting question is whether they can genuinely learn -- whether what happens during an interaction leaves a lasting structural trace that shapes every future interaction.

That is what Neuraxon is attempting: building artificial intelligence that grows the way biological intelligence grows. Not by copying neurons, but by applying the principle that knowledge is architecture -- that understanding changes the structure of the thing that understands.

When AI learns without restarting, artificial systems begin to feel less like tools and more like minds. Neuraxon is one project exploring what that could actually look like -- and the infrastructure that would make it possible.
